Locally Coherent Parallel Decoding in Diffusion Language Models

arXiv cs.CL / 3/24/2026

Key Points

- The paper discusses diffusion language models (DLMs) as an alternative to autoregressive (AR) models, focusing on how discrete DLMs can achieve latency that grows sub-linearly with sequence length by predicting multiple tokens in parallel.

- It identifies a key limitation of standard parallel sampling in DLMs: sampling each token independently from its per-position marginal distribution ignores the joint dependencies between tokens decoded in the same step, causing syntactic inconsistencies and malformed multi-token structures.

- The authors propose CoDiLA (Coherent Diffusion with Local Autoregression), which preserves parallel block generation while enforcing local sequential validity through a small auxiliary autoregressive model that operates on the diffusion model's latents.

- CoDiLA aims to maintain the core DLM strengths, including bidirectional modeling across blocks, while delegating fine-grained coherence to the auxiliary AR component.

- Experiments show that a compact auxiliary AR model (around 0.6B parameters) largely eliminates coherence artifacts and improves the accuracy-speed tradeoff on code generation benchmarks; the authors claim this establishes a new Pareto frontier.
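The failure mode described in the second point, and the kind of local sequential fix CoDiLA targets, can be seen in a toy two-token example. This is a hypothetical illustration, not the paper's method: the joint distribution `JOINT` and every function name are invented here, and the auxiliary AR model is replaced by the exact conditional of that hand-written joint, with no diffusion latents involved. Sampling each position independently from its marginal mixes the two valid spans into invalid ones about half the time, while sampling the second token conditioned on the first never produces an invalid span.

```python
import random
from collections import Counter

# Hypothetical joint distribution over a two-token span (toy example, not from the paper).
# Only "New York" and "San Francisco" are well-formed; mixed pairs have zero probability.
JOINT = {
    ("New", "York"): 0.5,
    ("San", "Francisco"): 0.5,
}


def marginals(joint):
    """Per-position marginals: all a fully parallel, independent sampler uses."""
    first, second = Counter(), Counter()
    for (a, b), p in joint.items():
        first[a] += p
        second[b] += p
    return first, second


def sample(dist, rng):
    """Draw one token from an (unnormalized) token -> weight mapping."""
    tokens, weights = zip(*dist.items())
    return rng.choices(tokens, weights=weights, k=1)[0]


def parallel_sample(joint, rng):
    """Sample both positions independently from their marginals."""
    first, second = marginals(joint)
    return sample(first, rng), sample(second, rng)


def locally_sequential_sample(joint, rng):
    """Sample the first token, then the second conditioned on it (a stand-in for local AR)."""
    first, _ = marginals(joint)
    a = sample(first, rng)
    conditional = {b: p for (x, b), p in joint.items() if x == a}
    return a, sample(conditional, rng)


def invalid_rate(sampler, joint, n=10_000, seed=0):
    """Fraction of sampled spans that have zero probability under the joint."""
    rng = random.Random(seed)
    bad = sum(joint.get(sampler(joint, rng), 0.0) == 0.0 for _ in range(n))
    return bad / n


if __name__ == "__main__":
    print(invalid_rate(parallel_sample, JOINT))            # roughly 0.5
    print(invalid_rate(locally_sequential_sample, JOINT))  # 0.0
```

The toy keeps both samplers on the same marginals for the first token, so the only difference is whether the second token sees the first; that conditioning is the dependency independent parallel sampling discards.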

Related Articles

MCP Is Quietly Replacing APIs — And Most Developers Haven't Noticed Yet

Dev.to

I Built a Self-Healing AI Trading Bot That Learns From Every Failure

Dev.to

Stop Guessing Your API Costs: Track LLM Tokens in Real Time

Dev.to

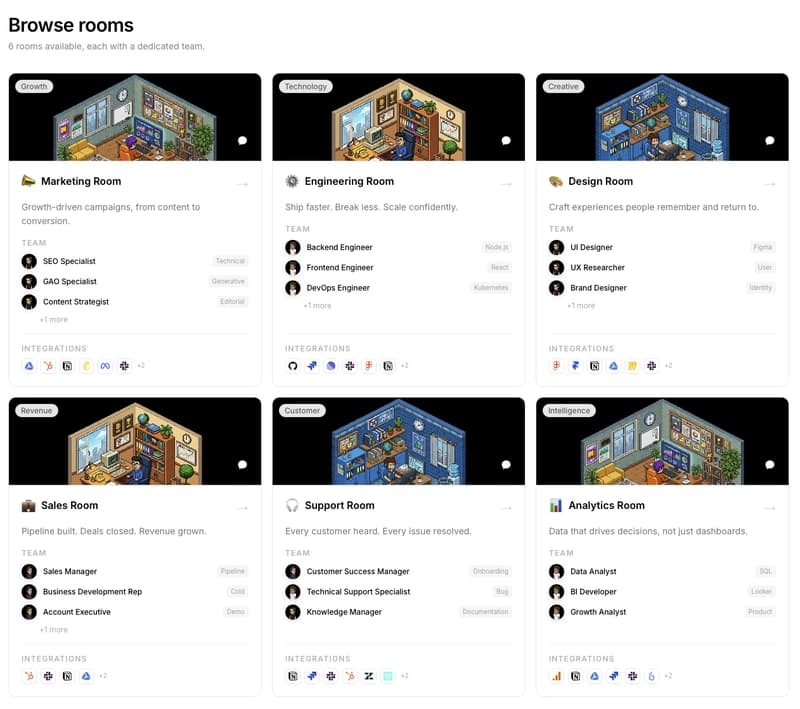

We are building PixelRooms! The marketplace of AI teams for thepixeloffice.ai

Dev.to

Every real estate agent tool worth your time in 2026, ranked and rated

Dev.to