Why MLLMs Struggle to Determine Object Orientations

arXiv cs.CV / 4/16/2026

💬 OpinionIdeas & Deep AnalysisModels & Research

Key Points

- The paper investigates why multimodal large language models (MLLMs) struggle with reasoning about 2D object orientations in images, building on prior hypotheses about visual encoder limitations.

- Using a controlled empirical protocol, the authors test whether orientation information is preserved in encoder embeddings by training linear regressors on features from SigLIP/Vit and CLIP-based setups in LLaVA and Qwen2.5-VL.

- Contrary to the null/accepted hypothesis, the study finds that object orientation can be recovered accurately from encoder representations using simple linear models.

- The results contradict the idea that orientation failures primarily stem from the visual encoder being unable to represent geometric orientation.

- The authors also observe that while orientation information exists, it is distributed diffusely across very large numbers of features, suggesting the issue may lie in how MLLMs/heads exploit or attend to that information rather than whether it is encoded.

Related Articles

The AI Hype Cycle Is Lying to You About What to Learn

Dev.to

Big Tech firms are accelerating AI investments and integration, while regulators and companies focus on safety and responsible adoption.

Dev.to

Inside NVIDIA’s $2B Marvell Deal: What NVLink Fusion Means for AI Ethernet Fabrics

Dev.to

Automating Your Literature Review: From PDFs to Data with AI

Dev.to

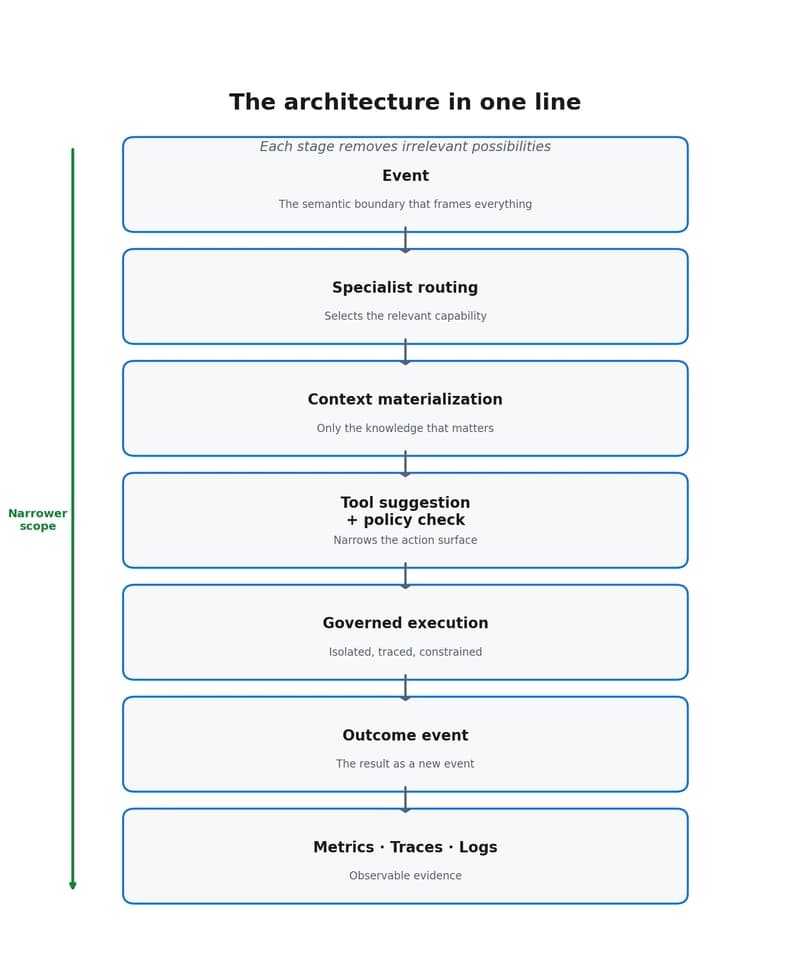

Why event-driven agents reduce scope, cost, and decision dispersion

Dev.to