JigsawRL: Assembling RL Pipelines for Efficient LLM Post-Training

arXiv cs.LG / 4/28/2026


Key Points

- JigsawRL is a cost-efficient RL post-training framework that introduces “Pipeline Multiplexing” as an additional dimension of RL parallelism to better utilize compute during LLM post-training.

- It decomposes RL pipelines into Sub-Stage Graphs to reveal intra-stage and inter-worker imbalances that are obscured by traditional stage-level systems.

- The framework mitigates multiplexing interference via dynamic resource allocation and improves utilization by migrating long-tail rollouts across workers.

- It coordinates migrated rollouts by casting the problem as graph scheduling, solved with a look-ahead heuristic.

- Experiments on 4–64 H100/A100 GPUs show throughput gains of up to 1.85× over Verl (synchronous RL) and 1.54× over StreamRL and AReaL (asynchronous RL), while supporting heterogeneous pipelines with acceptable latency trade-offs.
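To make the idea of migrating long-tail rollouts concrete, here is a minimal sketch of a greedy rebalancing pass. It is not JigsawRL's algorithm: the summary above does not specify the look-ahead heuristic, so the function names (`rebalance_rollouts`, `makespan`), the per-rollout "estimated remaining tokens" cost model, and the assumption of zero migration cost are all hypothetical and chosen only for illustration.

```python
from typing import Dict, List, Tuple

Load = Dict[str, List[int]]  # worker name -> estimated remaining tokens per rollout


def makespan(load: Load) -> int:
    """Time until the slowest worker finishes, under the toy cost model."""
    return max(sum(rollouts) for rollouts in load.values())


def rebalance_rollouts(load: Load) -> Tuple[List[Tuple[str, str, int]], Load]:
    """Greedily move rollouts from the busiest worker to the idlest one.

    Hypothetical illustration only: assumes migration is free and that
    remaining work per rollout is known in advance.
    """
    new_load = {worker: list(rollouts) for worker, rollouts in load.items()}
    moves: List[Tuple[str, str, int]] = []
    while True:
        busiest = max(new_load, key=lambda w: sum(new_load[w]))
        idlest = min(new_load, key=lambda w: sum(new_load[w]))
        gap = sum(new_load[busiest]) - sum(new_load[idlest])
        # A move helps only if the receiver still ends up below the sender's
        # current load, i.e. the rollout is strictly smaller than the gap.
        candidates = [r for r in new_load[busiest] if 0 < r < gap]
        if not candidates:
            return moves, new_load
        rollout = max(candidates)  # move the longest rollout that still helps
        new_load[busiest].remove(rollout)
        new_load[idlest].append(rollout)
        moves.append((busiest, idlest, rollout))


if __name__ == "__main__":
    before = {"w0": [9, 7, 2], "w1": [3], "w2": [4]}
    moves, after = rebalance_rollouts(before)
    print(makespan(before), "->", makespan(after))  # 18 -> 9
    print(moves)  # [('w0', 'w1', 9), ('w1', 'w2', 3)]
```

In this toy example one worker holds most of the long rollouts, and two migrations halve the time the other workers would otherwise spend idle. A real system would also have to account for the cost of moving rollout state between workers and for uncertainty in how long each rollout will run, which is where a scheduling formulation like the one described above becomes relevant.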


Related Articles

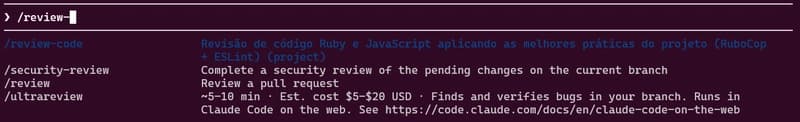

how to use skills from Claude Code A.K.A Claudinho.

Dev.to

Behind the Scenes of a Self-Evolving AI: The Architecture of Tian AI

Dev.to

Meet Tian AI: Your Completely Offline AI Assistant for Android

Dev.to

UK to develop AI hardware plan

Tech.eu

Copilot Cowork | The Control Plane for Long-Running AI Work | A Rahsi Framework™

Dev.to