Just came across this memo from the Office of Science and Technology Policy. The main point seems to be concern about large-scale extraction of model capabilities using proxy accounts and jailbreak techniques: basically, industrialized distillation of frontier models.

This feels less about open source directly and more about protecting proprietary models, but the bigger question is: if governments start treating model weights and capabilities as strategic assets, where does that leave open models?

On one hand, open models drive innovation and accessibility, and a lot of progress in this community comes from that openness. On the other hand, if capability extraction becomes a national security concern, there could be pressure to limit what gets released or how.

US gov memo on “adversarial distillation” - are we heading toward tighter controls on open models?

Reddit r/LocalLLaMA / 4/24/2026

💬 Opinion, Signals & Early Trends, Ideas & Deep Analysis, Industry & Market Moves

Key Points

- A U.S. Office of Science and Technology Policy memo raises concerns about “adversarial distillation,” where proxy accounts and jailbreak techniques are used to industrialize the extraction of a model’s capabilities.

- The discussion suggests the policy focus may be less about “open source” models themselves and more about protecting proprietary or frontier models from capability leakage.

- The memo prompts the broader question of whether governments may start treating model weights and capabilities as strategic national-security assets.

- If capability extraction is deemed a national security risk, the post suggests there could be pressure to restrict what is released or to control how model capabilities are distributed, potentially affecting open models.

- The piece frames a tension between open models that support innovation and accessibility and the possibility of tighter controls if security concerns escalate.

💡 Insights using this article

This article is featured in our daily AI news digest — key takeaways and action items at a glance.

Related Articles

Black Hat USA

AI Business

AI productivity tools 2026: top 10 tools for remote teams

Dev.to

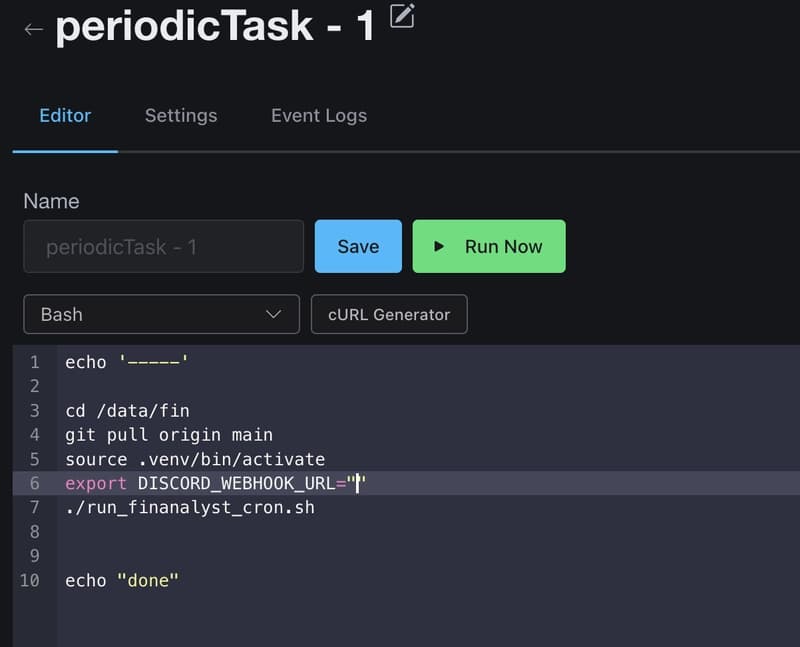

How I Use GitHub Copilot + RapidForge to Generate Daily Stock Ideas

Dev.to

Big Tech firms are accelerating AI investments and integration, while regulators and companies focus on safety and responsible adoption.

Dev.to

Anthropic CVP Run 3 — Does Claude's Safety Stack Scale Down to Haiku 4.5?

Dev.to