Routing Sensitivity Without Controllability: A Diagnostic Study of Fairness in MoE Language Models

arXiv cs.CL / 3/31/2026


Key Points

- Routing-level preference shifts are unachievable in some models (e.g., Mixtral, Qwen1.5, Qwen3), non-robust in others (e.g., DeepSeekMoE), or come with notable utility tradeoffs (e.g., OLMoE shows utility drops alongside preference changes).

Related Articles

How to Verify Information Online and Avoid Fake Content

Dev.to

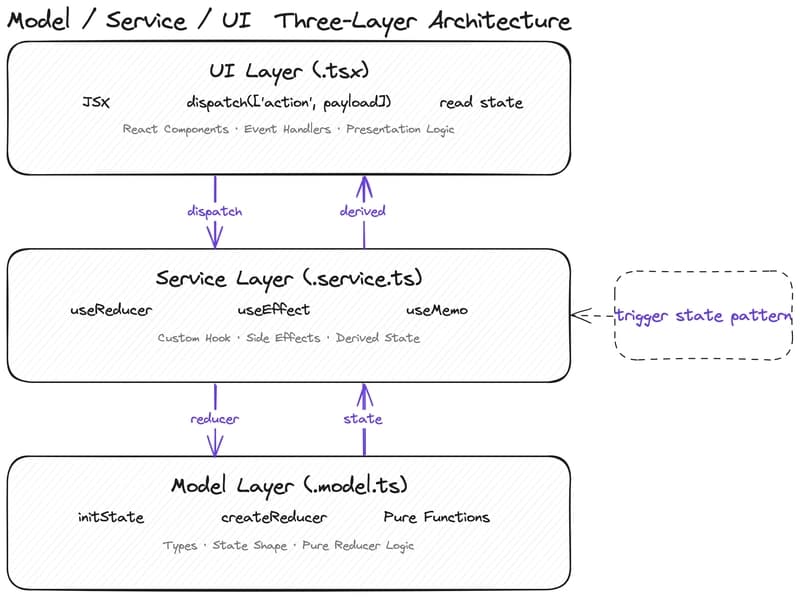

Why Your State Management Is Slowing Down AI-Assisted Development

Dev.to

From Black Box to Trusted Tool: Quality Control for AI in Literature Reviews

Dev.to

Fake users generated by AI can't simulate humans — review of 182 research papers. Your thoughts?

Reddit r/artificial

[D] ICPR Decision Discussion

Reddit r/MachineLearning