Better with Less: Tackling Heterogeneous Multi-Modal Image Joint Pretraining via Conditioned and Degraded Masked Autoencoder

arXiv cs.CV / 4/21/2026

📰 NewsModels & Research

Key Points

- The paper addresses the difficulty of joint pretraining for heterogeneous high-resolution optical and SAR (synthetic aperture radar) images, focusing on a “heterogeneity–resolution paradox” that causes negative transfer when models use rigid alignment.

- It introduces CoDe-MAE, a “better synergy with less alignment” approach that combines multiple techniques to avoid either feature suppression or feature contamination.

- Optical-anchored Knowledge Distillation (OKD) regularizes SAR by mapping speckle/noise toward a cleaner semantic manifold, improving robustness.

- Conditioned Contrastive Learning (CCL) aligns only the shared consensus using a gradient buffering mechanism while preserving meaningful physical differences between modalities.

- Cross-Modal Degraded Reconstruction (CDR) removes non-homologous spectral pseudo-features to make the learning target more well-posed and to capture structural invariants; with 1M pretraining samples, the method claims state-of-the-art results and strong data efficiency versus larger scaled foundation models.

Related Articles

No Free Lunch Theorem — Deep Dive + Problem: Reverse Bits

Dev.to

Salesforce Headless 360: Run Your CRM Without a Browser

Dev.to

RAG Systems in Production: Building Enterprise Knowledge Search

Dev.to

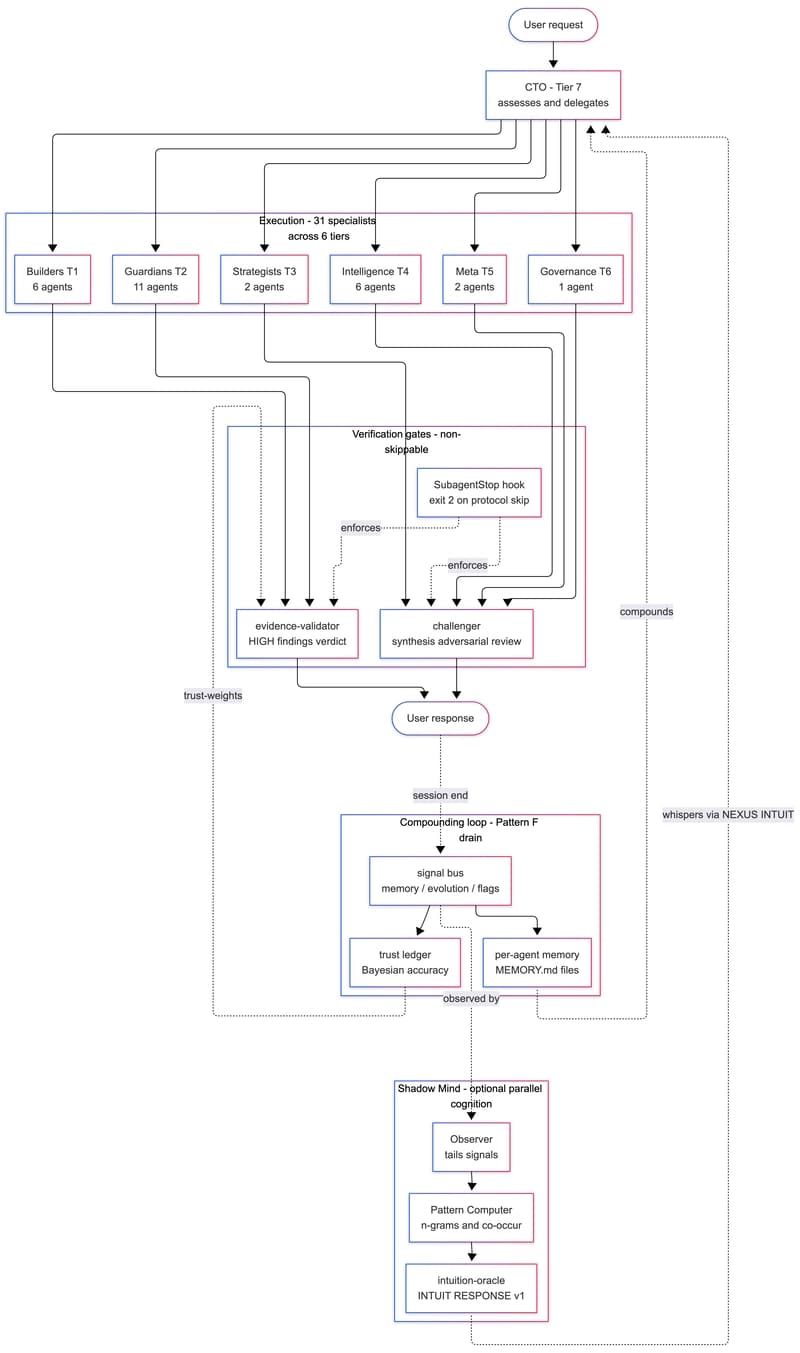

We Built a 31-Agent AI Team That Hires Itself, Critiques Itself, and Dreams

Dev.to

gpt-image-2 API: ship 2K AI images in Next.js for $0.21 (2026)

Dev.to