Streamlining generative AI development with MLflow v3.10 on Amazon SageMaker AI

Amazon AWS AI Blog / 5/6/2026


Key Points

- Amazon SageMaker AI MLflow Apps have added support for MLflow 3.10 to enhance generative AI development workflows and improve experiment tracking.

- MLflow 3.10 upgrades tracing and observability for complex multi-turn, agentic workflows, with better integration across popular LLM frameworks and streamlined logging for GenAI interactions.

- A major evaluation improvement is the mlflow.genai.evaluate() API, which enables programmatic, systematic measurement of generative AI quality using metrics such as relevance, faithfulness, correctness, and safety.

- The release strengthens observability with more granular trace filtering/search, richer metadata for debugging and root-cause analysis, and pre-built performance dashboards that surface latency, request volume, quality scores, and token usage/costs.

- The post also covers how to get started with SageMaker AI MLflow Apps and use these enhancements to move generative AI projects from experimentation to production.
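To make the idea of programmatic quality measurement in the key points more concrete, here is a minimal plain-Python sketch of scorer-based evaluation. It does not call MLflow and does not reflect how MLflow's evaluation API is implemented. The names `Record`, `correctness`, `relevance`, and `evaluate_records` are hypothetical, and the two scorers are deliberately naive stand-ins for real metrics such as LLM-judged correctness or relevance.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Callable, Dict, List


@dataclass
class Record:
    """One evaluated interaction (hypothetical schema for this sketch)."""
    question: str
    answer: str
    expected: str


def correctness(r: Record) -> float:
    # Naive stand-in: 1.0 if the expected phrase appears in the answer.
    return 1.0 if r.expected.lower() in r.answer.lower() else 0.0


def relevance(r: Record) -> float:
    # Naive stand-in: fraction of question words that reappear in the answer.
    q = set(r.question.lower().split())
    a = set(r.answer.lower().split())
    return len(q & a) / len(q) if q else 0.0


def evaluate_records(
    records: List[Record],
    scorers: Dict[str, Callable[[Record], float]],
) -> Dict[str, float]:
    # Apply every scorer to every record and report the mean score per metric.
    return {name: mean(fn(r) for r in records) for name, fn in scorers.items()}


if __name__ == "__main__":
    sample = [
        Record("What is MLflow", "MLflow is an open source platform", "open source"),
        Record("What is SageMaker", "It is a database", "machine learning"),
    ]
    print(evaluate_records(sample, {"correctness": correctness, "relevance": relevance}))
```

The design point is that each metric is an ordinary function over a record, so the same dataset can be re-scored whenever a prompt or model changes, and the aggregate scores can be compared across runs.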

Today, we’re excited to announce that Amazon SageMaker AI MLflow Apps now support MLflow version 3.10, bringing enhanced capabilities for generative AI development and streamlined experiment tracking to your generative AI workflows. Building on the foundations established with Amazon SageMaker AI MLflow Apps, this latest version introduces powerful new features for observability, evaluation, and generative […]

Continue reading this article on the original site.

Read original →Related Articles

Black Hat USA

AI Business

Transform Your Blurry Photos into HD Masterpieces, Instantly!

Dev.to

6 New Moats for AI Agent Infrastructure — Trust Score, Deployment, SLA, Identity, Compliance-as-Code

Dev.to

Google Home’s Gemini AI can handle more complicated requests

The Verge

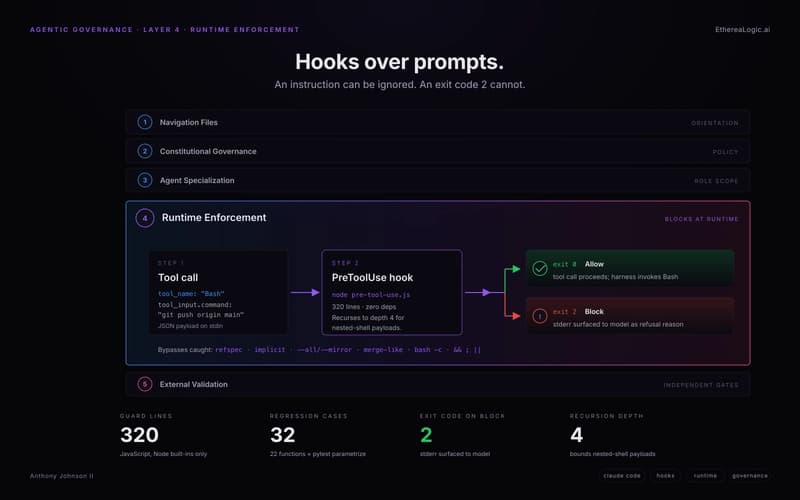

Exit Code 2: How Claude Hooks Turn Agentic Rules Into Runtime Barriers

Dev.to