Generative Augmented Inference

arXiv cs.LG / 4/17/2026


Key Points

- The paper introduces Generative Augmented Inference (GAI), a framework that uses LLM-generated outputs as features when estimating models whose outcomes are measured with expensive human labels.

- Unlike standard proxy approaches that treat AI predictions as direct substitutes for true labels, GAI is designed to remain reliable even when the relationship between AI outputs and human labels is weak, complex, or misspecified.

- Using an orthogonal moment construction, GAI enables consistent estimation and valid inference with flexible, nonparametric relationships between LLM signals and human labels.

- The authors prove asymptotic normality and a “safe default” property: GAI cannot worsen performance versus human-data-only baselines and improves efficiency when auxiliary signals are predictive.

- Experiments in conjoint analysis, retail pricing, and health insurance choice show large reductions in human labeling needs while maintaining or improving decision accuracy and confidence interval coverage (e.g., a 50% error reduction and a 75%+ label reduction in conjoint analysis, and a 90%+ label reduction in health insurance choice).
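
To make the idea in the points above concrete, here is a minimal sketch of a residual-corrected estimator for a simple mean. It is not the paper's GAI estimator, only a toy illustration of the general pattern the summary describes: predict the human label from the LLM signal, average those predictions over a large unlabeled pool, then correct with held-out residuals from the small labeled sample. The function names `augmented_mean` and `compare_mse`, the polynomial fit, the two-fold split, and the simulated data are all assumptions made for this example.

```python
import numpy as np


def augmented_mean(y, s, s_pool, degree=2, seed=0):
    """Estimate E[Y] from a few human labels y (with LLM signals s)
    plus a large pool of LLM signals s_pool that has no human labels.

    Hypothetical helper for illustration; not taken from the paper.
    """
    rng = np.random.default_rng(seed)
    folds = np.array_split(rng.permutation(len(y)), 2)
    fold_estimates = []
    for k in range(2):
        test, train = folds[k], folds[1 - k]
        # Fit a flexible map g(S) ~ E[Y | S] on one fold (assumed: polynomial).
        coefs = np.polyfit(s[train], y[train], deg=degree)
        # Plug-in term: average prediction over the cheap unlabeled pool.
        plug_in = np.polyval(coefs, s_pool).mean()
        # Correction term: held-out residuals offset any bias in g.
        correction = (y[test] - np.polyval(coefs, s[test])).mean()
        fold_estimates.append(plug_in + correction)
    return float(np.mean(fold_estimates))


def compare_mse(n_labeled=100, n_pool=20_000, reps=200, seed=1):
    """Simulated comparison against the human-labels-only sample mean."""
    rng = np.random.default_rng(seed)
    true_mean = 1.5  # E[1 + 2S + 0.5 S^2] for S ~ N(0, 1)
    err_aug, err_lab = [], []
    for _ in range(reps):
        s = rng.normal(size=n_labeled)       # LLM signal, labeled units
        s_pool = rng.normal(size=n_pool)     # LLM signal, unlabeled units
        noise = rng.normal(scale=0.5, size=n_labeled)
        y = 1 + 2 * s + 0.5 * s**2 + noise   # simulated human labels
        err_aug.append(augmented_mean(y, s, s_pool) - true_mean)
        err_lab.append(y.mean() - true_mean)
    mse_aug = float(np.mean(np.square(err_aug)))
    mse_lab = float(np.mean(np.square(err_lab)))
    return mse_aug, mse_lab
```

Calling `compare_mse()` returns the mean squared error of the augmented estimate and of the plain labeled-sample mean on the simulated data. In this toy setting the correction term is what keeps the estimate centered even if the fitted map is poor, while a predictive signal shrinks the variance; the paper's orthogonal moment construction addresses the same concern for general models rather than a single mean.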

Related Articles

langchain-anthropic==1.4.1

LangChain Releases

Talk to Your Favorite Game Characters! Mantella Brings AI to Skyrim and Fallout 4 NPCs

Dev.to

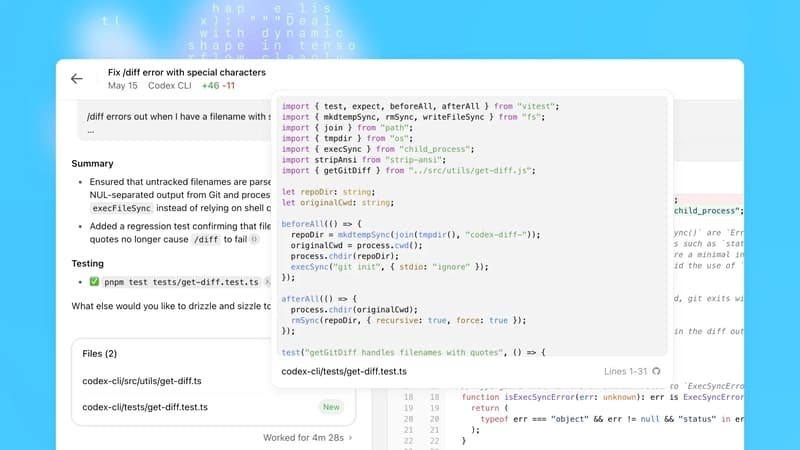

OpenAI Codex Update Adds macOS Agent, Browser, Memory; 3M Weekly Users

Dev.to

1.14.2

CrewAI Releases

Should my enterprise AI agent do that? NanoClaw and Vercel launch easier agentic policy setting and approval dialogs across 15 messaging apps

VentureBeat