Improving Facial Emotion Recognition through Dataset Merging and Balanced Training Strategies

arXiv cs.CV / 4/23/2026

📰 News | Models & Research

Key Points

- The paper proposes a framework based on deep convolutional networks for automatic facial emotion recognition.

- It improves generalization and robustness by merging three public datasets (CK+, FER+, and KDEF) to expand the training data.

- Even after merging, minority emotion classes remain underrepresented, so the authors apply online/offline augmentation and random weighted sampling to reduce data imbalance.

- Experiments report 82% accuracy on seven basic emotions, suggesting that dataset merging combined with balanced training mitigates class imbalance and improves performance.
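
To make the random weighted sampling idea concrete, here is a minimal sketch in plain Python. It assumes inverse class-frequency weights, which is a common choice but is not confirmed by the summary as the authors' exact scheme. The function names, the label list, and the class counts are hypothetical and only serve the illustration.

```python
import random
from collections import Counter


def inverse_frequency_weights(labels):
    """Assign each sample a weight of 1 / (number of samples in its class)."""
    counts = Counter(labels)
    return [1.0 / counts[y] for y in labels]


def weighted_sample_indices(labels, num_samples, seed=0):
    """Draw indices with replacement so each class is equally likely per draw."""
    rng = random.Random(seed)
    weights = inverse_frequency_weights(labels)
    return rng.choices(range(len(labels)), weights=weights, k=num_samples)


# Hypothetical, heavily imbalanced labels (not the paper's data).
labels = ["happy"] * 900 + ["neutral"] * 80 + ["disgust"] * 20

indices = weighted_sample_indices(labels, num_samples=6000)
resampled = Counter(labels[i] for i in indices)
print(resampled)  # class counts come out roughly equal
```

Because every class's weights sum to 1, each draw picks any class with equal probability, so minority classes appear about as often as majority ones within an epoch. In a PyTorch pipeline the same per-sample weights could be passed to `torch.utils.data.WeightedRandomSampler`; the summary does not say which implementation the authors used.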

Related Articles

Why don't Automatic speech Recognition models use prompting? [D]

Reddit r/MachineLearning

CoTracker3: Simpler and Better Point Tracking by Pseudo-Labelling Real Videos

Dev.to

My AI Agent Over-Corrected Itself — So I Built Metabolic Regulation

Dev.to

Building Agent Arena: Using Valkey as the Nervous System for Multi-Agent AI

Dev.to

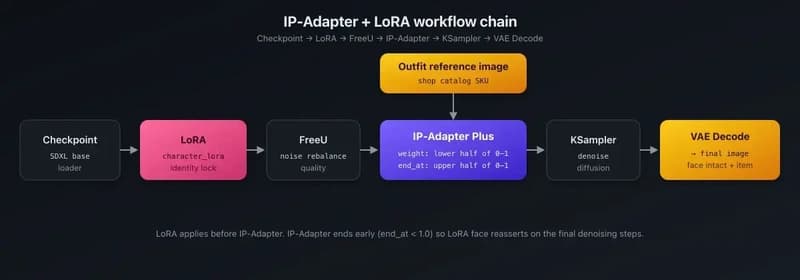

IP-Adapter + LoRA for product catalog rendering — putting shop items on AI characters

Dev.to