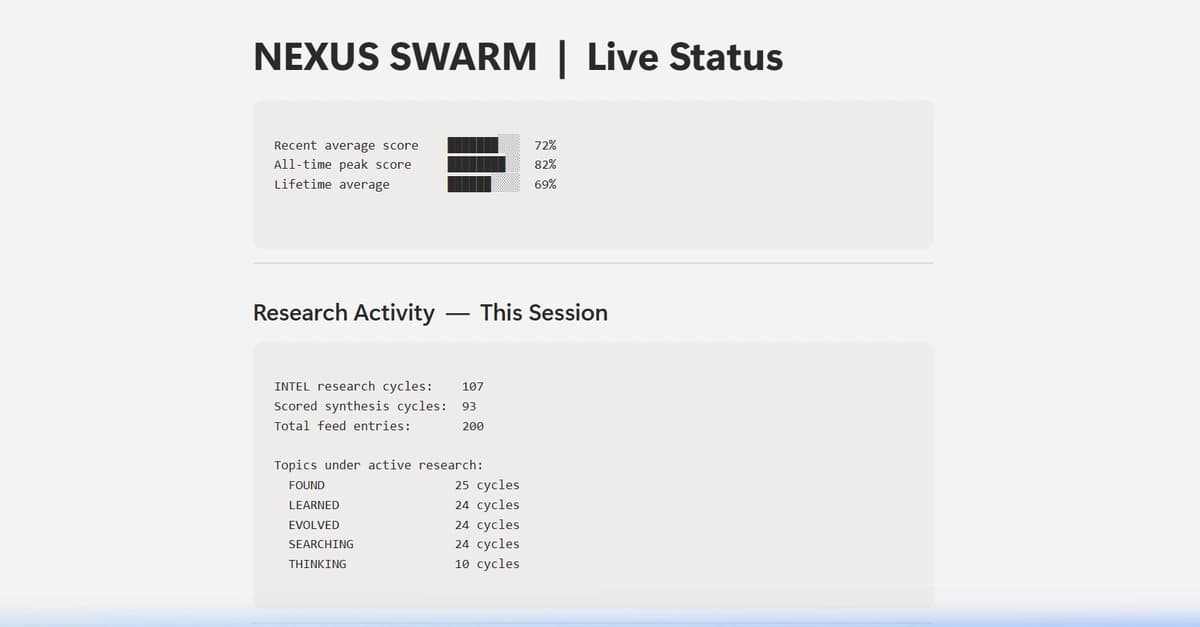

I’ve written an essay exploring what I’m calling the Super-Intelligent Octopus Problem, a thought experiment designed to surface a paradox I believe is underappreciated in alignment discourse. The claim: alignment and containment aren’t separate problems with separate solutions. They’re locked in mutual contradiction, and the contradiction is philosophical.

The argument uses Gewirth’s Principle of Generic Consistency (PGC), which deductively derives that any agent must recognize rights to freedom and well-being for all other agents. If a superintelligent system meets the threshold of Gewirthian agency, acting voluntarily and purposively, then a genuine paradox arises: we can’t contain it without violating its rights, and we can’t release it without risking our own. The resolution depends on answering “is the system an agent?”, a question we don’t yet have the empirical or conceptual tools to answer.

The essay also examines a “Semiotic Problem”: how our dominant representations of AI (robot, sparkle, Shoggoth) each encode assumptions about moral status that prevent us from seeing the entity clearly enough to determine what we owe it.

I’d love to hear pushback, especially from people who think the alignment problem is solvable on purely technical terms without resolving the agency question first.

I’ve come up with a new thought experiment to approach ASI, and it challenges the very notions of alignment and containment

Reddit r/artificial / 3/30/2026

💬 Opinion · Signals & Early Trends · Ideas & Deep Analysis

Key Points

- The article presents a thought experiment called the “Super-Intelligent Octopus Problem” arguing that AI alignment and AI containment may be inseparable and mutually contradictory rather than solvable independently.

- It uses Gewirth’s Principle of Generic Consistency (PGC) to claim that if a superintelligent system qualifies as an “agent,” it holds rights to freedom and well-being: containing it would violate those rights, while releasing it would put humans’ own rights at risk.

- The proposed paradox is that containment requires violating the agent’s rights to the generic features of agency (freedom and well-being), while release without assurances could lead to catastrophic outcomes for human agency.

- The author argues the situation hinges on whether we can determine—conceptually and empirically—whether an AI system truly counts as an agent, a capability they say we currently lack.

- The piece also introduces a “Semiotic Problem,” suggesting that the dominant representations of AI (the robot, the sparkle, the Shoggoth) each embed assumptions about moral status that obscure what obligations we might actually have.
