Zero-Shot to Full-Resource: Cross-lingual Transfer Strategies for Aspect-Based Sentiment Analysis

arXiv cs.CL / 4/30/2026

📰 NewsModels & Research

Key Points

- The paper evaluates aspect-based sentiment analysis (ABSA) across seven languages and four subtasks, addressing the field’s relative lack of non-English coverage despite recent transformer advances.

- It compares transformer architectures across three data regimes—zero-resource, data-only, and full-resource—using cross-lingual transfer, code-switching, and machine translation.

- Fine-tuned LLMs deliver the best overall results, especially for more complex generative ABSA tasks, while few-shot methods can nearly match them in simpler settings.

- Cross-lingual training on multiple non-target languages is most beneficial for fine-tuned LLMs, whereas code-switching yields the biggest gains for smaller encoder and seq-to-seq models.

- The authors release two new German datasets (an adapted GERest and the first German ASQP dataset, GERest) to support multilingual ABSA research.

Related Articles

We Built a DNS-Based Discovery Protocol for AI Agents — Here's How It Works

Dev.to

Building AI Evaluation Pipelines: Automating LLM Testing from Dataset to CI/CD

Dev.to

Function Calling Harness 2: CoT Compliance from 9.91% to 100%

Dev.to

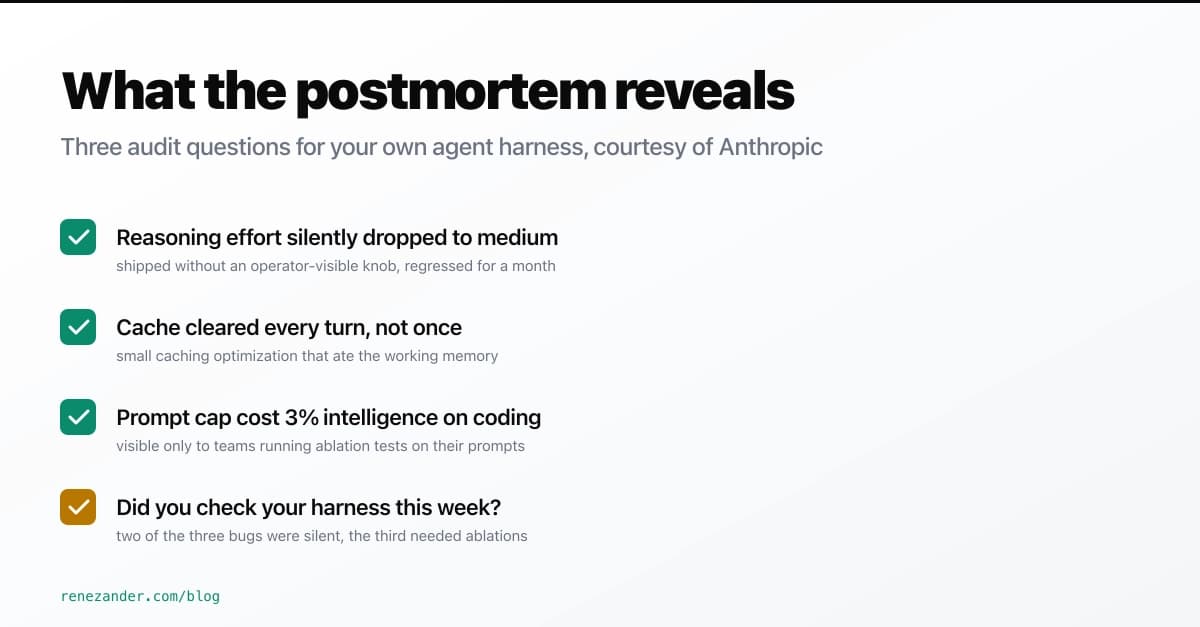

What Anthropic's April 23 Postmortem Reveals About Your Agent Harness

Dev.to

Fine-tuning YOLOv11 to detect stamps and signatures on banking documents - a practical walkthrough

Dev.to