Event-based SLAM Benchmark for High-Speed Maneuvers

arXiv cs.RO / 4/28/2026

Key Points

- The paper argues that current event-based SLAM and odometry methods reduce motion blur but still have important gaps in handling arbitrary aggressive maneuvers, particularly once restrictive assumptions such as constant visibility or pure 3-DoF rotation no longer hold.

- It analyzes state-of-the-art event-based visual odometry and visual-inertial odometry approaches and identifies shortcomings in existing public datasets regarding the realism and coverage of aggressive motion and sensing conditions.

- To address these gaps, the authors introduce EvSLAM, an event-based benchmarking framework that rigorously defines high-speed maneuvers and covers diverse platforms, extreme lighting, and challenging motion patterns.

- The framework also proposes a new evaluation metric intended to fairly measure the operational limits of event-based solutions and to reveal which architectures perform best under these stress cases.

Related Articles

Write a 1,200-word blog post: "What is Generative Engine Optimization (GEO) and why SEO teams need it now"

Dev.to

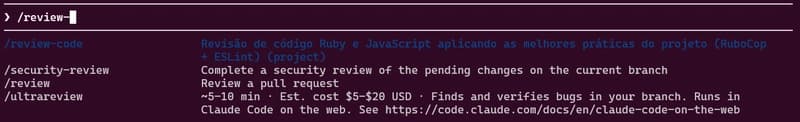

how to use skills from Claude Code A.K.A Claudinho.

Dev.to

Indian Developers: How to Build AI Side Income with $0 Capital in 2026

Dev.to

Most People Use AI Like Google. That's Why It Sucks.

Dev.to

Behind the Scenes of a Self-Evolving AI: The Architecture of Tian AI

Dev.to