Co-distilled attention guided masked image modeling with noisy teacher for self-supervised learning on medical images

arXiv cs.CV / 4/17/2026

Key Points

- The paper proposes an attention-guided masking strategy for masked image modeling (MIM) tailored to medical images, aiming to reduce information leakage caused by random masking in locally similar contexts.

- Because Swin transformers lack a global [CLS] token, the authors introduce a co-distillation framework that selectively masks semantically co-occurring, discriminative patches to make self-supervised pretraining harder and more effective.

- The authors identify a key limitation of attention-guided masking: it reduces diversity across attention heads, which can hurt downstream performance.

- To overcome this, they add a “noisy teacher” to the co-distillation setup; the resulting method, DAGMaN, still performs attentive masking while maintaining high attention-head diversity.

- Experiments across multiple medical imaging tasks (lung nodule classification, immunotherapy outcome prediction, tumor segmentation, and organ clustering) demonstrate DAGMaN’s effectiveness as a self-supervised learning approach.
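
To make the contrast between random and attention-guided masking concrete, here is a minimal Python sketch. It is not the paper's implementation: the function names, the top-k selection rule, and the cosine-distance diversity measure are all assumptions chosen for illustration, and the per-patch scores stand in for whatever attention signal the teacher network provides.

```python
import math
import random
from typing import List, Sequence


def random_mask(num_patches: int, mask_ratio: float, seed: int = 0) -> List[bool]:
    """Baseline: mask a uniformly random subset of patches (True = masked)."""
    rng = random.Random(seed)
    num_masked = int(round(mask_ratio * num_patches))
    masked = set(rng.sample(range(num_patches), num_masked))
    return [i in masked for i in range(num_patches)]


def attention_guided_mask(scores: Sequence[float], mask_ratio: float) -> List[bool]:
    """Mask the highest-scoring patches (True = masked).

    `scores` is a hypothetical per-patch attention score, e.g. a teacher's
    attention averaged over heads. Top-k selection is an illustrative
    assumption, not the paper's exact procedure.
    """
    if not 0.0 <= mask_ratio <= 1.0:
        raise ValueError("mask_ratio must be in [0, 1]")
    num_masked = int(round(mask_ratio * len(scores)))
    # Rank patch indices by descending score; sorted() is stable, so ties
    # keep their original index order.
    ranked = sorted(range(len(scores)), key=lambda i: -scores[i])
    masked = set(ranked[:num_masked])
    return [i in masked for i in range(len(scores))]


def head_diversity(head_maps: Sequence[Sequence[float]]) -> float:
    """Mean pairwise cosine distance between per-head attention maps.

    An assumed proxy for "attention-head diversity": 0.0 means all heads
    attend identically, larger values mean the heads differ more.
    Requires at least two heads, each with a non-zero map.
    """
    def cosine(a: Sequence[float], b: Sequence[float]) -> float:
        dot = sum(x * y for x, y in zip(a, b))
        norm_a = math.sqrt(sum(x * x for x in a))
        norm_b = math.sqrt(sum(y * y for y in b))
        return dot / (norm_a * norm_b)

    pairs = [
        (i, j)
        for i in range(len(head_maps))
        for j in range(i + 1, len(head_maps))
    ]
    return sum(1.0 - cosine(head_maps[i], head_maps[j]) for i, j in pairs) / len(pairs)
```

With scores `[0.1, 0.9, 0.3, 0.8]` and a mask ratio of 0.5, `attention_guided_mask` hides the two most-attended patches (indices 1 and 3), whereas `random_mask` may leave them visible. `head_diversity` gives one simple way to check whether heads have collapsed onto the same patches, which is the failure mode the summary attributes to attention-guided masking.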

Related Articles

I Added a Stopwatch to My AI in 1 LOC Using the Livingrimoire While Corporations Need a Year

Dev.to

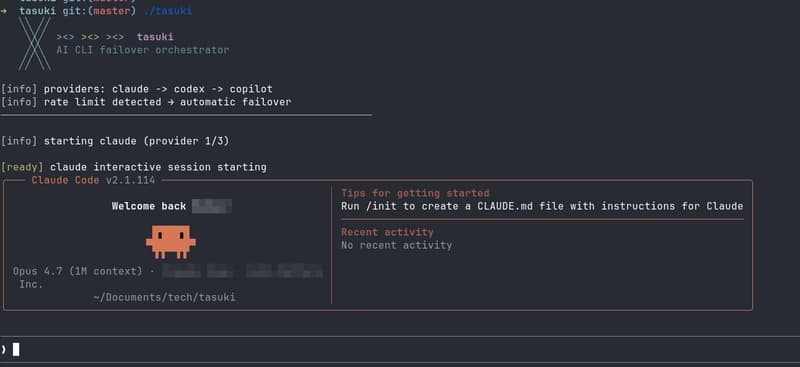

Built tasuki — an AI CLI Orchestrator that Seamlessly Hands Off Between Tools

Dev.to

I built a GNOME extension for Codex with local/remote history, live filters, Markdown export, and a read-only MCP server

Reddit r/artificial

I Built an Open‑ Source OS for AI Agents – And It’s Ready for You

Dev.to

Kiwi-chan's Log: The Great Log Acquisition Struggle

Dev.to