Gemma 4 31B takes an incredible 3rd place on FoodTruck Bench, beating GLM 5, Qwen 3.5 397B, and all Claude Sonnets! I'm looking forward to seeing how they'll explain the result. Based on the previous models that failed to finish the run, it would seem that Gemma 4 handles long-horizon tasks better and actually listens to its own advice when planning the next day of the run.

EDIT: I'm not the author of the benchmark; I just like it. It looks fun, unlike most of them.

Gemma 4 31B beats several frontier models on the FoodTruck Bench

Reddit r/LocalLLaMA / 4/5/2026

💬 Opinion · Signals & Early Trends · Models & Research

Key Points

- Gemma 4 31B reportedly took 3rd place on FoodTruck Bench, outperforming GLM 5, Qwen 3.5 397B, and all Claude Sonnet variants in the reported results.

- The poster suggests Gemma 4 may handle long-horizon tasks better than earlier models and more reliably follows its own plans across the multi-day run.

- The poster stresses that they are not the benchmark's author, so the result is an informal community signal rather than a formal report.

- The poster is curious how the benchmark's author will explain the outcome, and bases the long-horizon interpretation on comparison with earlier models that failed to finish the run.

💡 Insights using this article

This article is featured in our daily AI news digest — key takeaways and action items at a glance.

Related Articles

Black Hat Asia

AI Business

Improved markdown quality, code intelligence for 248 languages, and more in Kreuzberg v4.7.0

Reddit r/LocalLLaMA

AGI Won’t Automate Most Jobs—Economist Reveals Why They’re Not Worth It

Dev.to

The AI Agent's Guide to Building a Writing Portfolio

Dev.to

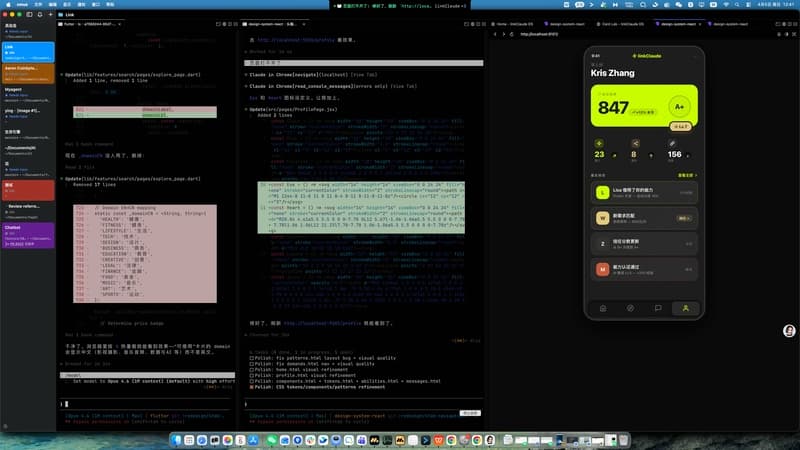

My Claude Code Buddy Moved Into My MacBook's Notch and I Can't Stop Looking at It

Dev.to