HAVEN: Hierarchical Adversary-aware Visibility-Enabled Navigation with Cover Utilization using Deep Transformer Q-Networks

arXiv cs.RO / 4/21/2026


Key Points

- The paper addresses autonomous navigation in partially observable environments by explicitly reasoning over occlusion and limited fields of view rather than relying only on immediate sensor readings.

- It proposes a hierarchical framework that uses a Deep Transformer Q-Network (DTQN) to select high-level subgoals from short task-aware histories and a modular low-level controller to execute the chosen waypoints.

- Candidate subgoal generation for the DTQN is made visibility-aware through masking and exposure penalties, which reward the use of cover and anticipatory safety.

- The low-level component uses a potential-field controller to track the selected subgoal while performing smooth short-horizon obstacle avoidance.

- Experiments in 2D simulation and in a 3D Unity-ROS setup, where point clouds are projected into the same feature schema, show improved success rate, safety margins, and time-to-goal compared with classical planners and RL baselines. Ablations support the value of temporal memory and visibility-aware design.
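
To make the two-level split concrete, here is a minimal Python sketch of the ideas summarized above, not the paper's implementation. It assumes circular obstacles, a single known adversary position, and hand-supplied Q-values standing in for the DTQN's output. All function names, gains, and the penalty value are hypothetical choices for illustration.

```python
import math

# Assumed world model (not from the paper): obstacles are circles (cx, cy, radius).

def inside_obstacle(p, obstacles):
    """True if point p lies inside or on any circular obstacle."""
    return any(math.hypot(p[0] - cx, p[1] - cy) <= r for cx, cy, r in obstacles)

def line_of_sight(a, b, obstacles):
    """True if the straight segment a-b misses every circular obstacle."""
    ax, ay = a
    bx, by = b
    dx, dy = bx - ax, by - ay
    seg_len2 = dx * dx + dy * dy
    for cx, cy, r in obstacles:
        # Parameter of the point on the segment closest to the circle center.
        t = 0.0 if seg_len2 == 0.0 else ((cx - ax) * dx + (cy - ay) * dy) / seg_len2
        t = max(0.0, min(1.0, t))
        if math.hypot(ax + t * dx - cx, ay + t * dy - cy) <= r:
            return False
    return True

def select_subgoal(candidates, q_values, adversary, obstacles, exposure_penalty=1.0):
    """High level: mask infeasible candidates, penalize exposed ones, take the argmax.

    q_values stands in for the scores a learned Q-network would produce.
    Returns the index of the chosen candidate, or None if all are masked.
    """
    best_idx, best_score = None, -math.inf
    for i, (cand, q) in enumerate(zip(candidates, q_values)):
        if inside_obstacle(cand, obstacles):
            continue  # masked: never selectable, whatever its Q-value
        score = q
        if line_of_sight(adversary, cand, obstacles):
            score -= exposure_penalty  # candidate is visible to the adversary
        if score > best_score:
            best_idx, best_score = i, score
    return best_idx

def potential_field_step(pos, goal, obstacles, k_att=1.0, k_rep=0.5,
                         influence=1.0, step=0.1):
    """Low level: one fixed-length step along attractive plus repulsive forces."""
    fx = k_att * (goal[0] - pos[0])
    fy = k_att * (goal[1] - pos[1])
    for cx, cy, r in obstacles:
        d_center = math.hypot(pos[0] - cx, pos[1] - cy)
        d = d_center - r  # distance to the obstacle surface
        if 0.0 < d < influence:
            # Classic repulsive term, active only within the influence radius.
            mag = k_rep * (1.0 / d - 1.0 / influence) / (d * d)
            fx += mag * (pos[0] - cx) / d_center
            fy += mag * (pos[1] - cy) / d_center
    norm = math.hypot(fx, fy)
    if norm == 0.0:
        return pos
    return (pos[0] + step * fx / norm, pos[1] + step * fy / norm)

obstacles = [(0.0, 0.0, 1.0)]
adversary = (-3.0, 0.0)
candidates = [(3.0, 0.0), (0.0, 3.0), (0.5, 0.0)]  # covered, exposed, infeasible
q_values = [0.5, 1.0, 5.0]
chosen = select_subgoal(candidates, q_values, adversary, obstacles)
next_pos = potential_field_step((0.0, 0.0), (1.0, 0.0), [])
```

In this toy scene the third candidate has the highest raw score but is masked because it lies inside the obstacle, and the exposed candidate loses its advantage once the penalty is applied, so the covered candidate behind the obstacle is chosen. Swapping the hand-supplied scores for a learned network's output would leave the masking and penalty logic unchanged.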

Related Articles

Add cryptographic authorization to AI agents in 5 minutes

Dev.to

Building a website with Replit and Vercel

Dev.to

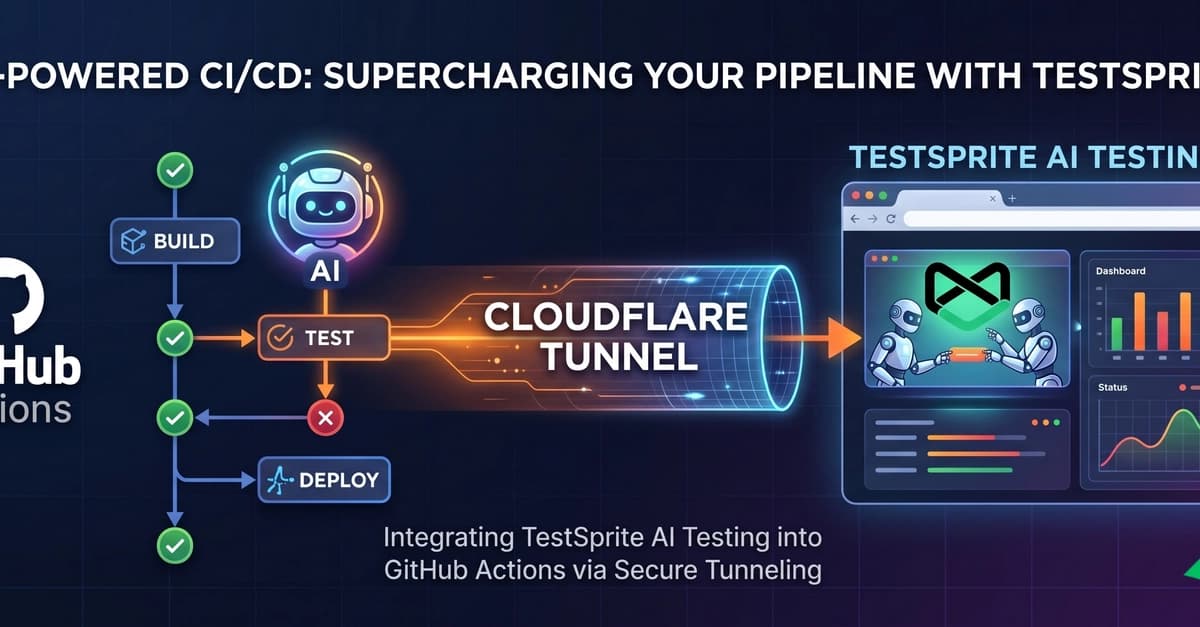

Supercharging Your CI/CD: Integrating TestSprite AI Testing with GitHub Actions

Dev.to

The ULTIMATE Guide to AI Voice Cloning: RVC WebUI (Zero to Hero)

Dev.to

Kiwi-chan Devlog #007: The Audit Never Sleeps (and Neither Does My GPU)

Dev.to