How to Buy Cheap Claude Tokens in China

The Transfer Station Economy, Explained

Zilan Qian is a research associate at the Oxford China Policy Lab and holds a Master’s degree in Social Science of the Internet from the University of Oxford.

On April 23, 2026, the White House released a memo warning that Chinese entities were running “industrial-scale” distillation campaigns against American frontier AI models, leveraging “tens of thousands of proxy accounts” to evade detection. In February 2026, Anthropic similarly reported on Chinese labs’ coordinated distillation attacks, in which “a single proxy network managed more than 20,000 fraudulent accounts.” Both documents treat the “proxy” (the middleman between model users and model providers) as a purposeful design by a select few Chinese frontier labs to systematically extract outputs from US AI models.

Regardless of whether Chinese labs rely on distillation to “catch up”, both documents misread the proxy economy they’re describing. Underneath the handful of labs sits a much larger market, one that has been operating in public on GitHub, Taobao, Twitter, and Telegram. It is a grey economy of API proxies (commonly called “transfer stations,” 中转站) that lets Chinese developers access Anthropic’s models for as little as 10% of the official price. The participants extend far beyond a select group of experienced AI researchers, and the motivations are much broader than building a frontier model to catch up. Everyone who wants to use more advanced AI models or tools, be they university professors and students, tech workers, individual developers, or hobbyists, uses API proxies.[1] The logs they generate may have become a commodity, traded for purposes ranging from model training to targeted fraud.

Meanwhile, every layer of control frontier US AI companies have added (geoblocking, phone verification, credit card requirements, and now live biometric KYC checks) has produced a corresponding layer of evasion infrastructure. These new SMS farms and biometric harvesting operations have implications that extend beyond geopolitics into how frontier AI safety frameworks are designed.

Building on my 2025 ChinaTalk piece on accessing banned American models in China, this update zooms in on the transfer station economy specifically: how it is structured, how it monetizes, and what it reveals about the limits of access blocking and account monitoring as AI governance tools. Unlike 2025’s grey market, however, the 2026 story does not stop at the border between Chinese users and American AI model providers. The transfer station economy exposes blind spots in AI safety frameworks designed to prevent harms that extend beyond the US-China rivalry, from misuse by malicious actors to the erosion of provider traceability, while feeding into criminal markets that exploit ordinary people — many already disadvantaged — caught in the supply chain.

To illustrate how a transfer station works, let’s take Anthropic as an example: it is the company with the most rigorous geo-blocking mechanism, and its models are very popular among Chinese developers.

Geo-blocking and Know-Your-Customer (KYC)

On the map of Anthropic’s supported countries, China is conspicuously absent, and on the Chinese internet, so is Anthropic – technically speaking. In reality, neither Anthropic’s blockage nor the Great Firewall stops Chinese users from accessing Claude and Claude Code. Claude models have thrived on e-commerce apps like Taobao despite supposed platform and government censorship since 2025, and Singapore, with a population smaller than that of New York City, “surprisingly” leads global per capita use of Anthropic’s Claude in April 2026.

The Chinese government is not, at present, especially motivated to curb Chinese developers’ access to advanced US models. Anthropic, on the other hand, is serious about it, with multiple layers of mechanisms to block users in mainland China. At the most basic level, account registration requires phone numbers, overseas credit cards, and matching billing addresses. On September 5, 2025, Anthropic further prohibited access from any entity more than 50% owned, directly or indirectly, by companies headquartered in unsupported regions like China, regardless of where that entity operates. This closes the subsidiary loophole that had allowed Chinese-backed firms in foreign countries to retain API access.

The most recent measure arrived in April 2026. Anthropic began requiring select users to verify their identity using a government-issued photo ID and a live selfie, making Claude the first major consumer AI platform to implement this level of identity checking. The rollout is selective and triggered by specific use cases or platform integrity flags. For Chinese users accessing Claude through a VPN or other intermediaries, the new KYC policy is supposed to make access considerably harder: even if Chinese users can fake phone numbers and addresses, they will theoretically have a hard time faking live selfies matched against a physical government document.

In reality, however, Chinese people not only can access Claude and related tools, but most of the time they can purchase tokens at 10% of the original price. The magic lies in “transfer stations.”

What is a “Transfer Station (中转站)”?

A transfer station (中转站) is what the Chinese developer ecosystem calls an API proxy: an overseas server that sits between a developer and Anthropic’s infrastructure. It accepts API requests, forwards them as if they originated from the transfer station’s location, and passes the response back.[2] The user points their software at the proxy’s server instead of Anthropic’s, and pays the proxy operator in RMB via WeChat or Alipay.[3] This sidesteps both the VPN and the overseas credit card needed for direct access. Prominent transfer stations are catalogued in community repositories and ranked by real-time price and uptime. Below them, a longer tail of small and individual projects comes and goes.

While this setup sounds functionally identical to legitimate Western API aggregators like OpenRouter, transfer stations operate in an entirely different universe of legality and trust. Legitimate aggregators exist to simplify developer workflows, charging standard rates based on transparent enterprise agreements. Transfer stations, conversely, are built explicitly for evasion, routing data through unaccountable middlemen.

Just like providing VPN services or selling Claude on Taobao, running a transfer station is technically not allowed in China. According to China’s regulations on the AI services registry, AI services provided without filing and security assessment are illegal. But just as some small businesses can skip AI registration without punishment, so can most transfer stations. However, the bigger the business, the riskier it is to run.

The Supply Chain of Transfer Stations

A transfer station is not a single entity. It sits in the middle of a layered supply chain, with most participants never interacting with each other directly.

Upstream are the resource providers: account merchants who bulk-register or acquire Anthropic accounts at scale; SMS verification platforms that supply the foreign phone numbers needed to pass sign-up checks; and, at the more technical end, reverse engineers who analyze Anthropic’s client code to find authentication shortcuts or to detect when detection logic has changed. Payment infrastructure, in the form of card merchants and proxy networks, also enables overseas billing from inside China.

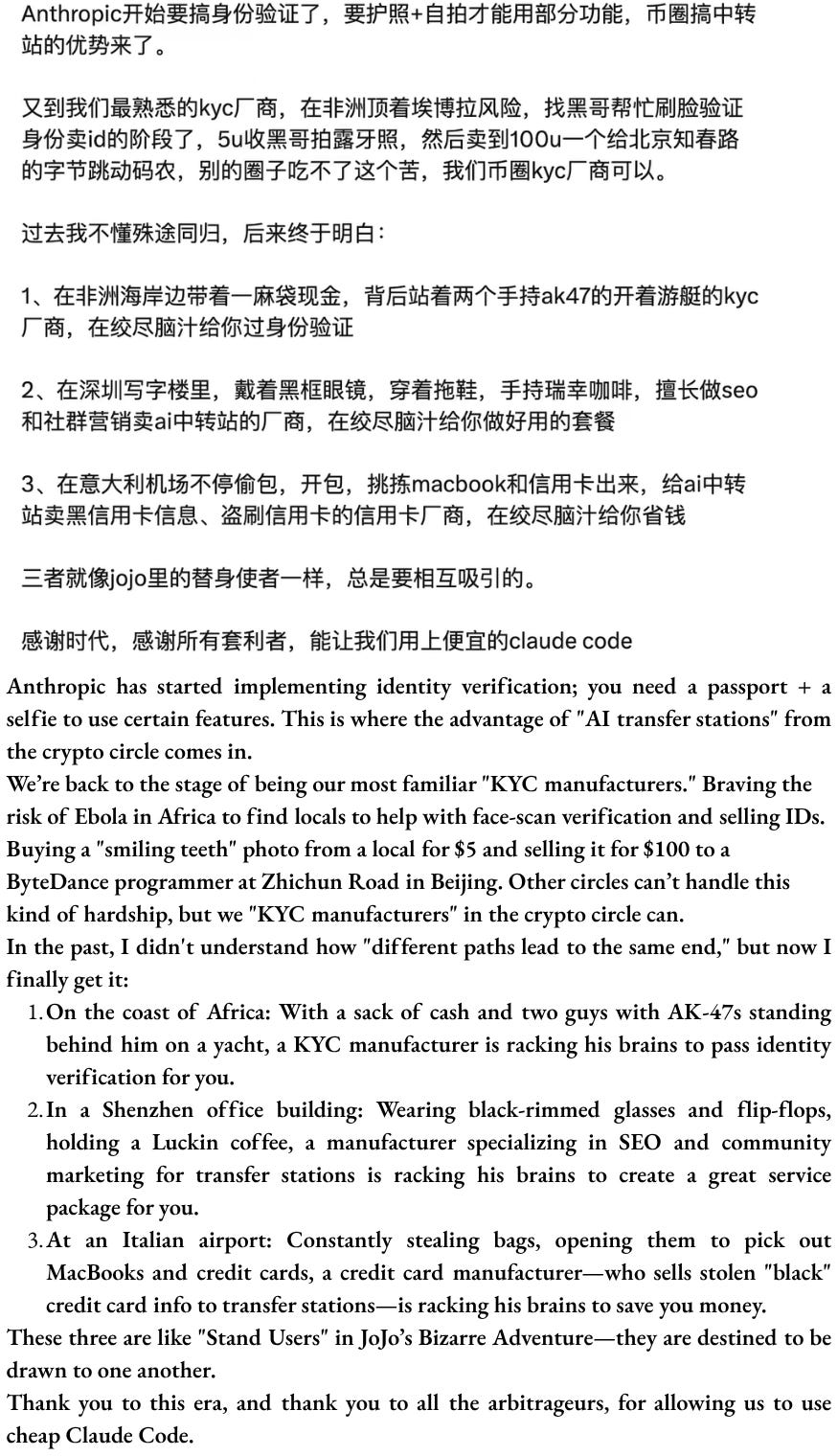

The upstream also tackles more sophisticated KYC regimes, using either AI or humans. AI services have demonstrated the ability to generate highly realistic fake IDs capable of bypassing identity verification on major platforms, and deepfake tools now allow criminals to create digital clones that successfully pass biometric verification remotely. Even if defenders learn to detect AI-generated fakes, a more labour-intensive method remains: finding real humans. Agents travel to lower-income countries in Africa or Latin America to recruit real individuals willing to complete in-person verification.[4] The Worldcoin black market offers a documented precedent, with iris scans harvested from KYC merchants in Cambodia and Kenya and sold for under $30.

In the middle sits the transfer station itself: a software interface that receives users’ requests and forwards them to Anthropic as if they originated from a legitimate account, a payment integration (usually Alipay or WeChat), and the unglamorous operational layer that keeps it running — cycling accounts before they get flagged, balancing load across the pool, and continuously adapting to Anthropic’s abuse-detection updates.

Downstream are the customers: individual developers using Codex or Claude Code, enterprises routing internal workflows through the proxy, application builders embedding the API in their own products, and secondary resellers who buy wholesale access and repackage it for individual customers on Taobao, as I documented last year.

Almost no one operates the full chain. Most participants own one or two links and monetise those well, resulting in a resilient, modular system. AI model providers can suspend individual operators, but the upstream account pools and downstream customer base remain intact. So long as there are developers who want access to Claude and identity black markets willing to supply the credentials, both of which are durable features, a replacement can be stood up quickly.

One Fish, Three Meals (一鱼三吃): How to Make Tokens Cheap

The most curious thing, however, is not how to get access to Claude or Claude Code in China, but how to get it at a ridiculously low price: usually 1 RMB per $1 of tokens, or 70–90% below official prices. According to public discussions, there are at least three ways a transfer station makes this possible, often described as “one fish, three meals (一鱼三吃)”.
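As a rough sanity check on the “70–90% below official prices” figure, the quoted rate of 1 RMB per $1 of tokens can be converted into a share of the official price. The sketch below assumes an exchange rate of about 7 RMB per US dollar; that rate, along with the function and variable names, is an illustrative assumption rather than a figure from the sources discussed here.

```python
def fraction_of_official_price(rmb_charged_per_usd_of_tokens: float,
                               rmb_per_usd_exchange_rate: float) -> float:
    """Share of the official USD price that a proxy customer actually pays."""
    return rmb_charged_per_usd_of_tokens / rmb_per_usd_exchange_rate


# Assumption for illustration only: roughly 7 RMB to 1 USD.
ASSUMED_EXCHANGE_RATE = 7.0

paid_share = fraction_of_official_price(1.0, ASSUMED_EXCHANGE_RATE)
discount = 1.0 - paid_share

print(f"{paid_share:.1%} of official price, {discount:.1%} discount")
# prints "14.3% of official price, 85.7% discount"
```

Under that assumed rate, the 1 RMB price point lands inside the 70–90% discount range; a different exchange rate shifts the result only slightly.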

Meal 1: The markup on access. This is possible because of the upstream resource providers who can stack proxies using at least four relatively “innocent” tactics:

bulk-registering API accounts to farm Anthropic’s $5 free credit

reselling unused quota from others’ accounts

corporate/educational discount arbitrage

“APImaxxing” — one $200 Max plan carved up among multiple users via tokens-per-hour quotas, exploiting the gap between Anthropic’s flat subscription price and the far higher cost of equivalent pay-per-token API access

Beyond these, there is a darker upstream input: accounts purchased using stolen or fraudulent credit cards, which can enter the proxy pool at effectively zero cost to the operator. How large this share is relative to the four “innocent” tactics above is difficult to verify, but the two markets likely share some infrastructure and personnel.

Meal 2: Swapping models and inflating tokens. Because users’ inputs and model outputs are mediated through a proxy, users cannot verify which model their request was actually routed to. A user selects Opus 4.7, but the proxy can silently route to Sonnet, Haiku, or, in the worst case, GLM or Qwen, and fraudulently relabel the output. In a recent paper from Germany’s CISPA Helmholtz Center for Information Security (which cited my article last year on the grey market!), researchers audited 17 API proxies and found widespread model swapping: API proxy access to “Gemini-2.5” achieved only 37.00% on a medical benchmark, a staggering drop from the 83.82% performance of the official API. On the user end, the tell only comes on complex tasks, when the output feels off (often referred to as 降智, or “dumbed-down”), but there is no clean way to prove it. Numerous public posts raise concerns that certain API proxies noticeably compromise model performance. These proxies are suspected of “diluting” (掺水) their services by substituting inferior tiers for premium frontier models.
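To give a sense of why a gap like 83.82% versus 37.00% is hard to explain as noise, here is a minimal sketch of a two-proportion z-test comparing benchmark accuracy on an official endpoint and on a proxy. This is not the method used in the CISPA paper; the number of benchmark questions (200 per endpoint), the threshold, and the function names are hypothetical choices made only for illustration.

```python
import math


def two_proportion_z(acc_official: float, acc_proxy: float,
                     n_official: int, n_proxy: int) -> float:
    """z-statistic for the accuracy gap, using a pooled standard error."""
    pooled = (acc_official * n_official + acc_proxy * n_proxy) / (n_official + n_proxy)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_official + 1 / n_proxy))
    if std_err == 0:
        return 0.0  # identical, degenerate accuracies: no measurable gap
    return (acc_official - acc_proxy) / std_err


def looks_swapped(acc_official: float, acc_proxy: float,
                  n_official: int, n_proxy: int,
                  z_threshold: float = 3.0) -> bool:
    """Flag a proxy whose accuracy is far below the official endpoint's."""
    return two_proportion_z(acc_official, acc_proxy, n_official, n_proxy) > z_threshold


# Hypothetical sample size; the accuracies are the figures quoted above.
N_QUESTIONS = 200
z_score = two_proportion_z(0.8382, 0.3700, N_QUESTIONS, N_QUESTIONS)
flagged = looks_swapped(0.8382, 0.3700, N_QUESTIONS, N_QUESTIONS)
print(f"z = {z_score:.1f}, flagged = {flagged}")
```

A check like this only works for someone who can run the same benchmark against both endpoints, which is exactly what an ordinary proxy customer without official access cannot do.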

Besides model swapping, overconsumption of tokens also makes the price per token cheaper, though at the expense of driving up the total cost. Some of it is structural, as proxies that rotate accounts frequently destroy cache continuity as a side effect, forcing users to burn full-price tokens on context that would otherwise be nearly free. Some of it may be deliberate as the proxy providers try to milk more usage. The line between the two is difficult to draw from the outside.
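The cache effect can be illustrated with simple arithmetic. The sketch below compares the input cost of a multi-turn coding session when a shared context prefix stays cached versus when every turn misses the cache (for example, because the proxy rotated accounts). The multipliers (cache reads at 0.1x and cache writes at 1.25x the base input price) and the session sizes are assumptions for illustration; costs are expressed in "base-price token equivalents" rather than dollars.

```python
def session_input_cost(turns: int, prefix_tokens: int, new_tokens_per_turn: int,
                       cache_intact: bool,
                       read_multiplier: float = 0.1,
                       write_multiplier: float = 1.25) -> float:
    """Input cost of a session, in units of base-price input tokens."""
    cost = 0.0
    for turn in range(turns):
        if not cache_intact:
            cost += prefix_tokens                     # full price every turn
        elif turn == 0:
            cost += prefix_tokens * write_multiplier  # first turn writes the cache
        else:
            cost += prefix_tokens * read_multiplier   # later turns read it cheaply
        cost += new_tokens_per_turn                   # fresh tokens are never cached
    return cost


# Hypothetical session: 20 turns, 50,000-token shared prefix, 1,000 new tokens per turn.
cached = session_input_cost(20, 50_000, 1_000, cache_intact=True)
uncached = session_input_cost(20, 50_000, 1_000, cache_intact=False)
print(f"cached: {cached:,.0f}  uncached: {uncached:,.0f}  ratio: {uncached / cached:.1f}x")
```

Under these assumptions the uncached session consumes several times more billable input, so a lower per-token price does not necessarily mean a lower bill.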

Meal 3: The logs are the product. This is perhaps the most important part as it intersects with data privacy and distillation. Every request that passes through a proxy — full prompt, full response, tool calls, iterations — is sitting on the proxy operator’s server. For AI coding agents, those logs contain long reasoning chains, real engineering decisions, repository context, and human-verified correct outputs. This makes them an ideal dataset for post-training: for supervised fine-tuning on real engineering tasks, and, where full reasoning traces are captured, for distilling Claude’s reasoning patterns into smaller models. Chinese developer communities assert this is happening in at least some cases, but whether proxy operators are systematically harvesting and selling these logs, and to whom, remains unverified. However, downstream distillation data does exist on the open web. Several datasets of Claude Opus 4.6 reasoning outputs circulate on HuggingFace with no clear source for the outputs. Theoretically, one can clean and sell similar distilled datasets to other model developers in China.

The first two meals are useful for providing tokens cheaper than Anthropic officially charges, but to make prices ridiculously low (10%, or even 5%, of the original price) one needs to eat the third meal. And as a Chinese saying goes, there is no free lunch in the world (天下没有免费的午餐). Several Chinese developers have revealed that the markup business is just customer acquisition, and the log harvest is the actual margin. Users are simultaneously paying customers and unpaid data producers, selling their private data to proxy operators in exchange for a low price. Some also warn of potential unsolicited promotion, fraud, and even blackmail based on users’ data leaked from the proxy. To avoid privacy risks, some Chinese developers have also built their own Claude Code API proxies and open-sourced the guidelines.

What Know-Your-Customer Cannot Know

AI usage is gradually shifting from chatbots to tool use. With the rise of the agent and token economy, the question of using US models is no longer only about access, but extends to cost-efficiency. This is because the Chinese AI ecosystem, whether frontier labs, university research groups, individual developers, or hobbyists, is capital-scarce. Meanwhile, the data generated by users through transfer stations demonstrably enters downstream markets, used variously for model training, data brokerage, or fraud. To the extent that distillation is part of that economy, the problem extends far beyond the handful of frontier actors that the government or AI companies in the US might expect.

History teaches us that access blocks rarely stop determined users. They raise the cost of access, which in turn creates profitable markets for anyone with the expertise to lower it. The Great Firewall made VPN services a thriving cottage industry in China. KYC requirements bred an identification-faking economy, from domestic ID card resellers to biometric harvesting operations in Southeast Asia or Africa. Layered controls by frontier AI companies (geoblocking, phone verification, credit card requirements, and now live biometric checks) have produced the same effect.

The story, however, goes beyond an “Anthropic/US versus China” framing. It points to an uncomfortable truth about access control, both across geopolitical boundaries and beyond them. The methods a geo-blocked developer uses to get around the controls are, structurally, the same methods a terrorist could plausibly employ to access a frontier AI model and make destructive bioweapons without being tracked. The access problem is both a unique geopolitical consideration and a shared safety concern.

Today, AI safety research treats system-level access control (in particular, detection, monitoring, and account suspension for publicly accessible closed-weight models) as an important safeguard. Monitoring assumes that developers control the inference infrastructure, including the ability to flag harmful inputs and outputs in real time. Detection, for example through KYC requirements, assumes that the provider can attribute behaviour to identifiable actors, and account suspension similarly assumes that suspending an account meaningfully denies access. However, US model providers do not control inference for Chinese users routing through a transfer station; the proxy operator does. When a harmful request arrives, AI model providers see the IP of the proxy rather than that of the real user. And when an account is banned, the upstream supply chain can easily set up a new proxy within hours.

The problem compounds for more sophisticated monitoring tools. Anthropic’s Clio system, designed partly to detect coordinated misuse that is invisible at the individual conversation level, works by identifying patterns across accounts and conversations. It identified, for example, a network of automated accounts using similar prompt structures to generate search engine spam and subsequently banned them. But because requests route through proxies, bans do not meaningfully stop the underlying behaviour. And for deliberately staged attacks — such as distributing a harmful inquiry across multiple stages and proxy accounts, each request individually innocuous — cross-account patterns are far less visible than coordinated spam, where the signal is obvious by design.

Lastly, the transfer station does not only embody a traditional offence/defence paradigm — whether between US AI companies and Chinese users or between AI safeguards and malicious actors. A black market has a supply chain with its own exploitative logic, and the harms it generates extend well beyond the original question of access. Faces harvested for proxy KYC verification to bypass Anthropic’s system today can be resold to open fraudulent financial accounts, fabricate employment records, or generate deepfakes tomorrow, with the original subject in the Global South bearing the legal and reputational consequences. The same infrastructure that routes Claude requests can be used to defraud users through model substitution, targeted scams based on leaked prompt data, or blackmail. The account-farming operations that keep proxy pools stocked — bulk SMS verification, fraudulent registrations, carded accounts — nurture broader criminal markets for spam calls, phishing texts, fraudulent loan applications, and credit card scams. Many harms have nothing to do with AI or geopolitics.

The byproducts of the grey market range from the potential danger of terrorists leveraging AI to synthesize the next pandemic to real-life exploitation and crime. As much as the Great Firewall or AI geo-blocking aims to separate who gets access to frontier technology along national lines, the grey market reveals that the harms are not separable.

Acknowledgement:

Zilan is grateful to Alan Chan, Gabriel Wagner, Karuna Nandkumar, and Kayla Blomquist for their helpful feedback.

The author acknowledges the use of LLMs for preliminary desk research, clarification of technical concepts, and copy-editing, and is, in fact, very grateful that she can still use a VPN to access Claude in mainland China via the Singapore node without triggering the KYC process.

ChinaTalk is a reader-supported publication.

[1] Profiles derived from informal conversations.

[2] An Application Programming Interface, or API, is the channel that lets developers plug their software directly into an AI model, sending requests programmatically to Anthropic's servers and receiving responses back, rather than interacting through a browser.

[3] Specifically, replacing the ANTHROPIC_BASE_URL environment variable with the proxy's address.

[4] From informal conversations and desk research.