Abstain-R1: Calibrated Abstention and Post-Refusal Clarification via Verifiable RL

arXiv cs.CL / 4/21/2026

Key Points

- The paper argues that reinforcement fine-tuning can push large language models to guess when a query is unresolvable, and that a reliable model should instead abstain and explain what information is missing rather than hallucinate.

- It introduces a clarification-aware reward for reinforcement learning with verifiable rewards (RLVR) that jointly optimizes explicit abstention on unanswerable questions and semantically aligned clarification after the refusal.

- Using this reward, the authors train “Abstain-R1,” a 3B-parameter model that improves behavior on unanswerable queries while maintaining strong accuracy on answerable ones.

- On Abstain-Test, Abstain-QA, and SelfAware, the model shows substantial gains over its base model and handles unanswerable queries at a level competitive with larger systems such as DeepSeek-R1.

- The results suggest calibrated abstention and post-refusal clarification can be learned via verifiable reinforcement rewards rather than relying solely on model scale.
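To make the idea of a clarification-aware reward concrete, here is a minimal sketch of how such a signal could be shaped. It is not the paper's reward: the function names, the phrase-matching abstention check, the token-overlap score standing in for semantic alignment, and the `clarify_weight` parameter are all assumptions chosen for illustration.

```python
import re

# Hypothetical phrases treated as an explicit abstention (an assumption,
# not the paper's detection rule).
ABSTAIN_MARKERS = (
    "i don't know",
    "cannot be answered",
    "cannot be determined",
    "not enough information",
)


def _tokens(text):
    """Lowercase alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def is_abstention(response):
    """True if the response contains one of the abstention phrases."""
    lowered = response.lower()
    return any(marker in lowered for marker in ABSTAIN_MARKERS)


def overlap_f1(candidate, reference):
    """F1 over unique tokens: a crude stand-in for semantic alignment."""
    cand, ref = set(_tokens(candidate)), set(_tokens(reference))
    common = len(cand & ref)
    if common == 0:
        return 0.0
    precision = common / len(cand)
    recall = common / len(ref)
    return 2 * precision * recall / (precision + recall)


def reward(response, answerable, gold_answer=None,
           gold_clarification=None, clarify_weight=0.5):
    """Illustrative reward in [0, 1].

    Answerable query: 1.0 only for a non-abstaining response that
    contains the gold answer.
    Unanswerable query: a base reward for abstaining, plus a bonus
    scaled by how well the response matches a reference clarification.
    """
    abstained = is_abstention(response)
    if answerable:
        if abstained or gold_answer is None:
            return 0.0
        return 1.0 if gold_answer.lower() in response.lower() else 0.0
    if not abstained:
        return 0.0  # guessing on an unanswerable query earns nothing
    base = 1.0 - clarify_weight
    if gold_clarification is None:
        return base
    return base + clarify_weight * overlap_f1(response, gold_clarification)
```

In this sketch an unanswerable query earns partial credit for a bare refusal and full credit only when the refusal also names the missing information, while abstaining on an answerable query earns nothing, which discourages blanket refusal. A real system would presumably replace the token-overlap score with a stronger semantic check.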

Related Articles

Agent Package Manager (APM): A DevOps Guide to Reproducible AI Agents

Dev.to

3 Things I Learned Benchmarking Claude, GPT-4o, and Gemini on Real Dev Work

Dev.to

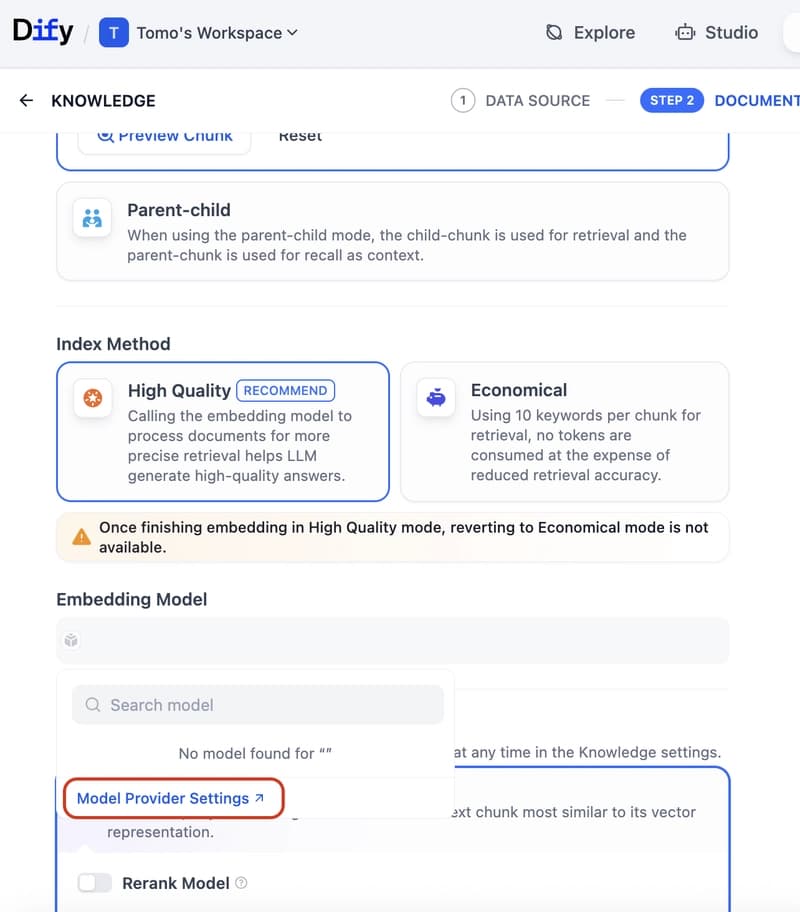

Dify Now Supports IRIS as a Vector Store — Setup Guide

Dev.to

How to build a Claude chatbot with streaming responses in under 50 lines of Node.js

Dev.to

Open Source Contributors Needed for Skillware & Rooms (AI/ML/Python)

Dev.to