I recently contributed an experimental HFQ4-G256 MMQ prefill path to hipfire, an RDNA-focused LLM inference engine.

Disclaimer: I authored the PR, so this is partly a contribution note, but I am mainly looking for independent validation from other AMD users.

Before this PR, HFQ4 prefill in hipfire went through a more generic, slower path. On my Strix Halo system, prompt processing was clearly the bottleneck: longer prefills ran at roughly 310–340 tok/s.

The new path adds an opt-in MMQ-style prefill implementation. In this context, MMQ means a specialized quantized matrix-multiplication path: instead of treating prefill like a less optimized sequence of operations, it packs the work into tiled matrix-matrix kernels that are better suited for GPU execution. The implementation pre-quantizes prefill activations into a Q8_1 MMQ layout and uses i8 WMMA over 128×128 output/batch tiles with LDS staging.
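To make the activation pre-quantization step more concrete, here is a minimal sketch of Q8_1-style block quantization. It assumes the llama.cpp convention (32 values per block, one scale `d`, one cached sum `s`); hipfire's actual Q8_1 MMQ layout may pack things differently, and the function names here are hypothetical.

```python
# Hypothetical sketch of Q8_1-style block quantization (not hipfire's code).
# Assumes llama.cpp's convention: 32 values per block, a scale d and a sum s.
BLOCK = 32

def quantize_q8_1(values):
    """Quantize floats into a list of (d, s, qs) blocks of BLOCK int8 values."""
    assert len(values) % BLOCK == 0
    blocks = []
    for i in range(0, len(values), BLOCK):
        chunk = values[i:i + BLOCK]
        amax = max(abs(v) for v in chunk)
        d = amax / 127.0                       # per-block scale
        qs = [round(v / d) if d else 0 for v in chunk]
        s = d * sum(qs)                        # cached block sum
        blocks.append((d, s, qs))
    return blocks

def dequantize(blocks):
    """Reconstruct approximate floats from the quantized blocks."""
    return [d * q for d, _, qs in blocks for q in qs]
```

Each reconstructed value differs from the original by at most half a quantization step (`d / 2`) for its block, which is why the integer matmul can stand in for the floating-point one within a small tolerance.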

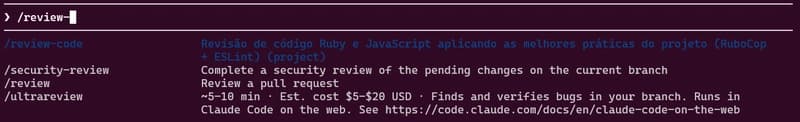

After enabling it with `HIPFIRE_MMQ=1`, I see longer-prefill throughput of roughly 1140–1260 tok/s on Strix Halo / gfx1151.

What changed:

- Adds an opt-in `HIPFIRE_MMQ=1` path for HFQ4-G256 prefill.

- Targets RDNA3 / RDNA3.5 for now: `gfx1100`, `gfx1101`, `gfx1102`, `gfx1103`, `gfx1150`, `gfx1151`.

- Pre-quantizes prefill activations into a Q8_1 MMQ layout.

- Uses i8 WMMA over 128×128 output/batch tiles with LDS staging.

- Similar in shape to llama.cpp’s AMD MMQ prompt-processing path.

- Not enabled by default.
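The opt-in and target-architecture rules above can be sketched as a simple gate. This is only an illustration: the helper name `mmq_enabled` is hypothetical, and the assumption that the variable must equal exactly `1` is mine, not something confirmed by the hipfire source.

```python
import os

# Architectures the post lists as current targets.
MMQ_ARCHS = {"gfx1100", "gfx1101", "gfx1102", "gfx1103", "gfx1150", "gfx1151"}

def mmq_enabled(arch, env=None):
    """Return True only when the user opted in and the GPU arch is targeted.

    Hypothetical helper: how hipfire actually parses HIPFIRE_MMQ is assumed.
    """
    env = os.environ if env is None else env
    return env.get("HIPFIRE_MMQ") == "1" and arch in MMQ_ARCHS
```

With this shape, leaving the variable unset keeps the existing prefill path, which matches the "not enabled by default" behavior described above.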

Benchmark: Qwen3.5 9B HFQ4/MQ4 on Strix Halo / gfx1151

| KV mode | Prompt length (pp) | MMQ off, tok/s | MMQ on, tok/s | Speedup |
|---|---|---|---|---|
| q8 | 256 | 363.1 | 1127.6 | 3.11x |
| q8 | 512 | 352.0 | 1179.8 | 3.35x |
| q8 | 1024 | 328.9 | 1222.7 | 3.72x |
| q8 | 2048 | 318.2 | 1168.5 | 3.67x |
| asym4 | 256 | 368.6 | 1108.8 | 3.01x |
| asym4 | 512 | 360.7 | 1173.3 | 3.25x |
| asym4 | 1024 | 333.9 | 1223.0 | 3.66x |
| asym4 | 2048 | 312.3 | 1151.7 | 3.69x |
| asym3 | 256 | 361.4 | 1124.5 | 3.11x |
| asym3 | 512 | 359.8 | 1187.3 | 3.30x |
| asym3 | 1024 | 329.9 | 1259.1 | 3.82x |
| asym3 | 2048 | 314.1 | 1216.5 | 3.87x |
| asym2 | 256 | 374.0 | 1116.2 | 2.98x |
| asym2 | 512 | 356.6 | 1173.2 | 3.29x |
| asym2 | 1024 | 340.1 | 1208.5 | 3.55x |
| asym2 | 2048 | 311.4 | 1142.9 | 3.67x |
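For anyone posting their own numbers, the speedup column is simply MMQ-on throughput divided by MMQ-off throughput, rounded to two decimals. A small sketch, using two rows from the table as sample input (the function name is just illustrative):

```python
def speedup(off_tps, on_tps):
    """Ratio of MMQ-on to MMQ-off prefill throughput, rounded like the table."""
    return round(on_tps / off_tps, 2)

# Two rows copied from the table: (KV mode, pp, MMQ off tok/s, MMQ on tok/s).
rows = [("q8", 256, 363.1, 1127.6), ("asym3", 2048, 314.1, 1216.5)]
for kv, pp, off, on in rows:
    print(f"{kv} pp{pp}: {speedup(off, on):.2f}x")
```

Reporting both raw tok/s values alongside the ratio makes results from different GPUs easier to compare.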

So on longer prefills (pp 1024 and 2048), this moved my Strix Halo results from roughly 311–340 tok/s to roughly 1143–1259 tok/s.

Correctness validation so far:

- batched prefill compared against sequential token-by-token forward pass

- final prefill top token match

- selected-logit drift within tolerance

- next decode step after prefill also checked, to catch KV-cache write problems

- tested across `q8`, `asym4`, `asym3`, `asym2` KV modes
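The top-token and logit-drift checks above could look roughly like the following. This is a sketch, not the actual test harness: the function names are hypothetical, and the tolerance value is a placeholder since the post does not state the one used.

```python
def argmax(xs):
    """Index of the largest element."""
    return max(range(len(xs)), key=xs.__getitem__)

def check_prefill(batched_logits, sequential_logits, selected, tol=1e-2):
    """Compare final-position logits from batched vs. sequential prefill.

    Returns (ok, drift): ok is True when the top token matches and the
    largest absolute difference over the selected logit indices is <= tol.
    The default tol is a placeholder, not the value used in the PR.
    """
    top_match = argmax(batched_logits) == argmax(sequential_logits)
    drift = max(abs(batched_logits[i] - sequential_logits[i]) for i in selected)
    return top_match and drift <= tol, drift
```

Running the same comparison on the first decode step after prefill is what catches KV-cache write problems, since a bad cache entry only shows up once a later token attends to it.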

Caveats:

- validated by me mainly on one Strix Halo / `gfx1151` system

- the path is experimental

- it is not enabled by default

- I would not call this the final/canonical MMQ implementation yet

- more coherence and long-context testing would be useful

The maintainer also tested the merged path on gfx1100 and reported that HIPFIRE_MMQ=1 runs cleanly there, with a smaller but still positive result: +19.8% on 4B pp256.

What I would especially like to check now is whether this implementation generalizes well across other AMD GPUs and APUs, or whether the current tuning is mostly favorable to Strix Halo / gfx1151.

The basic correctness checks pass, but I am not yet fully confident that the KV-cache behavior is completely bulletproof. Subtle KV-cache issues might only appear in longer real workloads, so I would especially appreciate validation on long-context and multi-turn runs.

I would be very interested in results from people with:

- 7900 XTX / `gfx1100`

- other RDNA3 cards

- Strix Halo / `gfx1151`

- RDNA3.5 APUs

- and more

- long-context agentic workloads where prefill matters more than short chat decode
