Learn&Drop: Fast Learning of CNNs based on Layer Dropping

arXiv cs.CV / 4/28/2026


Key Points

- The paper introduces a training-time method for deep CNNs that scores each layer by how much its parameters are changing to decide whether the layer should keep learning.

- Based on these scores, the network is dynamically scaled down so fewer parameters/operations are processed during training, accelerating forward propagation.

- Unlike prior work that mainly targets inference-time compression or limits backpropagation costs, this approach specifically reduces forward-propagation computation during training.

- Experiments with VGG and ResNet architectures on MNIST, CIFAR-10, and Imagenette show that training time can be reduced by more than half with little impact on accuracy, alongside sizable reductions in forward-pass FLOPs.

- The method is positioned as particularly beneficial for scenarios requiring fine-tuning or online training of convolutional models, such as when data arrive sequentially.
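
The scoring idea in the points above can be illustrated with a minimal sketch. The summary does not give the paper's actual score or dropping rule, so the relative L2 change used here, the fixed threshold, and the names `layer_change_scores` and `layers_to_drop` are all assumptions made for illustration, not the authors' method.

```python
import math

def layer_change_scores(prev, curr, eps=1e-12):
    """Relative L2 change of each layer's parameters between two snapshots.

    ASSUMPTION: the paper's real scoring function is not given in this
    summary; relative L2 change is a stand-in for "how much the layer's
    parameters are changing".
    """
    scores = {}
    for name, new in curr.items():
        old = prev[name]
        diff = math.sqrt(sum((n - o) ** 2 for n, o in zip(new, old)))
        norm = math.sqrt(sum(o * o for o in old))
        scores[name] = diff / (norm + eps)
    return scores

def layers_to_drop(scores, threshold):
    """Layers whose parameters have nearly stopped changing (hypothetical rule)."""
    return [name for name, score in scores.items() if score < threshold]

# Toy parameter snapshots taken at two consecutive checkpoints.
prev = {"conv1": [1.0, 2.0, 2.0], "conv2": [0.0, 3.0, 4.0]}
curr = {"conv1": [1.0, 2.0, 2.0003], "conv2": [0.0, 3.5, 4.0]}

scores = layer_change_scores(prev, curr)
dropped = layers_to_drop(scores, threshold=0.01)
print(scores)
print(dropped)
```

In this toy case `conv1` barely moves between snapshots and falls below the threshold, while `conv2` is still changing and keeps training. How a dropped layer is then removed from the forward pass is specific to the paper and is not modeled here.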

Related Articles

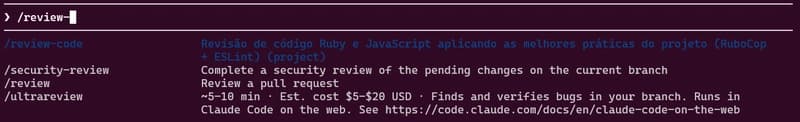

how to use skills from Claude Code A.K.A Claudinho.

Dev.to

Behind the Scenes of a Self-Evolving AI: The Architecture of Tian AI

Dev.to

Meet Tian AI: Your Completely Offline AI Assistant for Android

Dev.to

UK to develop AI hardware plan

Tech.eu

Copilot Cowork | The Control Plane for Long-Running AI Work | A Rahsi Framework™

Dev.to