Adaptive Test-Time Compute Allocation with Evolving In-Context Demonstrations

arXiv cs.AI / 4/25/2026

Key Points

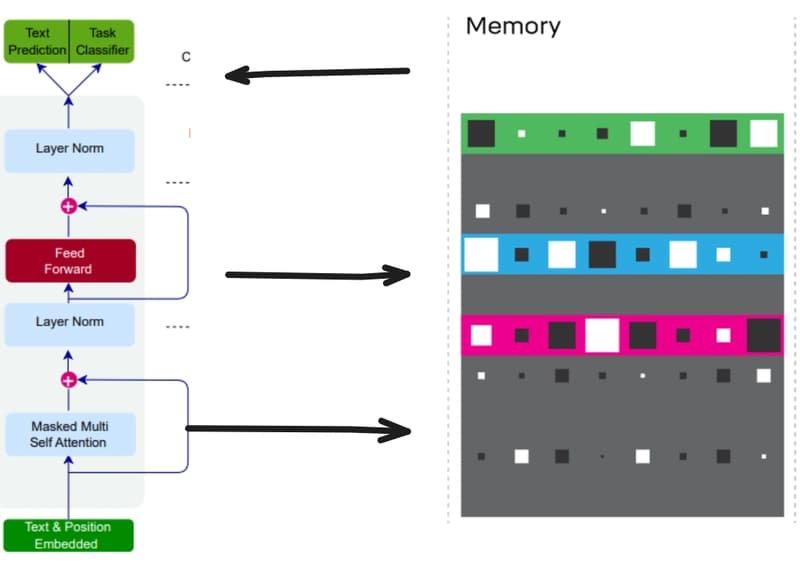

- The paper proposes a framework that adaptively allocates test-time compute across queries while also reshaping the distribution from which the model samples its outputs.

- It uses a warm-up step to find easy queries and build an initial set of question–response pairs drawn from the test set itself.

- In the adaptive phase, the framework concentrates additional compute on queries that remain unresolved and reshapes their generation distributions through evolving in-context demonstrations.

- The evolving demonstrations condition each generation on previously successful responses from semantically related queries, avoiding repeated sampling from a fixed distribution.

- Experiments on math, coding, and reasoning benchmarks show consistent improvements over baselines while using substantially less inference-time compute.
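The two phases described above can be sketched roughly as follows. This is a minimal illustration under stated assumptions, not the paper's implementation: `generate` and `verify` are hypothetical callables supplied by the caller (the summary does not say how a response is judged successful), token-overlap `jaccard` stands in for whatever semantic similarity the authors use, and the budget parameters (`warmup_samples`, `rounds`, `samples_per_round`, `k`) are invented for the example.

```python
from typing import Callable, Dict, List, Tuple

Demo = Tuple[str, str]  # (question, successful response)


def jaccard(a: str, b: str) -> float:
    """Token-overlap similarity; an assumed stand-in for a semantic embedding."""
    ta, tb = set(a.lower().split()), set(b.lower().split())
    union = ta | tb
    return len(ta & tb) / len(union) if union else 0.0


def nearest_demos(query: str, pool: List[Demo], k: int) -> List[Demo]:
    """Return the k solved questions most similar to the query."""
    return sorted(pool, key=lambda d: jaccard(query, d[0]), reverse=True)[:k]


def adaptive_solve(
    queries: List[str],
    generate: Callable[[str, List[Demo]], str],  # hypothetical model call
    verify: Callable[[str, str], bool],          # hypothetical success check
    warmup_samples: int = 1,
    rounds: int = 3,
    samples_per_round: int = 2,
    k: int = 2,
) -> Dict[str, str]:
    solved: Dict[str, str] = {}
    pool: List[Demo] = []

    # Warm-up: a small budget per query, no demonstrations.
    # Queries solved here are the "easy" ones and seed the demo pool.
    for q in queries:
        for _ in range(warmup_samples):
            response = generate(q, [])
            if verify(q, response):
                solved[q] = response
                pool.append((q, response))
                break

    # Adaptive phase: spend further compute only on unresolved queries,
    # conditioning each attempt on successes from similar queries.
    for _ in range(rounds):
        unresolved = [q for q in queries if q not in solved]
        if not unresolved:
            break
        for q in unresolved:
            demos = nearest_demos(q, pool, k)
            for _ in range(samples_per_round):
                response = generate(q, demos)
                if verify(q, response):
                    solved[q] = response
                    pool.append((q, response))  # pool evolves as queries resolve
                    break
    return solved
```

In this sketch the demonstration pool grows whenever a query is resolved, so later rounds can draw on successes that did not exist during the warm-up. Solved queries receive no further samples, which is one simple way the compute savings mentioned above could arise.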
