A Principled Approach for Creating High-fidelity Synthetic Demonstrations for Imitation Learning

arXiv cs.RO / 5/5/2026


Key Points

- The paper proposes a principled method for synthesizing demonstrations for imitation learning. The method preserves the expert trajectory instead of generating new motion with sampling-based planners or trajectory optimization, both of which can drift away from the demonstrated path.

- It treats the expert trajectory as a strong prior. The motion is modeled with Dynamic Movement Primitives (DMPs) and retargeted to new goals, object configurations, and viewpoints within a scene reconstructed with 3D Gaussian Splatting (3DGS), while preserving phase consistency and the shape of the original motion.

- To handle clutter and collisions safely, the authors introduce an analytic obstacle-aware DMP formulation that directly leverages the continuous density field produced by 3D Gaussian Splatting, enabling collision avoidance with minimal perturbation of the nominal expert motion.

- Experiments on a Spot mobile manipulator across three tasks of increasing sensitivity show that the approach reduces trajectory deviation and collision rates and improves task success, particularly when the synthetic demonstrations are used to train diffusion-based visuomotor policies.
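To make the retargeting and obstacle-avoidance ideas above concrete, here is a minimal Python sketch. It assumes the common discrete DMP formulation: a critically damped spring toward the goal g plus a learned forcing term that is driven by a shared phase variable x and scaled by (g - y0). It is not the paper's method. The gains, basis count, demo path, and every function name (`fit_dmp`, `rollout`, `density_grad`, `min_jerk_demo`) are hypothetical. The obstacle term is a simple stand-in for the paper's analytic formulation. It models the 3DGS density as a sum of isotropic Gaussians, rho(p) = sum_k o_k exp(-|p - mu_k|^2 / (2 sigma_k^2)), and adds -k_obs * grad(rho) to the acceleration. This pushes the motion away from dense regions and leaves it essentially unchanged wherever the density is negligible.

```python
import math

# Hypothetical gains and basis count: common textbook DMP choices, not the paper's.
ALPHA_Z, BETA_Z, ALPHA_X, N_BASIS = 25.0, 25.0 / 4.0, 3.0, 30


def make_basis():
    """Gaussian basis centers/widths, spaced evenly in time over the phase x."""
    centers = [math.exp(-ALPHA_X * i / (N_BASIS - 1)) for i in range(N_BASIS)]
    widths = [N_BASIS**1.5 / c / ALPHA_X for c in centers]
    return centers, widths


def basis(x, centers, widths):
    return [math.exp(-h * (x - c) ** 2) for c, h in zip(centers, widths)]


def phase(t, tau):
    """Canonical system tau * x' = -ALPHA_X * x, solved in closed form."""
    return math.exp(-ALPHA_X * t / tau)


def fit_dmp(demo, dt):
    """Fit per-dimension forcing weights to a demo given as a list of tuples."""
    n, tau = len(demo), (len(demo) - 1) * dt
    y0, g = demo[0], demo[-1]
    centers, widths = make_basis()
    weights = []
    for d in range(len(y0)):
        y = [p[d] for p in demo]
        yd = [0.0] + [(y[i] - y[i - 1]) / dt for i in range(1, n)]
        ydd = [0.0] + [(yd[i] - yd[i - 1]) / dt for i in range(1, n)]
        num, den = [0.0] * N_BASIS, [0.0] * N_BASIS
        for i in range(n):
            x = phase(i * dt, tau)
            # Invert tau*z' = a_z*(b_z*(g - y) - z) + f, with z = tau*y'.
            f_target = tau**2 * ydd[i] - ALPHA_Z * (BETA_Z * (g[d] - y[i]) - tau * yd[i])
            s = x * (g[d] - y0[d])  # forcing scales with (g - y0)
            for k, psi in enumerate(basis(x, centers, widths)):
                num[k] += psi * s * f_target
                den[k] += psi * s * s
        # Locally weighted regression per basis; a zero-displacement axis gets w = 0.
        weights.append([a / (b + 1e-10) for a, b in zip(num, den)])
    return {"w": weights, "y0": y0, "g": g, "tau": tau, "c": centers, "h": widths}


def density_grad(p, gaussians):
    """Gradient of rho(p) = sum_k o_k * exp(-|p - mu_k|^2 / (2 * sigma_k^2))."""
    grad = [0.0] * len(p)
    for mu, sigma, opacity in gaussians:
        diff = [a - b for a, b in zip(p, mu)]
        w = opacity * math.exp(-sum(v * v for v in diff) / (2 * sigma**2))
        for d in range(len(p)):
            grad[d] -= w * diff[d] / sigma**2
    return grad


def rollout(dmp, goal=None, steps=None, dt=0.01, gaussians=(), k_obs=0.0):
    """Integrate the DMP with semi-implicit Euler, optionally retargeted and repelled."""
    g = goal if goal is not None else dmp["g"]
    y0, tau = dmp["y0"], dmp["tau"]
    y, z = list(y0), [0.0] * len(y0)
    steps = steps if steps is not None else int(round(tau / dt)) + 1
    path = [tuple(y)]
    for k in range(steps - 1):
        x = phase(k * dt, tau)  # one shared phase keeps all axes time-aligned
        psi = basis(x, dmp["c"], dmp["h"])
        total = sum(psi) + 1e-10
        # Hypothetical coupling term: accelerate down the density gradient.
        grad = density_grad(y, gaussians) if gaussians else [0.0] * len(y)
        for d in range(len(y)):
            f = sum(p * w for p, w in zip(psi, dmp["w"][d])) / total * x * (g[d] - y0[d])
            zdot = (ALPHA_Z * (BETA_Z * (g[d] - y[d]) - z[d]) + f - k_obs * grad[d]) / tau
            z[d] += zdot * dt
            y[d] += z[d] / tau * dt
        path.append(tuple(y))
    return path


def min_jerk_demo(n=101):
    """Hypothetical 2-D demo: a curved path from (0, 0) to (1, 1)."""
    pts = []
    for i in range(n):
        t = i / (n - 1)
        s = 10 * t**3 - 15 * t**4 + 6 * t**5  # zero velocity at both ends
        pts.append((s, s + 0.3 * math.sin(math.pi * s)))
    return pts


if __name__ == "__main__":
    dmp = fit_dmp(min_jerk_demo(), dt=0.01)
    retargeted = rollout(dmp, goal=(1.2, 0.8), steps=151)
    obstacle = [((0.55, 0.7), 0.1, 1.0)]  # (mean, std, opacity) of one blob
    avoided = rollout(dmp, steps=151, gaussians=obstacle, k_obs=2.0)
    print(retargeted[-1], avoided[-1])
```

The forcing term is scaled per axis by (g - y0), so `rollout(dmp, goal=(1.2, 0.8))` replays the demonstrated shape stretched toward the new goal with the same timing. Passing `gaussians=[((0.55, 0.7), 0.1, 1.0)]` with `k_obs=2.0` bends the path only while it passes near that blob. Plain gradient repulsion has one known limitation: along a line aimed straight through a Gaussian's center, the gradient is parallel to the motion, so the path gets no sideways push.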

Related Articles

The 55.6% problem: why frontier LLMs fail at embedded code

Dev.to

Four CVEs in a week, all the same shape: when agents execute LLM-generated code

Dev.to

Stop Burning Cash: How to Compress LLM Prompts by 60% in Real-Time | 0507-0255

Dev.to

Healthcare AI Is Absorbing Institutional Knowledge It Can't Actually Hold

Reddit r/artificial

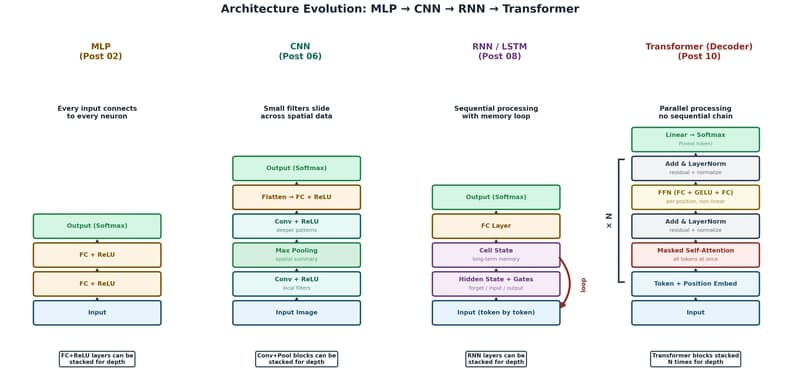

The Transformer: The Architecture Behind Modern AI

Dev.to