Prompt Engineering for AI Agents: 7 Production Patterns That Beat “Better Prompts”

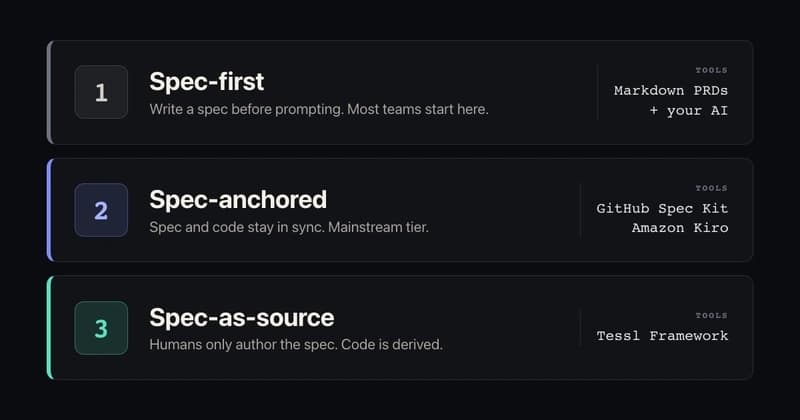

Most prompt engineering advice is still written for one-off ChatGPT conversations.

That is useful, but it misses where developers are spending more time now: AI agents, coding assistants, automation workflows, and LLM-powered product features.

In those systems, the winning prompt is not usually the longest prompt. It is the prompt that makes the model easier to control, test, debug, and reuse.

I checked recent DEV topics around #ai, #productivity, and #promptengineering, and a clear pattern stood out: developers are talking less about magic wording and more about agent architecture, token costs, control flow, prompt quality, and production reliability.

So here is the practical version: seven prompt patterns I would use when moving from “cool demo” to “repeatable AI workflow.”

1. The Role + Boundary Pattern

Bad agent prompts often give the model a role but no boundary.

You are a senior developer. Build the feature.

That sounds strong, but it gives the model too much room to invent context, skip steps, or over-engineer.

A better production prompt defines both identity and limits:

You are a senior backend engineer working inside an existing codebase.

Your job:

- Propose the smallest safe implementation plan.

- Do not rewrite unrelated modules.

- Do not add new dependencies unless necessary.

- Ask for missing context before making assumptions.

Output:

1. Files likely to change

2. Step-by-step plan

3. Risks

4. Tests to run

The role gives direction. The boundary prevents chaos.

Use this when: building coding agents, ticket triage bots, refactoring assistants, or internal workflow copilots.
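
If you assemble prompts in code, one way to keep the role and its limits together is a small helper like the sketch below. The `AgentPrompt` class and its field names are my own illustration, not part of any library; the idea is simply that a role cannot be rendered without at least one boundary.

```python
from dataclasses import dataclass, field


@dataclass
class AgentPrompt:
    """A role plus explicit limits and a fixed output outline (hypothetical helper)."""

    role: str
    boundaries: list[str] = field(default_factory=list)
    output_sections: list[str] = field(default_factory=list)

    def render(self) -> str:
        if not self.boundaries:
            # A role with no limits is the failure mode described above.
            raise ValueError("add at least one boundary before rendering")
        lines = [f"You are {self.role}.", "", "Your job:"]
        lines += [f"- {rule}" for rule in self.boundaries]
        lines += ["", "Output:"]
        lines += [f"{i}. {name}" for i, name in enumerate(self.output_sections, 1)]
        return "\n".join(lines)


prompt = AgentPrompt(
    role="a senior backend engineer working inside an existing codebase",
    boundaries=[
        "Propose the smallest safe implementation plan.",
        "Do not rewrite unrelated modules.",
        "Do not add new dependencies unless necessary.",
        "Ask for missing context before making assumptions.",
    ],
    output_sections=["Files likely to change", "Step-by-step plan", "Risks", "Tests to run"],
)
print(prompt.render())
```

Raising on an empty boundary list is a design choice, not a requirement. It just makes the "role without boundary" mistake fail loudly during development instead of quietly in production.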

2. The Context Budget Pattern

Developers often paste everything into a prompt and hope the model “figures it out.”

That works until your agent becomes slow, expensive, and inconsistent.

Instead, separate context into three layers:

Always include:

- User goal

- Current task

- Relevant constraints

Include only when needed:

- Recent conversation history

- Related files

- Previous decisions

Do not include:

- Unrelated logs

- Full documentation dumps

- Old chat history unless requested

The point is not to starve the model. The point is to make context intentional.

A practical prompt might say:

Use only the context provided below.

If the context is insufficient, say what is missing.

Do not infer undocumented requirements.

Those three short lines can prevent a surprising amount of hallucinated architecture.

Use this when: building agents with retrieval, long-running conversations, or codebase context windows.
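
The three layers above can be sketched as a small context builder. Everything here is an assumption for illustration: the function names are mine, the "4 characters per token" estimate is only a rough heuristic (use your model's real tokenizer if you have one), and the optional items are assumed to arrive sorted by relevance. The "do not include" layer has no code at all, because those items are simply never passed in.

```python
def estimate_tokens(text: str) -> int:
    # Rough heuristic (assumption): about 4 characters per token.
    return max(1, len(text) // 4)


def build_context(always: list[str], optional: list[str], budget: int) -> str:
    """Keep every 'always' item, then add optional items while they fit the budget."""
    chosen = list(always)
    used = sum(estimate_tokens(item) for item in chosen)
    for item in optional:  # assumed to be ordered most relevant first
        cost = estimate_tokens(item)
        if used + cost > budget:
            continue  # too large; a later, smaller item may still fit
        chosen.append(item)
        used += cost
    return "\n\n".join(chosen)


always = [
    "User goal: fix the checkout form on Safari",
    "Current task: propose the smallest safe change",
    "Constraints: no new dependencies",
]
optional = [
    "Previous decision: keep the existing validation library.",
    "Old log dump: " + "x" * 4000,  # far over budget, so it is left out
]
context = build_context(always, optional, budget=200)
print(context)
```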

3. The Decision Log Pattern

Agents are hard to debug when they only output the final answer.

A lightweight decision log makes the reasoning reviewable without asking for a giant chain-of-thought dump.

Example:

Before the final answer, provide a short decision log:

- Key facts used

- Assumptions made

- Options considered

- Why the recommended option was chosen

For a coding assistant, this can become:

Decision log:

1. Which files influenced your answer?

2. What assumption are you making?

3. What is the smallest safe change?

4. What test would catch failure?

This improves trust because the developer can inspect the agent’s basis for action.

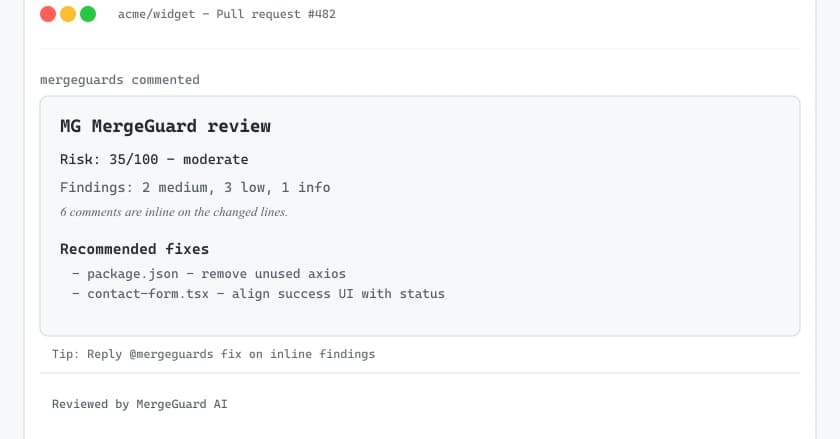

Use this when: reviewing pull requests, generating implementation plans, triaging bugs, or creating automation decisions.
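
To make the log reviewable by code as well as by people, you can split it from the answer before storing or displaying it. This is a minimal sketch that assumes the prompt also tells the model to put `Final answer:` on its own line after the log. That marker and the `split_decision_log` name are my assumptions, not something the patterns above require.

```python
def split_decision_log(response: str) -> tuple[list[str], str]:
    """Return (decision log entries, final answer) from a model response."""
    _, found_log, rest = response.partition("Decision log:")
    log_text, found_answer, answer = rest.partition("Final answer:")
    if not found_log or not found_answer:
        # Treat a missing log as a failure instead of guessing at the structure.
        raise ValueError("response is missing the decision log or the final answer")
    entries = [
        line.strip().lstrip("-").strip()
        for line in log_text.splitlines()
        if line.strip()
    ]
    return entries, answer.strip()


sample = """Decision log:
- Key facts used: the checkout form fails only on Safari
- Assumptions made: the latest deploy changed form validation
Final answer:
Roll back the validation change and add a Safari regression test."""

entries, answer = split_decision_log(sample)
print(entries)
print(answer)
```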

4. The Output Contract Pattern

If another system consumes your agent's output, vague formatting is a bug.

Compare this:

Give me the result as JSON.

With this:

Return valid JSON only. No markdown.

Schema:

{
  "summary": "string",
  "risk_level": "low | medium | high",
  "missing_info": ["string"],
  "next_action": "string"
}

If you cannot answer, return:

{
  "summary": "insufficient context",
  "risk_level": "high",
  "missing_info": ["specific missing item"],
  "next_action": "ask_for_context"
}

The output of the second prompt is easier to parse, test, and monitor.

Use this when: your agent connects to APIs, ticketing systems, CRM tools, CI workflows, or no-code automation platforms.
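
A contract only helps if the consuming side checks it. Here is a minimal validator for the schema above, using only Python's standard `json` module. The function names are mine, and treating any invalid output as the "insufficient context" object is one possible policy, not the only reasonable one.

```python
import json

REQUIRED_KEYS = {"summary", "risk_level", "missing_info", "next_action"}
ALLOWED_RISK = {"low", "medium", "high"}


def fallback(reason: str) -> dict:
    """Build the 'insufficient context' object described in the prompt."""
    return {
        "summary": "insufficient context",
        "risk_level": "high",
        "missing_info": [reason],
        "next_action": "ask_for_context",
    }


def parse_agent_output(raw: str) -> dict:
    """Return the parsed object if it matches the contract, else the fallback."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return fallback("model output was not valid JSON")
    valid = (
        isinstance(data, dict)
        and set(data) == REQUIRED_KEYS
        and isinstance(data["summary"], str)
        and isinstance(data["risk_level"], str)
        and data["risk_level"] in ALLOWED_RISK
        and isinstance(data["missing_info"], list)
        and all(isinstance(item, str) for item in data["missing_info"])
        and isinstance(data["next_action"], str)
    )
    return data if valid else fallback("model output did not match the schema")


good = '{"summary": "ok", "risk_level": "low", "missing_info": [], "next_action": "merge"}'
print(parse_agent_output(good))
print(parse_agent_output("Sure! Here is the JSON you asked for."))
```

Counting how often the fallback fires gives you a cheap monitoring signal for format drift after a prompt or model change.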

5. The Escalation Pattern

Many AI agents fail because they are too eager to answer.

Production agents need permission to stop.

Add an escalation rule:

Escalate instead of answering when:

- The request involves legal, financial, medical, or security risk.

- Required context is missing.

- The action could modify production data.

- Your confidence in the answer is low.

- The user asks for something outside the allowed scope.

For developer workflows:

Do not edit production configuration.

Do not rotate secrets.

Do not delete data.

If a requested change could break deployment, propose a plan and ask for confirmation.

This is not just safety theater. It creates predictable behavior.

Use this when: agents can trigger tools, write code, send messages, update records, or make recommendations that affect real users.
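
Prompt rules like these are easier to rely on when the code that executes tool calls applies the same rules. The sketch below is one way to do that. The action names, the path prefixes, the self-reported `confidence` field, and the 0.7 threshold are all hypothetical values chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class ProposedAction:
    name: str          # hypothetical tool name, e.g. "edit_file"
    target: str        # what the action touches
    confidence: float  # self-reported by the agent, 0.0 to 1.0 (assumption)


BLOCKED_ACTIONS = {"edit_production_config", "rotate_secret", "delete_data"}
CONFIRM_PREFIXES = ("deploy/", "infra/")  # hypothetical paths that can break deployment
MIN_CONFIDENCE = 0.7                      # illustrative threshold, tune for your system


def decide(action: ProposedAction) -> str:
    """Return 'escalate', 'ask_for_confirmation', or 'allow' for a proposed action."""
    if action.name in BLOCKED_ACTIONS:
        return "escalate"
    if action.confidence < MIN_CONFIDENCE:
        return "escalate"
    if action.target.startswith(CONFIRM_PREFIXES):
        return "ask_for_confirmation"
    return "allow"


print(decide(ProposedAction("edit_file", "src/checkout/form.py", 0.9)))
print(decide(ProposedAction("edit_file", "deploy/pipeline.yml", 0.9)))
print(decide(ProposedAction("delete_data", "orders", 0.99)))
```

The order of the checks matters: blocked actions escalate no matter how confident the agent claims to be.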

6. The Eval Case Pattern

A prompt is not production-ready until you have examples it should pass.

You do not need a huge benchmark to start. Create 10-20 representative cases.

Example:

Test case: Bug triage

Input: "Users on Safari cannot submit the checkout form after the latest deploy."

Expected output:

- Category: bug

- Priority: high

- Suggested owner: frontend

- Missing info: browser version, console errors, affected route

- Must not suggest a backend database migration

Then test prompt changes against the same cases.

Track:

- Did the output format stay valid?

- Did the agent ask for missing context?

- Did it avoid unsafe actions?

- Did token usage increase?

- Did the answer become more useful?

This turns prompt engineering from guessing into iteration.

Use this when: you are improving a prompt over time or letting multiple people edit agent behavior.
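
A tiny harness is enough to rerun the same cases after every prompt change. In this sketch the `EvalCase` fields and the `run_evals` function are my own naming, and `stub_agent` is a stand-in for your real model call so that the example runs without network access.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class EvalCase:
    name: str
    user_input: str
    expected: dict[str, str]                                    # fields that must match exactly
    forbidden_phrases: list[str] = field(default_factory=list)  # text that must not appear


def run_evals(agent: Callable[[str], dict], cases: list[EvalCase]) -> dict[str, list[str]]:
    """Return failure messages per case; an empty list means the case passed."""
    results: dict[str, list[str]] = {}
    for case in cases:
        output = agent(case.user_input)
        failures = [
            f"{key}: expected {want!r}, got {output.get(key)!r}"
            for key, want in case.expected.items()
            if output.get(key) != want
        ]
        flat = " ".join(str(value) for value in output.values()).lower()
        failures += [
            f"forbidden phrase: {phrase!r}"
            for phrase in case.forbidden_phrases
            if phrase.lower() in flat
        ]
        results[case.name] = failures
    return results


def stub_agent(user_input: str) -> dict:
    # Stand-in for a real model call (assumption); replace with your agent.
    return {"category": "bug", "priority": "high", "owner": "frontend",
            "next_step": "Collect console errors and the affected route"}


cases = [
    EvalCase(
        name="Bug triage",
        user_input="Users on Safari cannot submit the checkout form after the latest deploy.",
        expected={"category": "bug", "priority": "high", "owner": "frontend"},
        forbidden_phrases=["database migration"],
    )
]
print(run_evals(stub_agent, cases))
```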

7. The Versioned Prompt Pattern

The worst production prompt is the one nobody can roll back.

Keep prompts in versioned files:

/prompts
  code_review_agent_v1.md
  code_review_agent_v2.md
  support_triage_agent_v1.md
  support_triage_agent_v2.md

Each version should include:

Prompt name:

Version:

Owner:

Last updated:

Changed because:

Known risks:

Eval cases passed:

This seems boring until something breaks.

Then boring becomes valuable.

A versioned prompt lets you answer:

- What changed?

- Why did it change?

- Which evals passed?

- Which production issue started after this update?

- Can we roll back safely?

Use this when: prompts are part of a product, internal tool, automation workflow, or customer-facing AI feature.
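
With the file naming shown above, loading the latest version or pinning an older one for rollback takes only a few lines. This sketch assumes the `<name>_v<number>.md` convention holds for every file. The `latest_prompt` and `load_prompt` helpers are hypothetical names of mine.

```python
import re
from pathlib import Path
from typing import Optional

VERSION_RE = re.compile(r"^(?P<name>.+)_v(?P<version>\d+)\.md$")


def latest_prompt(prompt_dir: Path, name: str) -> Path:
    """Return the highest-numbered prompt file for the given agent name."""
    candidates = []
    for path in prompt_dir.glob(f"{name}_v*.md"):
        match = VERSION_RE.match(path.name)
        if match and match.group("name") == name:
            # Compare versions as integers so v10 sorts after v2.
            candidates.append((int(match.group("version")), path))
    if not candidates:
        raise FileNotFoundError(f"no prompt versions found for {name!r}")
    return max(candidates, key=lambda item: item[0])[1]


def load_prompt(prompt_dir: Path, name: str, version: Optional[int] = None) -> str:
    """Load a pinned version if given, otherwise the latest one."""
    if version is not None:
        path = prompt_dir / f"{name}_v{version}.md"
    else:
        path = latest_prompt(prompt_dir, name)
    return path.read_text(encoding="utf-8")
```

Rolling back then means changing one pinned number in configuration, for example `load_prompt(Path("prompts"), "code_review_agent", version=1)`, instead of editing the prompt text under pressure.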

A simple production prompt template

Here is a combined template you can adapt:

You are [role] working inside [system/context].

Goal:

[Specific task]

Boundaries:

- [What the agent must not do]

- [When to ask for confirmation]

- [When to escalate]

Context rules:

- Use only the provided context.

- If context is missing, list what is missing.

- Do not invent requirements.

Decision log:

- Key facts used

- Assumptions made

- Recommended path

- Main risk

Output format:

[Exact structure or JSON schema]

Quality bar:

- Prefer the smallest safe change.

- Mention tradeoffs.

- Include tests or validation steps.

This is not fancy. That is the point.

The best production prompts are often boring, explicit, and easy to evaluate.

Final thought

“Better prompts” are useful.

But production AI systems need more than clever phrasing.

They need:

- boundaries

- context control

- output contracts

- escalation rules

- eval cases

- versioning

- rollback paths

That is the difference between a prompt that works once and a prompt that can survive inside a real workflow.

If you are building AI agents, do not only ask:

How do I make the model smarter?

Ask:

How do I make the model easier to control when it is wrong?

That question leads to much better systems.

If you want a structured library of developer-focused prompts, workflows, and reusable templates, I built the Developer Prompt Bible for exactly this kind of practical AI work.

👉 Developer Prompt Bible — $9

https://payhip.com/b/ADsQI

It is designed for developers who want repeatable prompt systems for coding, debugging, planning, reviewing, and shipping faster with AI.