Most agents I've seen in the wild make the same mistake: they pick one model and route every task through it. If they picked a cheap one, the agent fails on hard tasks. If they picked a flagship, they're burning money on every "what's the weather" reply.

Mixing fast and deep models in one agent is the single highest-leverage move you can make on cost. Done right, it can cut your AI bill by 70–90% with no perceptible drop in quality.

The math

Take a typical production agent doing 50,000 tasks/month at ~1,000 tokens per task. That's 50M tokens a month. Assume roughly 75% of those are input tokens and 25% are output.

If everything runs on Claude Opus 4.7 ($5 input / $25 output per million):

- ~$500/month, conservative

If you split the workload — 70% on Gemini 3 Flash ($0.30/$0.50), 20% on Claude Sonnet 4.6 ($3/$15), 10% on Opus 4.7:

- Fast tier: ~$15/month

- Mid tier: ~$60/month

- Flagship tier: ~$50/month

- Total: ~$125/month

Same agent, same outputs from a user's perspective, 75% lower cost.
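The figures above can be reproduced with a few lines of arithmetic. This is a minimal sketch, not a billing tool. The 75/25 input/output token split and the flagship price of $5/$25 per million are assumptions, chosen because they reproduce the quoted ~$500 and ~$50 figures. The `Tier` class and its `monthly_cost` method are illustrative names.

```python
from dataclasses import dataclass

TOTAL_TOKENS_M = 50.0   # 50,000 tasks x ~1,000 tokens, in millions of tokens
INPUT_FRACTION = 0.75   # assumed share of tokens that are input


@dataclass(frozen=True)
class Tier:
    name: str
    input_price: float   # USD per million input tokens
    output_price: float  # USD per million output tokens
    share: float         # fraction of monthly tokens routed to this tier

    def monthly_cost(self) -> float:
        tokens_m = TOTAL_TOKENS_M * self.share
        blended = (INPUT_FRACTION * self.input_price
                   + (1 - INPUT_FRACTION) * self.output_price)
        return tokens_m * blended


# Baseline: every token goes to the flagship (assumed $5/$25 pricing).
all_flagship = Tier("flagship", 5.0, 25.0, 1.0).monthly_cost()

# The 70/20/10 split from the text.
split = [
    Tier("fast", 0.30, 0.50, 0.70),
    Tier("mid", 3.0, 15.0, 0.20),
    Tier("flagship", 5.0, 25.0, 0.10),
]
split_total = sum(t.monthly_cost() for t in split)
savings = 1 - split_total / all_flagship

for t in split:
    print(f"{t.name:9s} ${t.monthly_cost():7.2f}")
print(f"all-flagship ${all_flagship:.2f}, split ${split_total:.2f}, "
      f"savings {savings:.1%}")
```

Under these assumptions the split comes to roughly $122 against $500, in line with the rounded figures above. Changing `INPUT_FRACTION` or the prices shifts the totals, so rerun it with your own traffic mix.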

What "fast" and "deep" actually mean

These aren't model categories — they're modes the same agent can call into.

Fast mode = high-throughput, low-latency, low-cost. The agent uses it when:

- The user is waiting (chat replies, voice)

- The task is well-defined (classification, formatting, "extract the date from this email")

- The agent is doing internal bookkeeping (deciding which tool to call, summarizing prior context)

In 2026, the fast tier includes Gemini 3 Flash (250+ t/s), GPT-5.4 mini xhigh (151 t/s, $1.69/M), Qwen 3.6 Plus (53 t/s, $1.13/M), and Grok 4.20 (168 t/s).

Deep mode = the agent stops to think hard. Used when:

- The task involves multi-step reasoning (debugging, planning, compliance review)

- The output goes to the user as the final answer, not an intermediate step

- The blast radius of getting it wrong is high (sending an email, executing a trade, deploying code)

Deep tier in 2026: Claude Opus 4.7, GPT-5.4 xhigh, Gemini 3.1 Pro Preview. Sonnet 4.6 is the "almost-flagship" middle option many teams default to.

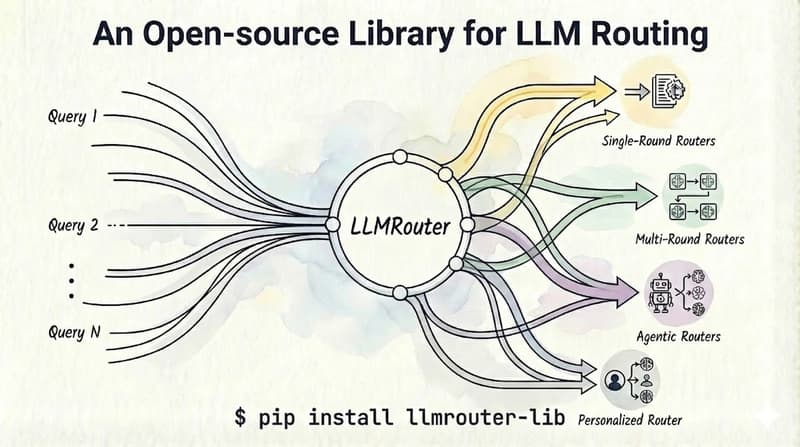

Routing patterns that work

Pattern 1: Confidence-based escalation. Run the task on the fast model and have it rate its own confidence. If the rating falls below a threshold, re-run the task on the deep model. This works well for classification, extraction, and summarization. It adds one round trip on uncertain cases but avoids most flagship calls.
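A minimal sketch of confidence-based escalation follows. The models are passed in as plain callables so the example runs without any provider SDK. `FastResult`, `answer_with_escalation`, the stub models, and the 0.8 threshold are all illustrative assumptions rather than part of any real API.

```python
from typing import Callable, NamedTuple


class FastResult(NamedTuple):
    answer: str
    confidence: float  # the fast model's self-rated confidence, 0.0 to 1.0


def answer_with_escalation(
    task: str,
    fast_model: Callable[[str], FastResult],
    deep_model: Callable[[str], str],
    threshold: float = 0.8,
) -> tuple[str, str]:
    """Return (answer, tier_used); escalate only when the fast model is unsure."""
    result = fast_model(task)
    if result.confidence >= threshold:
        return result.answer, "fast"
    # Uncertain case: pay for one extra round trip on the deep model.
    return deep_model(task), "deep"


# Stub models for illustration; real ones would call a provider API.
def stub_fast(task: str) -> FastResult:
    confident = "classify" in task
    return FastResult(answer=f"fast:{task}", confidence=0.95 if confident else 0.4)


def stub_deep(task: str) -> str:
    return f"deep:{task}"


print(answer_with_escalation("classify this ticket", stub_fast, stub_deep))
print(answer_with_escalation("debug this race condition", stub_fast, stub_deep))
```

The threshold is the knob to tune: set it against logged cases where the fast model's self-rating turned out to be wrong.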

Pattern 2: Task-class routing. Hard-code the routing per task type. Calendar parsing → fast. Legal review → deep. Customer-support draft → fast for first pass, deep if customer escalates. Easiest to reason about and debug.
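Task-class routing can be as small as a lookup table. The sketch below uses hypothetical task-type names taken from the examples above. Sending unknown task types to the deep tier is an assumption made here to fail safe, not something the pattern requires.

```python
# Hard-coded routing table: task type -> tier.
ROUTES = {
    "calendar_parsing": "fast",
    "legal_review": "deep",
    "support_draft": "fast",  # first pass only; see the escalation rule below
}

DEFAULT_TIER = "deep"  # assumption: unknown task types fail safe to the deep tier


def route(task_type: str, escalated: bool = False) -> str:
    """Pick a tier for a task type; support drafts go deep once escalated."""
    if task_type == "support_draft" and escalated:
        return "deep"
    return ROUTES.get(task_type, DEFAULT_TIER)


print(route("calendar_parsing"))               # fast
print(route("support_draft", escalated=True))  # deep
print(route("something_new"))                  # deep
```

Because the table is plain data, a routing change shows up as a one-line diff, which is much of why this pattern is easy to debug.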

Pattern 3: Two-stage agent. Fast model plans the work and decides which tools to call. Deep model executes the steps that actually require thinking. Most production agents end up here. The "Fast / Deep" toggle in Klaws is exactly this — Fast mode runs the agent loop on Gemini 3 Flash for snappy chat; Deep mode swaps in Qwen 3.6 Plus and Claude Opus when the question deserves real thought.
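The two-stage shape can be sketched as a planner that tags each step, plus a loop that picks an executor per step. This is an illustration under stated assumptions, not how Klaws or any other product implements it. `Step`, `run_two_stage`, and the stub functions are hypothetical, and running the untagged steps on the fast model is a choice made for this sketch.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Step:
    description: str
    needs_deep: bool  # set by the fast planner


def run_two_stage(
    goal: str,
    planner: Callable[[str], list[Step]],
    fast_exec: Callable[[str], str],
    deep_exec: Callable[[str], str],
) -> list[str]:
    """Plan with the fast model, then run each step on the tier it was tagged for."""
    results = []
    for step in planner(goal):
        executor = deep_exec if step.needs_deep else fast_exec
        results.append(executor(step.description))
    return results


# Stubs standing in for real model calls.
def stub_planner(goal: str) -> list[Step]:
    return [
        Step(f"fetch data for {goal}", needs_deep=False),
        Step(f"analyze root cause of {goal}", needs_deep=True),
    ]


def stub_fast_exec(description: str) -> str:
    return f"[fast] {description}"


def stub_deep_exec(description: str) -> str:
    return f"[deep] {description}"


for line in run_two_stage("checkout errors", stub_planner, stub_fast_exec, stub_deep_exec):
    print(line)
```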

Pattern 4: User-controlled. Ship a "Deep" button or /think harder command. Default to fast; let the user opt in to deep when they need it. Surprising how often users self-select correctly.
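For the command variant, the agent only needs to detect the opt-in and strip it before the prompt reaches the model. A minimal sketch, assuming the command is the literal prefix `/think harder`. The `select_mode` helper is hypothetical.

```python
DEEP_COMMAND = "/think harder"


def select_mode(message: str) -> tuple[str, str]:
    """Return (mode, prompt); default to fast unless the user opts in to deep."""
    text = message.strip()
    if text.lower().startswith(DEEP_COMMAND):
        # Strip the command so only the actual question reaches the model.
        return "deep", text[len(DEEP_COMMAND):].strip()
    return "fast", text


print(select_mode("/think harder why is my deploy failing?"))
print(select_mode("what's the weather"))
```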

What goes wrong

The mistakes I see most often:

Routing on prompt length. "Long prompt → big model" is a bad heuristic. A 50-token "what's the airspeed velocity of an unladen swallow" needs Opus. A 50,000-token "summarize this transcript" can run on Flash.

Skipping the eval. You can't tell which tier a task belongs to without running it through both models and comparing. The intuition "this is hard" is unreliable. I've watched teams burn 10x on a task class their cheapest model handles fine.
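A small harness makes that comparison concrete: score each tier on labeled examples for one task class, then pick the cheapest tier that clears the bar. The sketch below assumes exact-match scoring and a 95% accuracy bar, both of which are simplifications. `accuracy`, `cheapest_passing_tier`, and the stub models are hypothetical names.

```python
from typing import Callable, Optional, Sequence

Model = Callable[[str], str]
Example = tuple[str, str]  # (prompt, expected answer)


def accuracy(model: Model, examples: Sequence[Example]) -> float:
    """Fraction of examples the model answers exactly right."""
    correct = sum(1 for prompt, expected in examples if model(prompt) == expected)
    return correct / len(examples)


def cheapest_passing_tier(
    tiers: Sequence[tuple[str, Model]],  # ordered cheapest first
    examples: Sequence[Example],
    min_accuracy: float = 0.95,
) -> Optional[str]:
    """Return the first (cheapest) tier whose accuracy meets the bar, else None."""
    for name, model in tiers:
        if accuracy(model, examples) >= min_accuracy:
            return name
    return None


def make_stub(answers: dict[str, str]) -> Model:
    """Build a fake model that answers from a fixed lookup table."""
    return lambda prompt: answers.get(prompt, "")


examples = [("extract date: lunch on 2026-03-01", "2026-03-01"),
            ("extract date: call on 2026-04-15", "2026-04-15")]
fast = make_stub(dict(examples))  # this stub gets every example right
deep = make_stub(dict(examples))

print(cheapest_passing_tier([("fast", fast), ("deep", deep)], examples))
```

Here both stubs pass, so the harness returns the cheaper tier. With real models, exact match is often too strict for free-text output and would need a task-specific scorer.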

No fallbacks. Fast models hit rate limits. Flagships have outages. If your agent has only one model wired in, an Anthropic incident kills your product. Always have a fallback model, ideally from a different provider as well as a different tier.
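A fallback chain can be sketched as an ordered list of models tried in turn. `ModelUnavailable` is a hypothetical exception standing in for whatever rate-limit or outage errors your provider clients raise, and the stub models are illustrative.

```python
from typing import Callable, Optional, Sequence


class ModelUnavailable(Exception):
    """Hypothetical error for a rate limit or provider outage."""


def call_with_fallback(
    task: str,
    models: Sequence[tuple[str, Callable[[str], str]]],  # in preference order
) -> tuple[str, str]:
    """Try each model in order; return (model_name, answer) from the first that works."""
    last_error: Optional[Exception] = None
    for name, model in models:
        try:
            return name, model(task)
        except ModelUnavailable as err:
            last_error = err  # remember the failure and try the next model
    raise RuntimeError("all models failed") from last_error


# Stubs: the primary is down, the backup answers.
def flaky_primary(task: str) -> str:
    raise ModelUnavailable("rate limited")


def backup(task: str) -> str:
    return f"backup:{task}"


print(call_with_fallback("summarize this", [("primary", flaky_primary), ("backup", backup)]))
```

Returning the model name with the answer also gives you the "which model handled which task" record you need for debugging.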

Treating cost as the only axis. Sometimes the deep model is the only one that doesn't refuse on edge cases (security research, medical, legal). Sometimes the fast model is the only one with the right tool-call schema. Cost is one constraint among several.

The implementation reality

Doing this yourself means: vendor accounts at 3–5 providers, per-task routing logic, eval harnesses to validate routing decisions, retry/fallback on rate limits, billing reconciliation across vendors, and tracking which model handled which task for debugging. It's a sub-team's worth of work.

The shortcut: use a platform that does the routing. Klaws routes simple chat to Gemini 3 Flash, complex reasoning to Qwen 3.6 Plus or Claude Opus 4.7, code to GPT-5.3 Codex, long documents to Gemini 3.1 Pro — and you pay flat credits instead of juggling APIs. Same routing playbook, none of the plumbing.

For deeper reads: the full 2026 model leaderboard, how to choose your model, and how to switch models without rebuilding your agent.