DGHMesh: A Large-scale Dual-radar mmWave Dataset and Generalization-focused Benchmark for Human Mesh Reconstruction

arXiv cs.CV / 4/28/2026


Key Points

- The paper introduces DGHMesh, a large-scale dual-radar mmWave dataset and a benchmark designed to evaluate how well human mesh reconstruction (HMR) methods generalize under configuration shifts.

- DGHMesh contains synchronized FMCW radar, SFCW radar, and RGB image data with high-precision 3D human mesh annotations, totaling 360,000 frames from 15 subjects performing 8 actions.

- The benchmark provides synchronized raw I/Q radar data and accurately calibrated radar spatial positions, enabling fair comparisons across different measurement setups and algorithm variants.

- The paper also proposes mmPTM, a query-based multi-radar fusion framework that combines point clouds and imaging tubes, and reports strong accuracy and competitive generalization across multiple sub-benchmarks.

- The authors plan to release DGHMesh, the full benchmark, and the associated code publicly on GitHub after the paper is published.
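
To make the idea of testing generalization under configuration shifts concrete, here is a minimal sketch of a leave-one-group-out split over per-frame metadata. It is not the benchmark's actual protocol, which this summary does not describe: the metadata fields `frame_id`, `subject`, and `radar_config`, the values `"near"` and `"far"`, and the helper `leave_one_group_out` are all hypothetical names chosen for illustration.

```python
from collections import defaultdict
from typing import Dict, Hashable, Iterator, List, Tuple

Frame = Dict[str, Hashable]


def leave_one_group_out(
    frames: List[Frame], key: str
) -> Iterator[Tuple[Hashable, List[Frame], List[Frame]]]:
    """Yield (held_out_value, train, test) once per distinct value of `key`.

    Every frame whose `key` equals the held-out value goes to the test set;
    all remaining frames form the training set.
    """
    groups: Dict[Hashable, List[Frame]] = defaultdict(list)
    for frame in frames:
        groups[frame[key]].append(frame)

    for held_out in sorted(groups):
        test = groups[held_out]
        train = [
            frame
            for value, members in groups.items()
            if value != held_out
            for frame in members
        ]
        yield held_out, train, test


# Toy metadata (hypothetical fields, not the real DGHMesh schema).
frames = [
    {"frame_id": i, "subject": i % 3, "radar_config": "near" if i < 6 else "far"}
    for i in range(12)
]

# Hold out each radar configuration in turn.
splits = list(leave_one_group_out(frames, "radar_config"))
for held_out, train, test in splits:
    print(held_out, len(train), len(test))
```

Passing `"subject"` instead of `"radar_config"` as the key would give a cross-subject split with the same helper, which is why the grouping field is left as a parameter.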

Related Articles

Black Hat USA

AI Business

Write a 1,200-word blog post: "What is Generative Engine Optimization (GEO) and why SEO teams need it now"

Dev.to

Remove Background from Image Free (No Signup): The Practical Guide

Dev.to

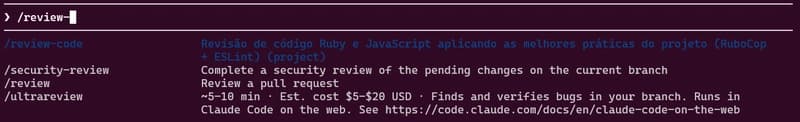

how to use skills from Claude Code A.K.A Claudinho.

Dev.to

Indian Developers: How to Build AI Side Income with $0 Capital in 2026

Dev.to