Turning the TIDE: Cross-Architecture Distillation for Diffusion Large Language Models

arXiv cs.CL / 4/30/2026

Key Points

- The paper introduces TIDE, a new framework for cross-architecture distillation of diffusion LLMs, addressing the gap left by prior methods that only distill within the same architecture.

- TIDE combines three modular techniques: TIDAL, which adapts distillation strength to both training progress and diffusion timestep; CompDemo, which improves teacher predictions under heavy masking; and Reverse CALM, which handles cross-tokenizer learning with stable, bounded gradients.

- Experiments distill large teachers (8B dense and 16B MoE) into a small 0.6B student through heterogeneous pipelines, for an average improvement of 1.53 points over the baseline across eight benchmarks.

- The strongest gains appear in code generation, where HumanEval reaches 48.78 versus 32.3 for the autoregressive (AR) baseline.

- The work suggests cross-architecture teacher-student transfer can retain high performance in diffusion LLMs while greatly reducing model size and inference cost.
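
To make the TIDAL idea more concrete, here is a minimal sketch of a distillation weight that depends on both training progress and diffusion timestep. It is not the paper's formulation: the cosine ramp, the linear down-weighting at high mask ratios, and the names `distill_weight` and `combined_loss` are all assumptions chosen for illustration.

```python
import math


def distill_weight(step: int, total_steps: int, t: float, w_max: float = 1.0) -> float:
    """Hypothetical distillation weight in [0, w_max].

    step / total_steps is the training progress in [0, 1].
    t is the diffusion timestep read as a mask ratio in [0, 1]
    (1.0 means every token is masked).
    """
    if total_steps <= 0:
        raise ValueError("total_steps must be positive")
    progress = min(max(step / total_steps, 0.0), 1.0)
    t = min(max(t, 0.0), 1.0)
    # Assumption: ramp the teacher signal in smoothly as training proceeds.
    progress_factor = 0.5 * (1.0 - math.cos(math.pi * progress))
    # Assumption: trust the teacher less when more of the input is masked.
    timestep_factor = 1.0 - t
    return w_max * progress_factor * timestep_factor


def combined_loss(task_loss: float, kd_loss: float,
                  step: int, total_steps: int, t: float) -> float:
    """Blend the task loss and the distillation loss with the weight above."""
    w = distill_weight(step, total_steps, t)
    return (1.0 - w) * task_loss + w * kd_loss
```

Under these assumptions the weight is zero at the first step, regardless of timestep, and reaches `w_max` only at the end of training on a fully unmasked input. The schedule in the paper may use different shapes or even the opposite direction.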

Related Articles

We Built a DNS-Based Discovery Protocol for AI Agents — Here's How It Works

Dev.to

Building AI Evaluation Pipelines: Automating LLM Testing from Dataset to CI/CD

Dev.to

Function Calling Harness 2: CoT Compliance from 9.91% to 100%

Dev.to

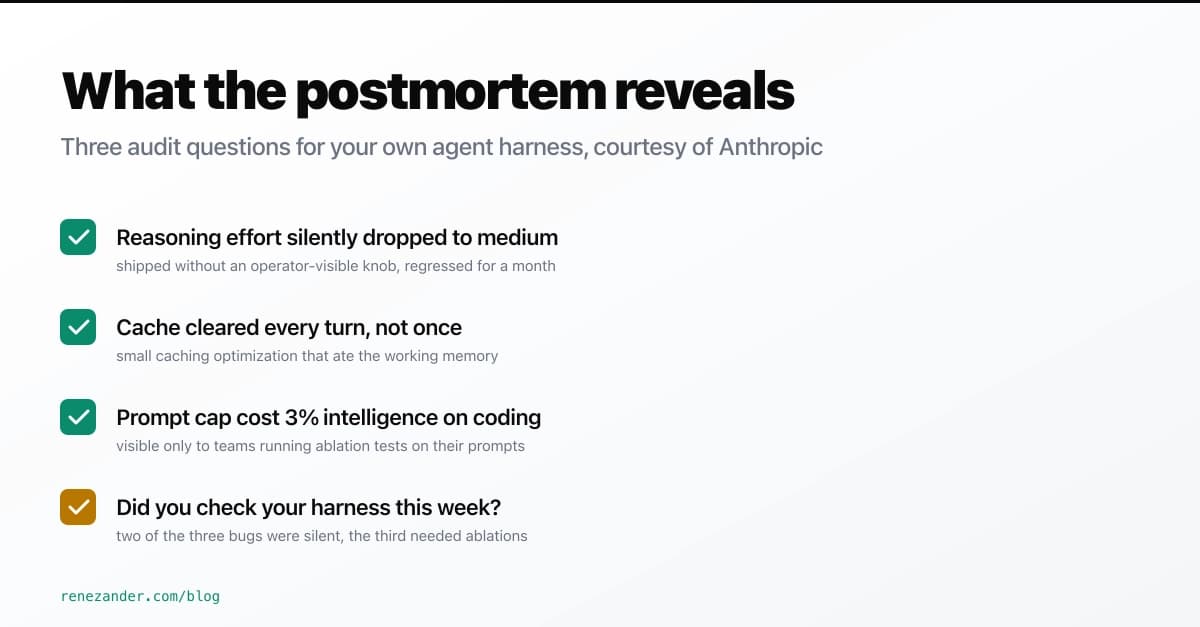

What Anthropic's April 23 Postmortem Reveals About Your Agent Harness

Dev.to

Fine-tuning YOLOv11 to detect stamps and signatures on banking documents - a practical walkthrough

Dev.to