Multi-Branch Non-Homogeneous Image Dehazing via Concentration Partitioning and Image Fusion

arXiv cs.CV / 5/5/2026

Key Points

- The paper addresses a key weakness of existing single-image dehazing methods: they often fail on non-homogeneous hazy images with spatially varying haze density and abrupt transitions.

- It proposes CPIFNet, a multi-branch deep neural network that decomposes a non-homogeneous dehazing task into multiple approximately homogeneous sub-tasks by treating the hazy image as a composite of local regions.

- CPIFNet uses a two-stage design: an Image Enhancement Network (IENet) stage whose branches are each trained on homogeneous haze at a different concentration level, followed by an Image Fusion Network (IFNet) stage that merges the best-restored regions from the branch outputs.

- The approach is trained with a combined loss function that includes reconstruction, perceptual, structural, and color losses to jointly supervise both stages for improved visual quality.
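
The two-stage idea in the key points above can be illustrated with a toy sketch. This is not the paper's implementation: the summary does not say how IFNet combines the branch outputs or how the loss terms are weighted. The per-pixel softmax weighting, the function names `fuse` and `combined_loss`, the score maps, and the loss weights are all assumptions made for illustration. In CPIFNet the fusion is learned by a network; here the scores are supplied by hand.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def fuse(branch_outputs, score_maps):
    """Blend K restored images pixel by pixel (hypothetical stand-in for IFNet).

    branch_outputs: K grayscale images, each an H x W list of lists, one per
        haze-concentration branch.
    score_maps: K maps of the same shape; a higher score means the branch is
        trusted more at that pixel (assumed input, not from the paper).
    """
    height = len(branch_outputs[0])
    width = len(branch_outputs[0][0])
    fused = [[0.0] * width for _ in range(height)]
    for y in range(height):
        for x in range(width):
            weights = softmax([scores[y][x] for scores in score_maps])
            fused[y][x] = sum(
                w * image[y][x] for w, image in zip(weights, branch_outputs)
            )
    return fused


def combined_loss(terms, weights):
    """Weighted sum of named loss terms; the weights are assumed values."""
    return sum(weights[name] * value for name, value in terms.items())


# Two branches on a 1 x 2 image: the first is trusted on the left pixel,
# the second on the right pixel.
light_branch = [[0.2, 0.9]]
heavy_branch = [[0.8, 0.1]]
scores = [[[10.0, -10.0]], [[-10.0, 10.0]]]
fused_image = fuse([light_branch, heavy_branch], scores)

# Placeholder loss values, one per term named in the summary.
loss = combined_loss(
    {"reconstruction": 1.0, "perceptual": 2.0, "structural": 0.5, "color": 0.25},
    {"reconstruction": 1.0, "perceptual": 1.0, "structural": 1.0, "color": 1.0},
)
```

With strongly separated scores, each fused pixel is taken almost entirely from the trusted branch, which mirrors the idea of selecting the best-restored region per location. A soft weighting is used here rather than a hard selection because it keeps the blend smooth across region boundaries.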

Related Articles

The 55.6% problem: why frontier LLMs fail at embedded code

Dev.to

Four CVEs in a week, all the same shape: when agents execute LLM-generated code

Dev.to

Stop Burning Cash: How to Compress LLM Prompts by 60% in Real-Time | 0507-0255

Dev.to

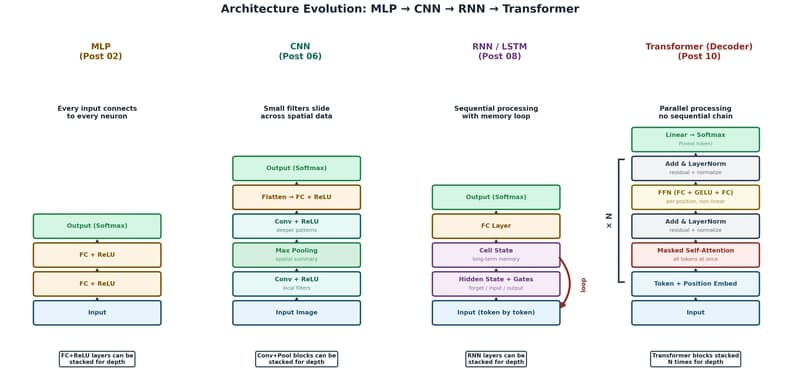

The Transformer: The Architecture Behind Modern AI

Dev.to

Foundational Models Defining a New Era in Vision: A Survey and Outlook

Dev.to