Lexical Tone is Hard to Quantize: Probing Discrete Speech Units in Mandarin and Yor\`ub\'a

arXiv cs.CL / 4/10/2026

💬 OpinionSignals & Early TrendsIdeas & Deep AnalysisModels & Research

Key Points

- The paper studies discrete speech units (DSUs) created by quantizing self-supervised learning (SSL) representations and finds that they encode suprasegmental features (like prosody) less reliably than segmental/phonetic structure.

- Experiments on Mandarin and Yoruba suggest SSL latent spaces do contain tone information, but common DSU quantization methods (including K-means and alternatives) tend to prioritize phonetic structure, weakening lexical tone representation.

- The authors conclude that current DSU quantization strategies have systematic limitations for suprasegmental features, implying broader issues for other prosody-related attributes.

- They propose a potential improvement: cluster once to capture phonetic information and then cluster again on the residual representation to better encode lexical tone.

Related Articles

Black Hat Asia

AI Business

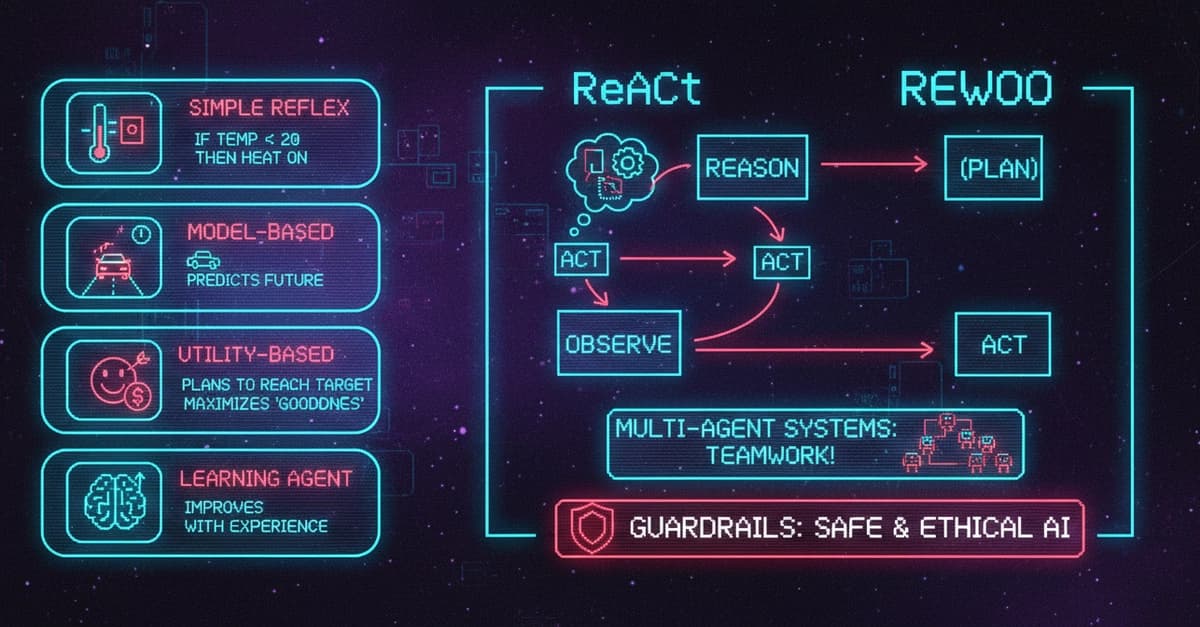

AI Agents Explained: 5 Types, Components, Frameworks, and Real-World Use Cases

Dev.to

Edge-to-Cloud Swarm Coordination for circular manufacturing supply chains with embodied agent feedback loops

Dev.to

Why QIS Is Not a Sync Problem: The Mailbox Model for Distributed Intelligence

Dev.to

The Ethics of AI: A Developer's Responsibility

Dev.to