Submitted by /u/Total-Resort-3120

ParoQuant: Pairwise Rotation Quantization for Efficient Reasoning LLM Inference

Reddit r/LocalLLaMA / 5/7/2026

📰 News · Developer Stack & Infrastructure · Tools & Practical Usage · Models & Research

Key Points

- ParoQuant introduces a pairwise rotation quantization approach aimed at more efficient inference for reasoning-focused LLMs.

- The project provides public resources including a dedicated website, a GitHub repository, and Hugging Face collections to support adoption and experimentation.

- The method pairs rotation with quantization, aiming to cut compute and memory costs without degrading reasoning performance.

- The release is positioned as a practical optimization for local or otherwise resource-constrained LLM deployments.
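
To make the idea behind the name concrete, here is a minimal sketch of why rotating a pair of values before quantization can help. It is not ParoQuant's actual algorithm: the toy weight group, the chosen pair (indices 0 and 1), the fixed 45° angle, and the helper names `quantize_group`, `rotate_pair`, and `squared_error` are all assumptions made for illustration. The sketch only shows the general effect: a 2x2 rotation spreads an outlier over two entries, the shared quantization scale shrinks, and because a rotation is exactly invertible the weights can be rotated back afterwards.

```python
import math

def quantize_group(values, bits=4):
    """Symmetric round-to-nearest quantization with one scale shared by the group."""
    qmax = 2 ** (bits - 1) - 1  # 7 for signed 4-bit
    scale = max(abs(v) for v in values) / qmax
    if scale == 0.0:
        return list(values)
    return [round(v / scale) * scale for v in values]

def rotate_pair(values, i, j, theta):
    """Apply a 2x2 rotation to entries i and j; all other entries are unchanged."""
    out = list(values)
    c, s = math.cos(theta), math.sin(theta)
    out[i] = c * values[i] - s * values[j]
    out[j] = s * values[i] + c * values[j]
    return out

def squared_error(xs, ys):
    """Sum of squared differences between two equally long sequences."""
    return sum((x - y) ** 2 for x, y in zip(xs, ys))

# Hypothetical weight group: one outlier (8.0) next to small values.
weights = [8.0, 0.2, 0.5, -0.5, 0.5, -0.5, 0.5, -0.5]
theta = math.pi / 4  # hand-picked angle, an assumption of this sketch

# Baseline: quantize the group as-is; the outlier dictates a coarse scale.
direct = quantize_group(weights)

# Rotate the outlier with a neighbour, quantize, then undo the rotation.
rotated = rotate_pair(weights, 0, 1, theta)
restored = rotate_pair(quantize_group(rotated), 0, 1, -theta)

err_direct = squared_error(weights, direct)
err_rotated = squared_error(weights, restored)

print(f"max |w| before rotation: {max(abs(w) for w in weights):.3f}")
print(f"max |w| after rotation:  {max(abs(w) for w in rotated):.3f}")
print(f"squared error, direct:   {err_direct:.3f}")
print(f"squared error, rotated:  {err_rotated:.3f}")
```

In this toy group the rotation lowers the largest magnitude, so the small values land on a finer grid and the total error drops. That outcome depends on the data and the angle; a rotation can also make a given pair worse, which is presumably why a real method has to choose its pairs and angles with care.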

Related Articles

Black Hat USA

AI Business

The 55.6% problem: why frontier LLMs fail at embedded code

Dev.to

Four CVEs in a week, all the same shape: when agents execute LLM-generated code

Dev.to

Stop Burning Cash: How to Compress LLM Prompts by 60% in Real-Time | 0507-0255

Dev.to

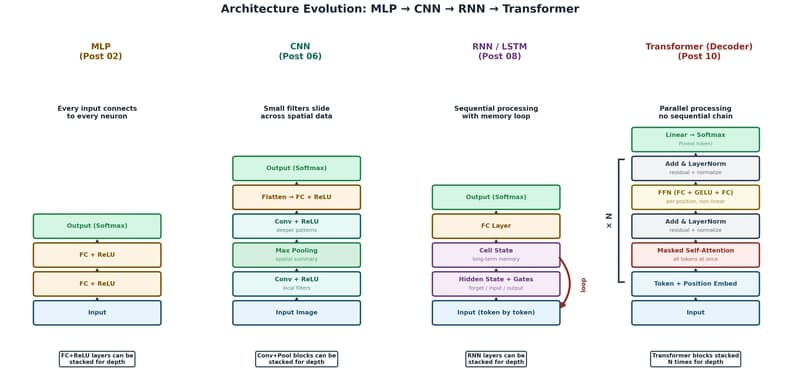

The Transformer: The Architecture Behind Modern AI

Dev.to