20th April 2026 - Link Blog

Claude Token Counter, now with model comparisons. I upgraded my Claude Token Counter tool to add the ability to run the same count against different models in order to compare them.

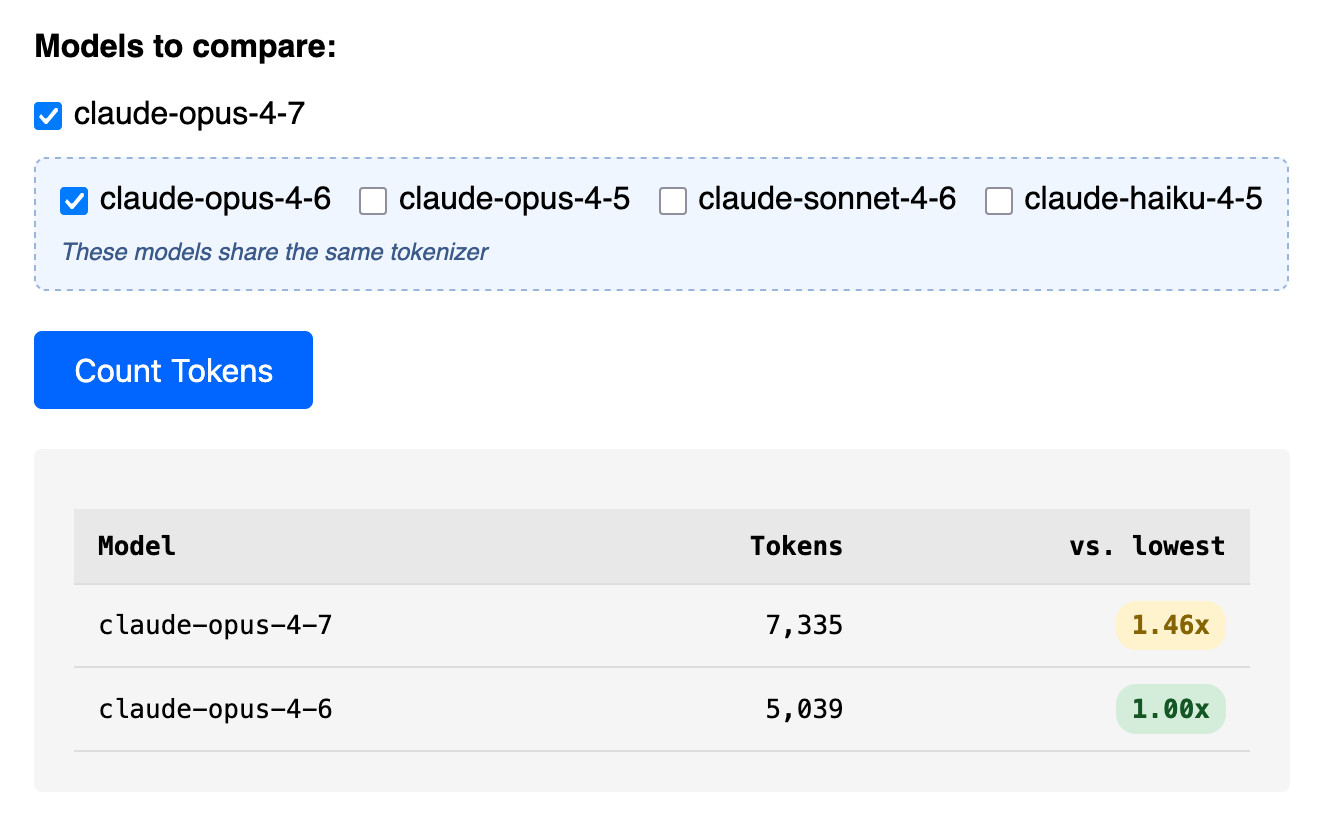

As far as I can tell Claude Opus 4.7 is the first model to change the tokenizer, so it's only worth running comparisons between 4.7 and 4.6. The Claude token counting API accepts any Claude model ID though so I've included options for all four of the notable current models (Opus 4.7 and 4.6, Sonnet 4.6, and Haiku 4.5).
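A minimal sketch of that kind of comparison, assuming the documented `POST /v1/messages/count_tokens` endpoint and an `ANTHROPIC_API_KEY` environment variable. This is not the tool's actual code. The `compare_models` helper and its `counter` parameter are names made up for illustration.

```python
import json
import os
import urllib.request

COUNT_TOKENS_URL = "https://api.anthropic.com/v1/messages/count_tokens"


def count_tokens(model, text, api_key=None):
    """Ask the token counting endpoint how many input tokens `text` uses for `model`."""
    # Assumption: the key lives in the ANTHROPIC_API_KEY environment variable.
    api_key = api_key or os.environ["ANTHROPIC_API_KEY"]
    body = json.dumps({
        "model": model,
        "messages": [{"role": "user", "content": text}],
    }).encode("utf-8")
    request = urllib.request.Request(
        COUNT_TOKENS_URL,
        data=body,
        headers={
            "x-api-key": api_key,
            "anthropic-version": "2023-06-01",
            "content-type": "application/json",
        },
        method="POST",
    )
    with urllib.request.urlopen(request) as response:
        return json.load(response)["input_tokens"]


def compare_models(text, models, baseline, counter=count_tokens):
    """Return {model: (token_count, ratio_vs_baseline)} for every model in `models`.

    `counter` is injectable so the comparison logic can be exercised
    without making network calls.
    """
    if baseline not in models:
        raise ValueError("baseline must be one of the models being compared")
    counts = {model: counter(model, text) for model in models}
    base = counts[baseline]
    return {model: (n, n / base) for model, n in counts.items()}
```

Calling `compare_models(prompt, [new_id, old_id], baseline=old_id)` with two model ID strings gives each model's count and its ratio to the baseline. The exact ID strings for Opus 4.7 and 4.6 are not given here, so check them against the current model list.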

In the Opus 4.7 announcement Anthropic said:

> Opus 4.7 uses an updated tokenizer that improves how the model processes text. The tradeoff is that the same input can map to more tokens—roughly 1.0–1.35× depending on the content type.

I pasted the Opus 4.7 system prompt into the token counting tool and found that the Opus 4.7 tokenizer used 1.46x the number of tokens as Opus 4.6.

Opus 4.7 uses the same pricing as Opus 4.6 - $5 per million input tokens and $25 per million output tokens - but this token inflation means we can expect it to be around 40% more expensive.
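To make the arithmetic concrete, here is a rough sketch using the prices quoted above. It assumes the token multiplier applies equally to input and output, which is a simplification: the real ratio varies by content type. The function names are made up for illustration.

```python
# Prices quoted in the post, in US dollars per million tokens.
INPUT_PRICE_PER_MILLION = 5.00
OUTPUT_PRICE_PER_MILLION = 25.00


def cost_usd(input_tokens, output_tokens):
    """Cost of a request at the quoted per-million-token prices."""
    return (
        input_tokens * INPUT_PRICE_PER_MILLION
        + output_tokens * OUTPUT_PRICE_PER_MILLION
    ) / 1_000_000


def inflated_cost_usd(input_tokens, output_tokens, ratio):
    """Cost of the same text if it tokenizes to `ratio` times as many tokens.

    Assumption: the same ratio applies to both input and output tokens.
    """
    return cost_usd(input_tokens * ratio, output_tokens * ratio)
```

For a workload of 1,000,000 input tokens and 100,000 output tokens, `cost_usd` gives $7.50. The same text at a 1.46x ratio comes to about $10.95, and at Anthropic's stated upper bound of 1.35x it comes to about $10.13.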

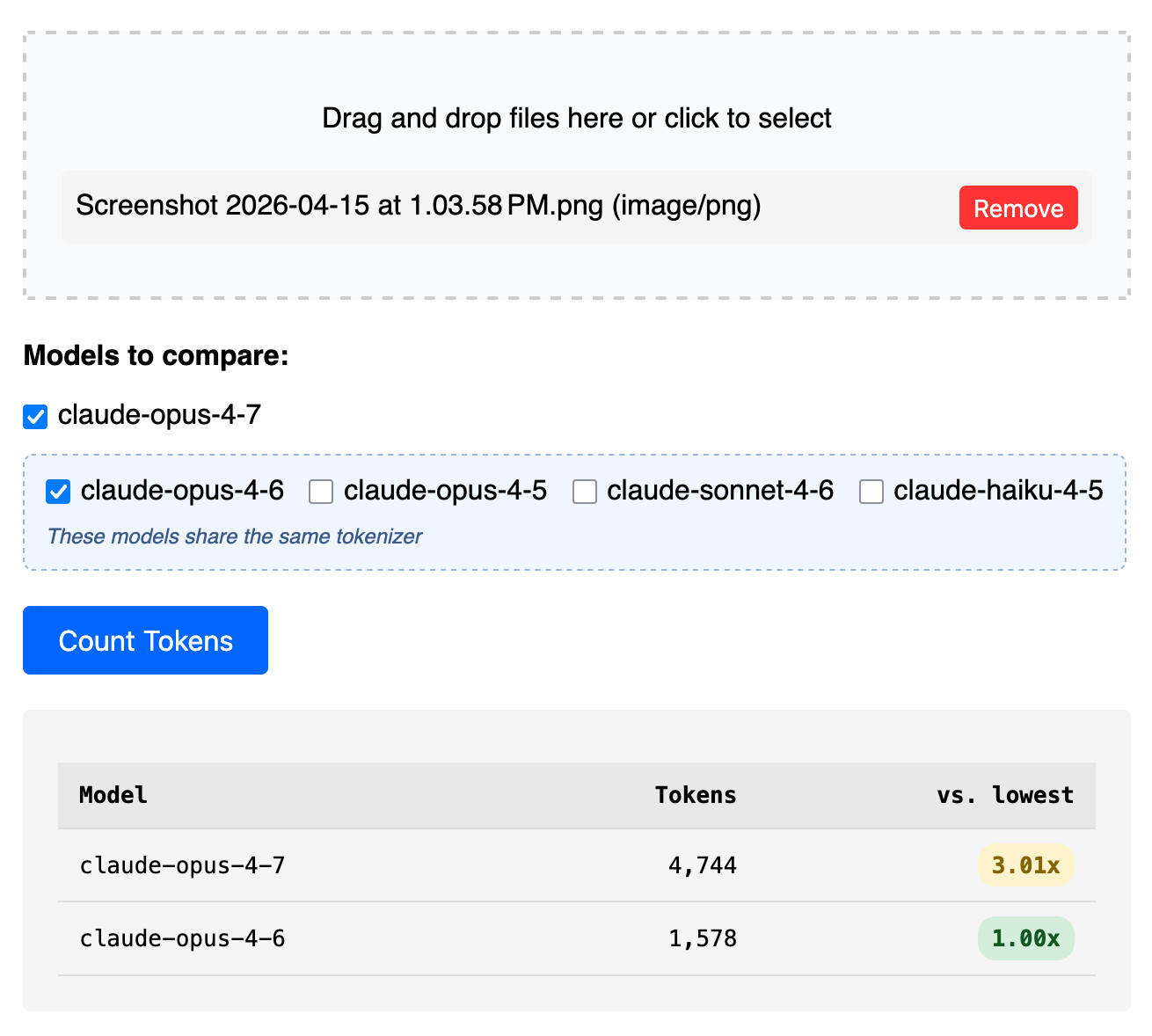

The token counter tool also accepts images. Opus 4.7 has improved image support, described like this:

> Opus 4.7 has better vision for high-resolution images: it can accept images up to 2,576 pixels on the long edge (~3.75 megapixels), more than three times as many as prior Claude models.

I tried counting tokens for a 3456 × 2234 pixel 3.7MB PNG and got an even bigger increase in token counts: 3.01x the number of tokens for 4.7 compared to 4.6.

This is a link post by Simon Willison, posted on 20th April 2026.