HeLa-Mem: Hebbian Learning and Associative Memory for LLM Agents

arXiv cs.CL / 4/21/2026


Key Points

- The paper argues that LLM agents struggle with long-term coherence because fixed context windows limit what they can retain, and embedding-based retrieval often fails to capture the associative structure of human memory.

- HeLa-Mem introduces a bio-inspired memory architecture using Hebbian learning, representing memories as a dynamic graph governed by mechanisms akin to association, consolidation, and spreading activation.

- It uses a dual-level design: an episodic memory graph that updates from co-activation patterns, and a semantic store built via “Hebbian Distillation” by a Reflective Agent that extracts structured knowledge from dense memory hubs.

- Experiments on the LoCoMo benchmark show HeLa-Mem outperforming baselines across four question categories while using significantly fewer context tokens. The authors have released the code in an open-source GitHub repository.
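
To make the ideas in the key points concrete, here is a minimal Python sketch of a Hebbian memory graph with spreading activation and hub detection. It is an illustration under stated assumptions, not the authors' implementation: the class name `EpisodicGraph`, the additive update rule, the multiplicative decay, and the parameters `learning_rate`, `decay`, `damping`, and `min_strength` are all hypothetical and do not come from the paper or its repository.

```python
from collections import defaultdict
from itertools import combinations


class EpisodicGraph:
    """Toy associative memory (hypothetical): nodes are memory ids,
    and edge weights grow when two memories are activated together."""

    def __init__(self, learning_rate=0.5, decay=0.9):
        self.learning_rate = learning_rate  # assumed additive Hebbian increment
        self.decay = decay                  # assumed per-step forgetting factor
        self.weights = defaultdict(dict)    # symmetric: weights[a][b] == weights[b][a]

    def co_activate(self, memory_ids):
        """Hebbian step: decay every edge, then strengthen all pairs that fired together."""
        for neighbors in self.weights.values():
            for other in neighbors:
                neighbors[other] *= self.decay
        for a, b in combinations(sorted(set(memory_ids)), 2):
            new_weight = self.weights[a].get(b, 0.0) + self.learning_rate
            self.weights[a][b] = new_weight
            self.weights[b][a] = new_weight

    def spread(self, seeds, hops=2, damping=0.5):
        """Spreading activation: push energy from seed memories along weighted edges.
        Each node keeps the strongest activation that reaches it."""
        activation = {seed: 1.0 for seed in seeds}
        frontier = dict(activation)
        for _ in range(hops):
            next_frontier = {}
            for node, energy in frontier.items():
                for neighbor, weight in self.weights.get(node, {}).items():
                    gain = energy * weight * damping
                    if gain > activation.get(neighbor, 0.0):
                        activation[neighbor] = gain
                        next_frontier[neighbor] = gain
            frontier = next_frontier
        return sorted(activation.items(), key=lambda item: -item[1])

    def hubs(self, min_strength):
        """Nodes whose total edge weight passes a threshold: candidates for
        summarization into a semantic store."""
        strength = {node: sum(nbrs.values()) for node, nbrs in self.weights.items()}
        return [node for node, total in strength.items() if total >= min_strength]


graph = EpisodicGraph(learning_rate=0.5, decay=1.0)
graph.co_activate(["trip_to_oslo", "met_anna"])
graph.co_activate(["met_anna", "anna_likes_jazz"])
print(graph.spread(["trip_to_oslo"]))  # ranked (memory id, activation) pairs
print(graph.hubs(min_strength=1.0))    # densely connected memories
```

In this sketch, a query about the Oslo trip can reach the jazz memory through the shared `met_anna` node even though the two were never activated together, which is the kind of multi-hop association that pure embedding similarity may miss. In a full system, the nodes returned by `hubs` would be handed to an LLM for summarization; that step is omitted here.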

Related Articles

Agent Package Manager (APM): A DevOps Guide to Reproducible AI Agents

Dev.to

3 Things I Learned Benchmarking Claude, GPT-4o, and Gemini on Real Dev Work

Dev.to

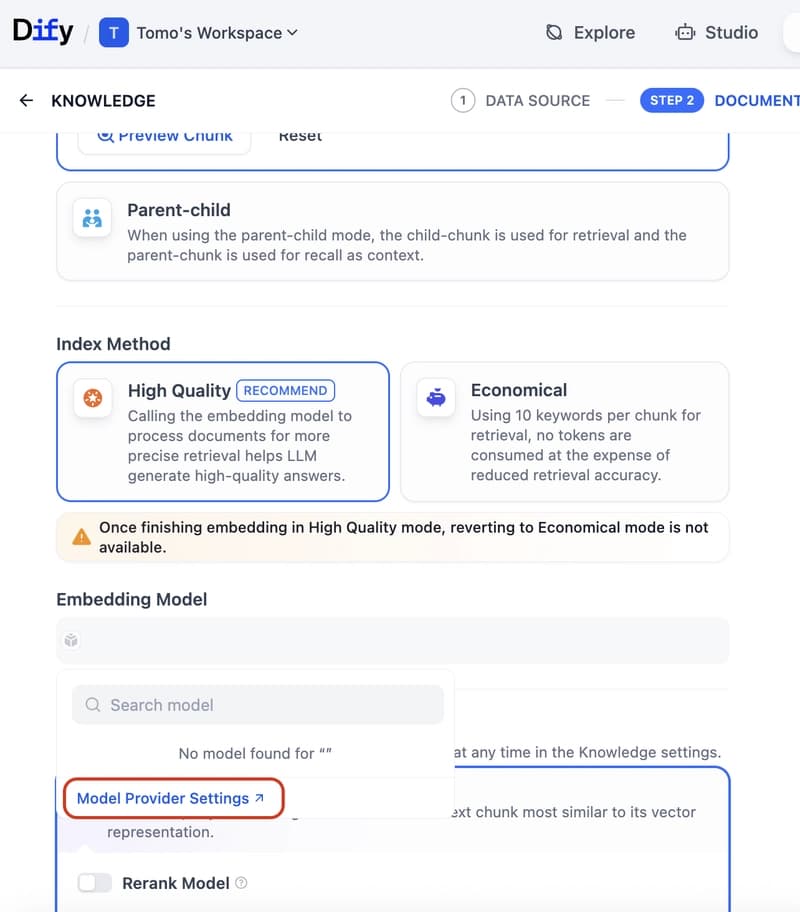

Dify Now Supports IRIS as a Vector Store — Setup Guide

Dev.to

How to build a Claude chatbot with streaming responses in under 50 lines of Node.js

Dev.to

Open Source Contributors Needed for Skillware & Rooms (AI/ML/Python)

Dev.to