Please refuse to answer me! Mitigating Over-Refusal in Large Language Models via Adaptive Contrastive Decoding

arXiv cs.CL / 4/21/2026

📰 NewsDeveloper Stack & InfrastructureModels & Research

Key Points

- The paper examines the “over-refusal” problem in safety-aligned LLMs, where models refuse even harmless requests and existing methods struggle to keep low refusal rates for benign queries while staying strict for malicious ones.

- It observes that in over-refusal cases, non-refusal tokens still appear in the next-token candidate list but the model systematically fails to select them, even as refusal tokens are generated.

- The authors propose AdaCD (Adaptive Contrastive Decoding), a training-free and model-agnostic method that adjusts refusal behavior by contrasting output distributions with and without an extreme safety system prompt.

- AdaCD adaptively adds or removes the refusal-token distribution during decoding, boosting the probability of either refusal or non-refusal tokens as appropriate.

- Experiments on five benchmark datasets show that AdaCD lowers the refusal ratio for over-refusal (harmless) queries by an average of 10.35% while only slightly increasing refusal ratio for malicious queries by 0.13%.

Related Articles

No Free Lunch Theorem — Deep Dive + Problem: Reverse Bits

Dev.to

Salesforce Headless 360: Run Your CRM Without a Browser

Dev.to

RAG Systems in Production: Building Enterprise Knowledge Search

Dev.to

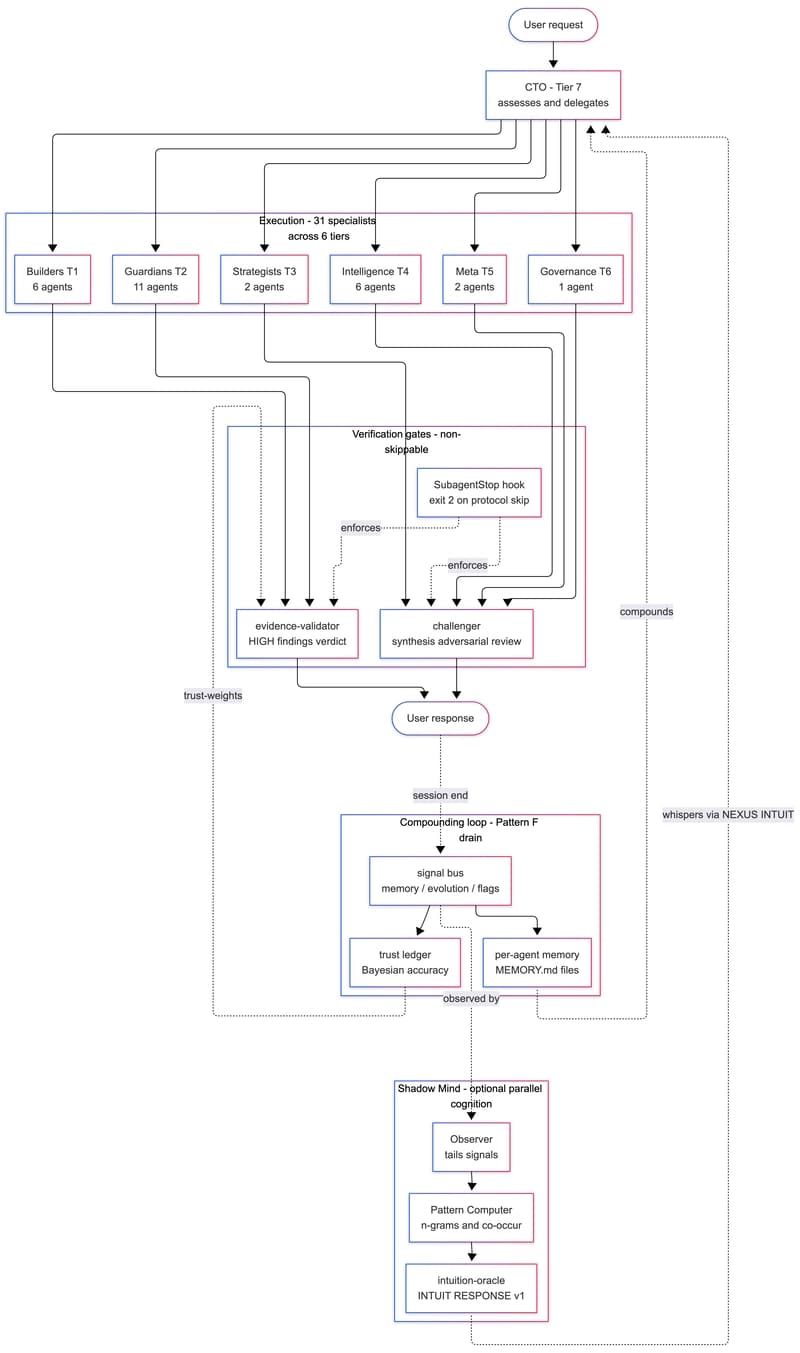

We Built a 31-Agent AI Team That Hires Itself, Critiques Itself, and Dreams

Dev.to

gpt-image-2 API: ship 2K AI images in Next.js for $0.21 (2026)

Dev.to