Bias-constrained multimodal intelligence for equitable and reliable clinical AI

arXiv cs.CV / 4/21/2026


Key Points

- The paper introduces BiasCareVL, a bias-aware multimodal learning framework for clinical AI that integrates bias control into the model design instead of relying on post hoc correction.

- It uses adaptive uncertainty modeling and optional human-in-the-loop refinement to limit the influence of dominant data patterns and to improve equitable reasoning under real-world distribution shifts.

- BiasCareVL is trained on 3.44 million samples across more than 15 imaging modalities and supports multiple clinical tasks (visual question answering, classification, segmentation, and report generation) in a unified representation space.

- Across eight public benchmarks in dermatology, oncology, radiology, and pathology, it outperforms 20 state-of-the-art methods, including accuracy gains of more than 10% for multi-class skin lesion diagnosis and Dice improvements of more than 20% for small tumor segmentation.

- The authors report diagnostic accuracy exceeding that of board-certified radiologists while requiring substantially less time, and they have open-sourced the framework to encourage transparency and reproducibility.
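The Dice coefficient cited for small tumor segmentation measures mask overlap as 2|P ∩ T| / (|P| + |T|), where P and T are the predicted and ground-truth foreground pixels. The snippet below is a minimal pure-Python sketch of that standard formula, not code from the BiasCareVL release; the helper name `dice_coefficient` and the toy 1-D masks are illustrative assumptions. It shows why small structures are a hard case: the same two-pixel boundary error costs far more Dice on a small lesion than on a large one.

```python
from typing import Sequence


def dice_coefficient(pred: Sequence[int], target: Sequence[int]) -> float:
    """Dice similarity between two binary masks flattened to equal-length sequences.

    Dice = 2 * |P ∩ T| / (|P| + |T|), where P and T are the foreground pixels.
    (Hypothetical helper for illustration only.)
    """
    if len(pred) != len(target):
        raise ValueError("masks must have the same number of pixels")
    # Pixels that are foreground in both masks.
    intersection = sum(1 for p, t in zip(pred, target) if p and t)
    # Total foreground pixels across both masks.
    total = sum(1 for p in pred if p) + sum(1 for t in target if t)
    if total == 0:
        # Both masks empty: a common convention is to score this as perfect agreement.
        return 1.0
    return 2.0 * intersection / total


# Small lesion: 4 foreground pixels; the prediction is shifted by 2 pixels.
small_target = [1] * 4 + [0] * 96
small_pred = [0] * 2 + [1] * 4 + [0] * 94

# Large lesion: 100 foreground pixels; the same 2-pixel shift.
large_target = [1] * 100 + [0] * 100
large_pred = [0] * 2 + [1] * 100 + [0] * 98

print(dice_coefficient(small_pred, small_target))  # 0.5
print(dice_coefficient(large_pred, large_target))  # 0.98
```

With an identical shift, the small lesion scores 0.5 while the large one scores 0.98, which is why Dice gains on small tumors are a demanding benchmark.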

Related Articles

Agent Package Manager (APM): A DevOps Guide to Reproducible AI Agents

Dev.to

3 Things I Learned Benchmarking Claude, GPT-4o, and Gemini on Real Dev Work

Dev.to

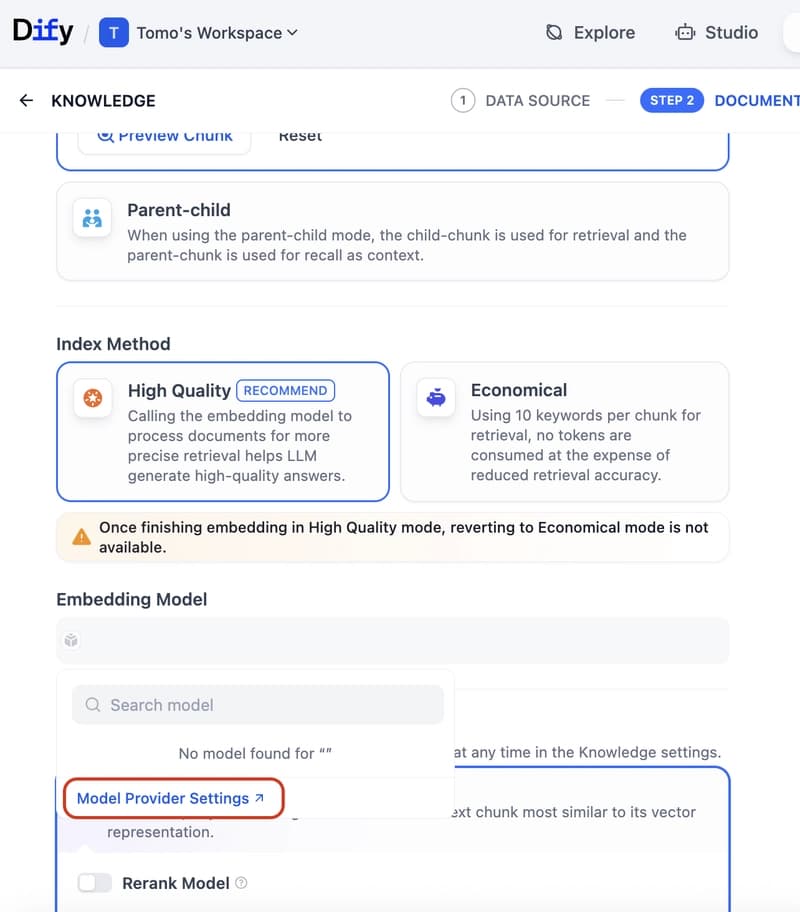

Dify Now Supports IRIS as a Vector Store — Setup Guide

Dev.to

How to build a Claude chatbot with streaming responses in under 50 lines of Node.js

Dev.to

Open Source Contributors Needed for Skillware & Rooms (AI/ML/Python)

Dev.to