LAGS: Low-Altitude Gaussian Splatting with Groupwise Heterogeneous Graph Learning

arXiv cs.CV / 4/21/2026


Key Points

- The paper introduces LAGS (Low-Altitude Gaussian Splatting), which reconstructs 3D scenes by aggregating aerial images from distributed drones. It argues that existing resource allocation schemes are inefficient because they ignore the image diversity induced by different viewpoints.

- It proposes GW-HGNN, a groupwise heterogeneous graph neural network, to allocate drone image transmissions. The network models the non-uniform contributions of different image groups to reconstruction and balances reconstruction fidelity against transmission cost.

- The method reframes LAGS losses and communication constraints as graph learning costs and performs dual-level message passing to learn the allocation policy.

- Experiments on real-world LAGS datasets show that GW-HGNN achieves significantly better rendering quality than existing benchmark schemes, as measured by PSNR, SSIM, and LPIPS.

- The approach also cuts computational latency by roughly 100× versus the MOSEK solver, enabling millisecond-level inference for real-time deployment.
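
For readers unfamiliar with the rendering-quality metrics named above, here is a minimal sketch of PSNR, the simplest of the three, using its standard definition of 10·log10(MAX² / MSE). It is not taken from the paper: the function name `psnr`, the flat pixel lists, and the sample values are illustrative assumptions, and a real evaluation would compare full rendered images against held-out ground-truth views.

```python
import math

def psnr(reference, rendered, max_value=1.0):
    """Peak signal-to-noise ratio in dB between two equally sized pixel sequences.

    Hypothetical helper for illustration; pixel values are assumed to lie
    in [0, max_value].
    """
    if len(reference) != len(rendered) or not reference:
        raise ValueError("inputs must be non-empty and the same length")
    # Mean squared error over all pixels.
    mse = sum((r - x) ** 2 for r, x in zip(reference, rendered)) / len(reference)
    if mse == 0:
        return math.inf  # identical images: PSNR is unbounded
    return 10.0 * math.log10(max_value ** 2 / mse)

# Toy data (made up): a ground-truth patch and two reconstructions of it.
reference = [0.0, 0.5, 1.0, 0.25]
close = [0.0, 0.5, 0.9, 0.25]   # one small error
rough = [0.2, 0.3, 0.7, 0.45]   # errors on every pixel

# A higher PSNR means the reconstruction is closer to the reference.
print(psnr(reference, close) > psnr(reference, rough))
```

Higher PSNR and SSIM indicate better reconstructions, while LPIPS is a learned perceptual distance for which lower is better.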

Related Articles

No Free Lunch Theorem — Deep Dive + Problem: Reverse Bits

Dev.to

Salesforce Headless 360: Run Your CRM Without a Browser

Dev.to

RAG Systems in Production: Building Enterprise Knowledge Search

Dev.to

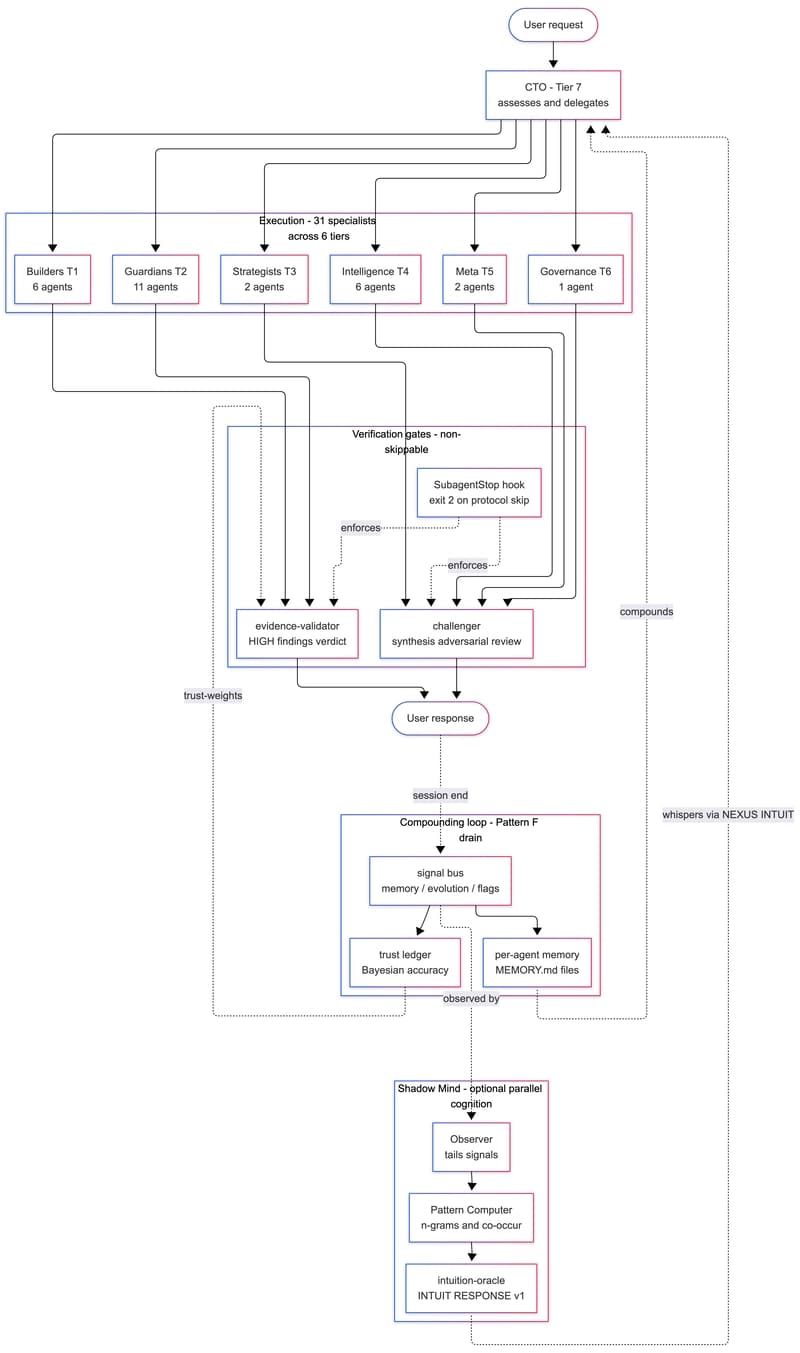

We Built a 31-Agent AI Team That Hires Itself, Critiques Itself, and Dreams

Dev.to

gpt-image-2 API: ship 2K AI images in Next.js for $0.21 (2026)

Dev.to