Quantifying Multimodal Capabilities: Formal Generalization Guarantees in Pairwise Metric Learning

arXiv cs.LG / 5/5/2026

Key Points

- The paper presents a fine-grained theoretical study of generalization in multimodal metric learning, focusing on how missing or redundant modalities, both common in real-world settings, affect performance.

- It establishes hierarchical relationships among the function classes induced by different modality subsets and quantifies the discrepancy between the learned mappings and the ground truth.

- The authors analyze pairwise complexity to derive new generalization error bounds, which show how the number of modalities and their granularity jointly influence model performance.

- The results include matching upper and lower bounds, indicating that using more fine-grained modality features can reduce hypothesis-space complexity by improving modality complementarity.

- The work connects theory to practice by drawing implications for faster convergence rates and higher accuracy in multimodal learning systems.
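To make "pairwise" concrete, here is a minimal sketch of a generic empirical pairwise risk, in which the loss is averaged over all n(n-1)/2 unordered pairs of samples rather than over individual samples. The summary does not state the paper's loss, metric, or bound, so everything here is an assumption for illustration: a fixed squared Euclidean distance stands in for a learned metric, a contrastive-style hinge stands in for the paper's loss, and the function names (`embed`, `empirical_pairwise_risk`, etc.) and toy data are hypothetical. The `modalities` argument shows how restricting to a subset of modalities changes what the metric can see.

```python
from itertools import combinations


def embed(sample, modalities):
    """Concatenate the feature vectors of the chosen modality subset (hypothetical helper)."""
    vec = []
    for m in modalities:
        vec.extend(sample[m])
    return vec


def sq_dist(x, y):
    """Squared Euclidean distance; an assumed stand-in for a learned metric."""
    return sum((a - b) ** 2 for a, b in zip(x, y))


def contrastive_loss(d, same_class, margin):
    """Assumed loss: pull same-class pairs together, push different-class pairs past the margin."""
    return d if same_class else max(0.0, margin - d)


def empirical_pairwise_risk(samples, labels, modalities, margin=4.0):
    """Average the loss over all n(n-1)/2 unordered pairs (a U-statistic)."""
    pairs = list(combinations(range(len(samples)), 2))
    total = 0.0
    for i, j in pairs:
        d = sq_dist(embed(samples[i], modalities), embed(samples[j], modalities))
        total += contrastive_loss(d, labels[i] == labels[j], margin)
    return total / len(pairs)


# Toy data (illustrative only): two "modalities" per sample.
samples = [
    {"image": [0.0, 0.0], "text": [1.0]},
    {"image": [0.0, 1.0], "text": [1.0]},
    {"image": [3.0, 0.0], "text": [0.0]},
]
labels = [0, 0, 1]

risk_both = empirical_pairwise_risk(samples, labels, ["image", "text"])
risk_text = empirical_pairwise_risk(samples, labels, ["text"])
print(risk_both, risk_text)  # 0.3333333333333333 2.0
```

In this toy example, dropping the image modality raises the pairwise risk from 1/3 to 2.0, because the text feature alone cannot push the different-class pairs past the margin. Note also that each sample appears in n-1 pairs, so the summands are not independent; this dependence is one reason pairwise settings call for their own complexity analysis rather than standard i.i.d. arguments.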

Related Articles

The 55.6% problem: why frontier LLMs fail at embedded code

Dev.to

Four CVEs in a week, all the same shape: when agents execute LLM-generated code

Dev.to

Healthcare AI Is Absorbing Institutional Knowledge It Can't Actually Hold

Reddit r/artificial

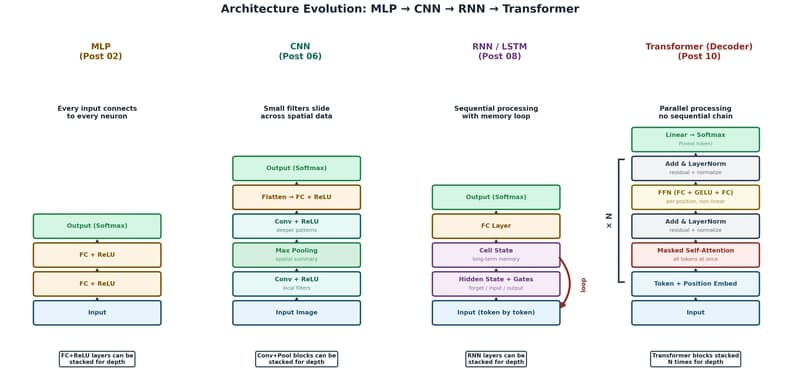

The Transformer: The Architecture Behind Modern AI

Dev.to

Foundational Models Defining a New Era in Vision: A Survey and Outlook

Dev.to