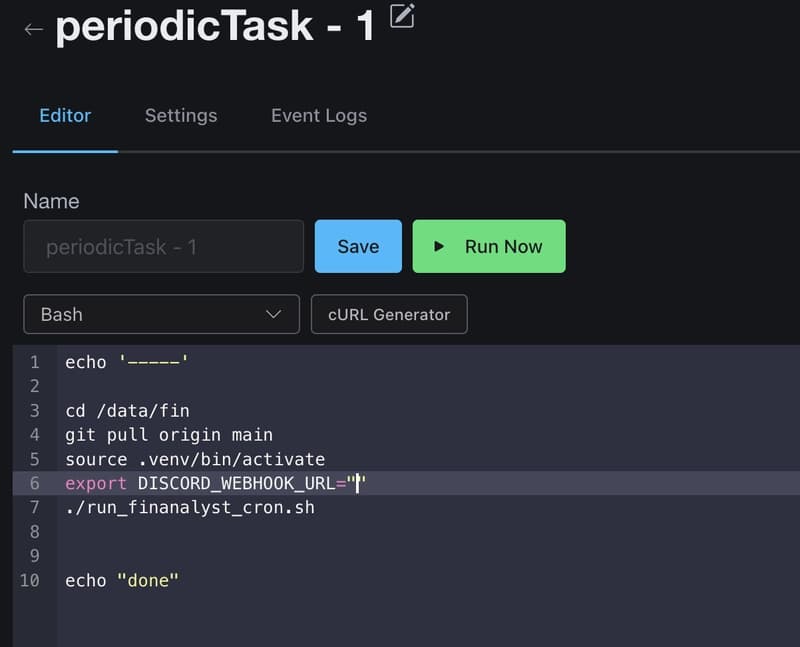

I’ve spent more than two years building an agentic AI platform, working daily with GPT, Claude, and, more recently, Gemini models in real-world production code. They’re powerful, but if you watch closely, you’ll see something unsettling.

They don’t just write bad code.

They write our code.

And that should worry you.

This is what I realized looking into the mirror we trained.
