Beyond Overlap Metrics: Rewarding Reasoning and Preferences for Faithful Multi-Role Dialogue Summarization

arXiv cs.CL / 4/21/2026

Key Points

- The paper argues that current multi-role dialogue summarization methods over-optimize surface similarity metrics (e.g., ROUGE/BERTScore) instead of improving faithfulness and alignment with human preferences.

- It introduces a reasoning-aware framework that distills step-by-step “cognitive-style” reasoning traces from a large teacher model, then uses staged supervised fine-tuning to initialize a summarizer.

- The approach further applies GRPO with a dual-principle reward that combines metric-based signals with human-aligned criteria covering information coverage, implicit inference, factual faithfulness, and conciseness.

- Experiments on multilingual benchmarks show ROUGE/BERTScore results comparable to strong baselines, with larger gains in factual faithfulness and preference alignment (notably on SAMSum) and stable semantic consistency on CSDS.

- The authors provide checkpoints and datasets via a Hugging Face collection, enabling replication and further research.
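
To make the GRPO step in the key points more concrete, here is a minimal sketch of how a dual-principle reward could feed group-relative advantages. It is an illustration under stated assumptions, not the paper's implementation: the `Scores` fields, the equal averaging of the four human-aligned criteria, the `metric_weight` default, and the assumption that every score lies in [0, 1] are all hypothetical, since this summary does not give the actual reward formula. The advantage computation uses the commonly described GRPO normalization (reward minus the group mean, divided by the group standard deviation).

```python
from dataclasses import dataclass
from statistics import mean, pstdev


@dataclass
class Scores:
    """Hypothetical per-summary scores, each assumed to lie in [0, 1]."""
    metric: float        # overlap-style signal, e.g. a ROUGE/BERTScore value
    coverage: float      # information coverage
    inference: float     # implicit inference
    faithfulness: float  # factual faithfulness
    conciseness: float   # conciseness


def dual_reward(s: Scores, metric_weight: float = 0.5) -> float:
    """Blend the metric signal with the mean of the four preference criteria.

    The equal weighting of criteria and the default metric_weight are
    assumptions made for illustration only.
    """
    preference = mean([s.coverage, s.inference, s.faithfulness, s.conciseness])
    return metric_weight * s.metric + (1.0 - metric_weight) * preference


def group_relative_advantages(rewards: list[float], eps: float = 1e-8) -> list[float]:
    """Normalize rewards within one group of summaries sampled for the same dialogue.

    Uses the population standard deviation; eps avoids division by zero
    when every sample in the group receives the same reward.
    """
    mu = mean(rewards)
    sigma = pstdev(rewards)
    return [(r - mu) / (sigma + eps) for r in rewards]


if __name__ == "__main__":
    # Two candidate summaries with the same overlap score but different faithfulness.
    group = [
        Scores(metric=0.4, coverage=0.8, inference=0.6, faithfulness=0.9, conciseness=0.7),
        Scores(metric=0.4, coverage=0.8, inference=0.6, faithfulness=0.3, conciseness=0.7),
    ]
    rewards = [dual_reward(s) for s in group]
    print(group_relative_advantages(rewards))
```

In this sketch the two candidates are indistinguishable to the overlap metric, so only the preference term separates them; the more faithful summary ends up with the positive advantage, which is the behavior the dual-principle reward is described as encouraging.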

Related Articles

Black Hat USA

AI Business

Capsule Security Emerges From Stealth With $7 Million in Funding

Dev.to

Agent Package Manager (APM): A DevOps Guide to Reproducible AI Agents

Dev.to

3 Things I Learned Benchmarking Claude, GPT-4o, and Gemini on Real Dev Work

Dev.to

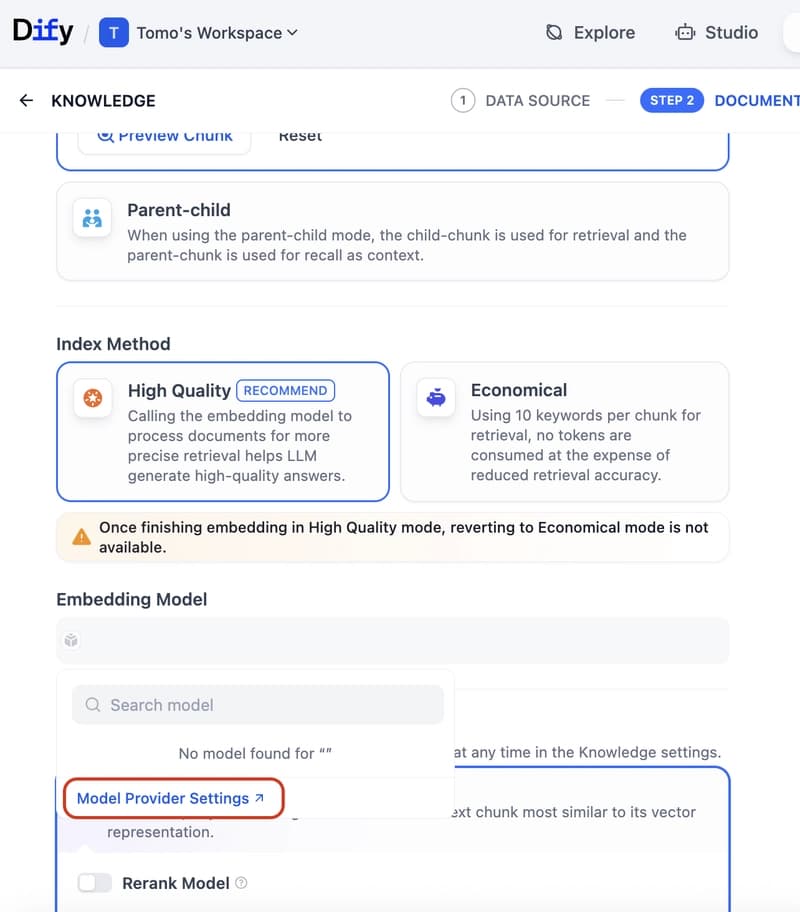

Dify Now Supports IRIS as a Vector Store — Setup Guide

Dev.to