Remask, Don't Replace: Token-to-Mask Refinement in Masked Diffusion Language Models

arXiv cs.CL / 4/22/2026

📰 NewsModels & Research

Key Points

- The paper analyzes masked diffusion language models that use Token-to-Token (T2T) editing to overwrite confidently wrong tokens, and identifies three structural failure modes in this rule.

- It proposes Token-to-Mask (T2M) remasking, which resets a suspect position back to the mask state so the model can re-predict it during the next denoising step using an in-distribution context.

- T2M is training-free, changes only the editing procedure, adds no new parameters, and includes three detection heuristics to decide when to trigger remasking.

- Experiments across eight benchmarks show T2M improves exact token-level accuracy, with its biggest gain being +5.92 points on CMATH by repairing a substantial share of “last-mile” corrupted final answers.

- The authors also provide a theoretical rationale for why a mask conditioning signal is more effective than conditioning on an erroneous committed token.

Related Articles

No Free Lunch Theorem — Deep Dive + Problem: Reverse Bits

Dev.to

Salesforce Headless 360: Run Your CRM Without a Browser

Dev.to

RAG Systems in Production: Building Enterprise Knowledge Search

Dev.to

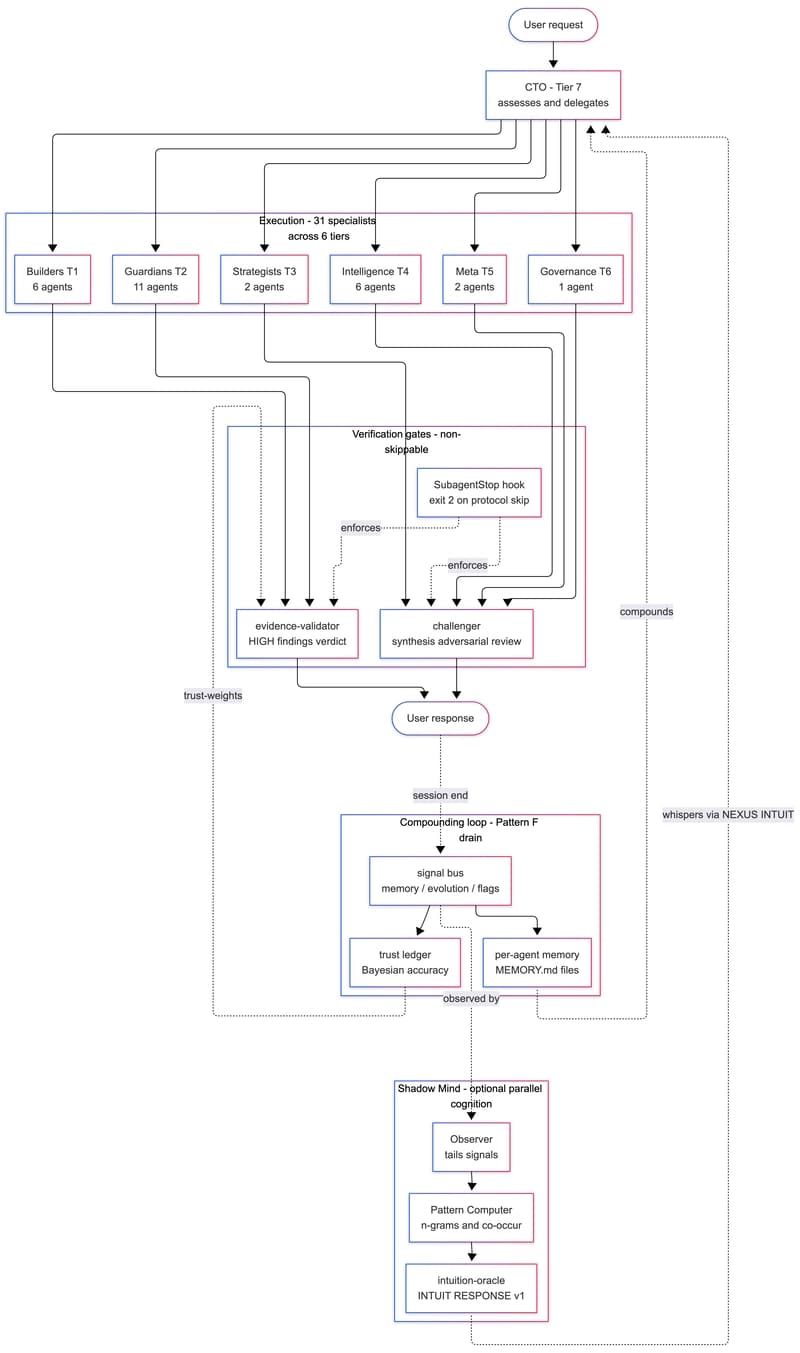

We Built a 31-Agent AI Team That Hires Itself, Critiques Itself, and Dreams

Dev.to

gpt-image-2 API: ship 2K AI images in Next.js for $0.21 (2026)

Dev.to