GRAVITY: Architecture-Agnostic Structured Anchoring for Long-Horizon Conversational Memory

arXiv cs.CL / 5/5/2026


Key Points

- GRAVITY is a plug-and-play structured memory module for long-horizon conversational agents, designed to add relational, temporal, and thematic structure to retrieved context.

- It derives three representations from raw dialogue: entity profiles using relational graphs, temporal event tuples organized into causal traces, and cross-session topic summaries.

- During generation, GRAVITY injects these representations into the host model’s prompt as structured “anchoring” contexts without requiring any changes to the host model architecture.

- Experiments on LongMemEval and LoCoMo using five different memory systems show consistent improvements, with an average 7.5–10.1% gain in LLM-judge accuracy.

- Gains are largest for weaker baselines (about 12.2% for the weakest host) and smaller but still positive for strong baselines (3.8–5.7%), suggesting the module helps across a range of host systems.
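
To make the three representations and the prompt-injection step more concrete, here is a minimal Python sketch. It is an illustration under stated assumptions, not the paper's implementation: the class names (`EntityProfile`, `TemporalEvent`, `TopicSummary`), the helper functions, and the bracketed section labels in the rendered prompt are all hypothetical, and the summary above does not say how GRAVITY extracts these structures from raw dialogue, so the sketch starts from already-extracted data.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Tuple


@dataclass
class EntityProfile:
    """Hypothetical entity profile: a name plus (relation, target) graph edges."""
    name: str
    relations: List[Tuple[str, str]] = field(default_factory=list)


@dataclass
class TemporalEvent:
    """Hypothetical event tuple; `cause` is the id of an earlier event, if any."""
    event_id: str
    timestamp: str  # ISO date string, so lexical order matches time order
    description: str
    cause: Optional[str] = None


@dataclass
class TopicSummary:
    """Hypothetical cross-session summary for a single topic."""
    topic: str
    sessions: List[int]
    summary: str


def causal_trace(events, last_id):
    """Follow `cause` links backwards from `last_id`; return events oldest-first."""
    by_id = {e.event_id: e for e in events}
    trace, seen = [], set()
    current = by_id.get(last_id)
    while current is not None and current.event_id not in seen:
        seen.add(current.event_id)  # guard against accidental cycles
        trace.append(current)
        current = by_id.get(current.cause) if current.cause else None
    return list(reversed(trace))


def build_anchor(entities, events, topics):
    """Render the three representations as one structured text block."""
    lines = ["[ENTITIES]"]
    for ent in entities:
        for rel, target in ent.relations:
            lines.append(f"{ent.name} --{rel}--> {target}")
    lines.append("[EVENTS]")
    for ev in sorted(events, key=lambda e: e.timestamp):
        cause = f" (caused by {ev.cause})" if ev.cause else ""
        lines.append(f"{ev.timestamp} {ev.event_id}: {ev.description}{cause}")
    lines.append("[TOPICS]")
    for t in topics:
        sess = ", ".join(str(s) for s in t.sessions)
        lines.append(f"{t.topic} (sessions {sess}): {t.summary}")
    return "\n".join(lines)


def inject(anchor, retrieved_context, question):
    """Prepend the anchor to the host system's own retrieved context.

    Only the prompt text changes; the host model itself is untouched.
    """
    return f"{anchor}\n\n[RETRIEVED]\n{retrieved_context}\n\n[QUESTION]\n{question}"
```

Because `inject` only concatenates text, the same anchor could in principle be placed in front of any memory system's retrieved context, which is the sense in which the summary calls the approach architecture-agnostic.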

Related Articles

Singapore's Fraud Frontier: Why AI Scam Detection Demands Regulatory Precision

Dev.to

From OOM to 262K Context: Running Qwen3-Coder 30B Locally on 8GB VRAM

Dev.to

Nano Banana Pro vs DALL-E 3 vs Midjourney: A Practical Comparison From Someone Who Actually Uses All Three

Dev.to

LLMs edited 86 human essays toward a semantic cluster not occupied by any human writer [D]

Reddit r/MachineLearning

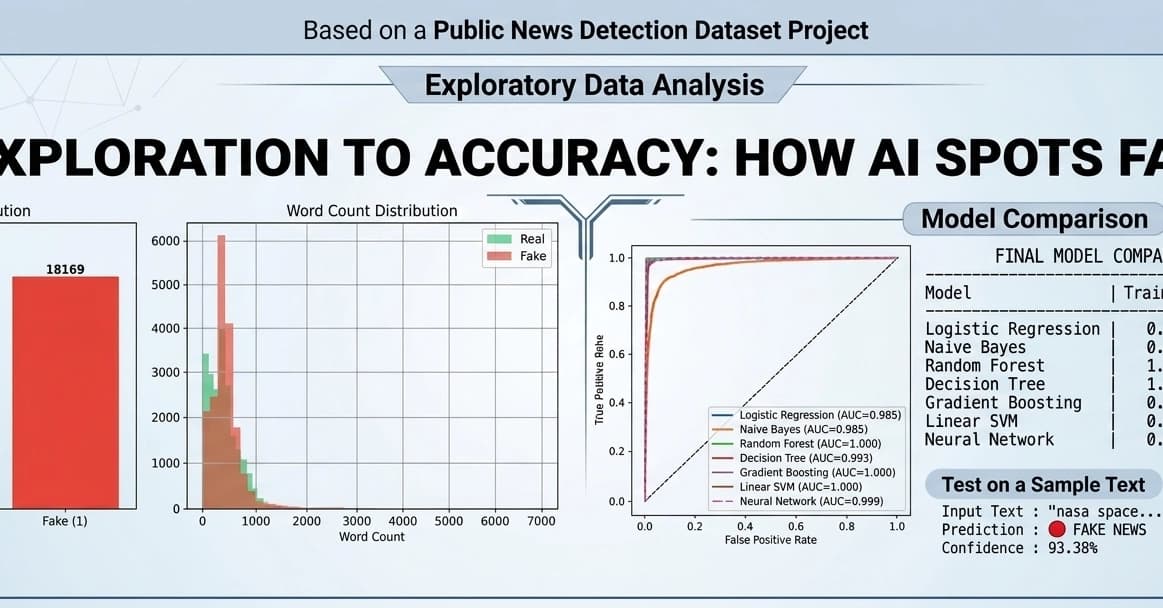

Fake News Detection using Machine Learning & NLP!

Dev.to