Saw the Qwen3-TTS thread this morning and it finally pushed me to write this up.

Background: I've been building a local voice assistant for a client over the past 3 weeks. Voice-first interface on top of a RAG backend -- the use case is an AI assistant where they need responses that feel conversational, not a typing test where you wait for the cursor to stop.

TTS was the weak link. Tried Kokoro first, which is solid for narration but gets flat on short phrases like "got it" or "sure, one sec" -- the kind of back-and-forth that dominates voice interfaces. XTTS-v2 was more expressive, but cold-start latency was sometimes 4-6 seconds depending on GPU state, which kills the flow.

Swapped in Qwen3-TTS this past week and the difference is real. Expressiveness on question intonation improved noticeably. Proper nouns and acronyms are still a bit inconsistent, but for general conversation it doesn't feel robotic anymore -- first local TTS model where I've been able to just leave it running without the urge to swap something.

On the LLM side: [Qwen3.6-35B-A3B](https://huggingface.co/Qwen/Qwen3.6-35B-A3B). The thinking preservation across turns is what makes it actually work for voice sessions. Previous reasoning carries forward so multi-turn context compounds instead of resetting every time. Matters a lot when users reference something from 7 exchanges ago.

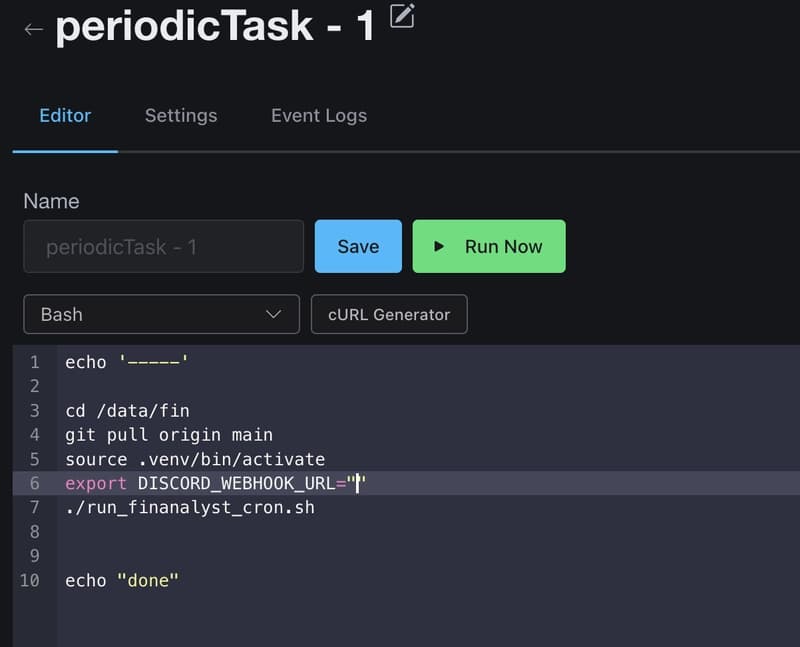

Full pipeline is Whisper -> Qwen3.6 -> Qwen3-TTS. Round trip latency is workable. Not instant, but it doesn't feel like a broken pause mid-sentence.
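
For anyone wanting to see where the round trip time goes, here's a rough sketch of the three-stage shape with per-stage timing. This is not the actual client code: `VoicePipeline`, the stage names, and the `fake_*` functions are all made up for illustration, and the stubs just pass text through so the thing runs without any models loaded. Real Whisper, LLM, and TTS wrappers would go where the stubs are.

```python
import time
from dataclasses import dataclass, field
from typing import Callable, Dict


@dataclass
class VoicePipeline:
    # Each stage is a plain callable so real models can be swapped in later.
    transcribe: Callable[[bytes], str]   # hypothetical speech-to-text wrapper
    generate: Callable[[str], str]       # hypothetical LLM client call
    synthesize: Callable[[str], bytes]   # hypothetical TTS wrapper
    timings: Dict[str, float] = field(default_factory=dict)

    def _timed(self, name, fn, arg):
        # Record wall-clock seconds spent in one stage.
        start = time.perf_counter()
        result = fn(arg)
        self.timings[name] = time.perf_counter() - start
        return result

    def run(self, audio_in: bytes) -> bytes:
        text = self._timed("stt", self.transcribe, audio_in)
        reply = self._timed("llm", self.generate, text)
        audio_out = self._timed("tts", self.synthesize, reply)
        self.timings["total"] = sum(self.timings[k] for k in ("stt", "llm", "tts"))
        return audio_out


# Stub stages (assumptions): they only shuffle text around.
def fake_stt(audio: bytes) -> str:
    return audio.decode("utf-8")


def fake_llm(prompt: str) -> str:
    return f"You said: {prompt}"


def fake_tts(text: str) -> bytes:
    return text.encode("utf-8")


pipeline = VoicePipeline(fake_stt, fake_llm, fake_tts)
audio = pipeline.run(b"hello")
print(audio, pipeline.timings)
```

Keeping the stages as plain callables means one model can be swapped out (say, a different TTS) without touching the timing logic, which makes before/after latency comparisons less of a guess.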

One thing still unsolved: tool calls inside the voice loop. When the user asks something that needs a retrieval step, there's a gap before TTS can start. Haven't found a clean way to stream partial response text before the tool result comes back. If anyone's gotten that working, genuinely curious how.
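
To make the gap concrete, here's one direction that might be worth trying, as an untested sketch and not a claim that it solves the problem: start the retrieval as a background task, hand TTS a short filler phrase right away, then speak the real answer once the tool result lands. Everything here is an assumption for illustration: `speak_with_filler`, `fake_retrieval`, the filler text, and the idea that the caller already knows whether a tool call is needed. It also doesn't stream partial LLM text, it only covers the silence.

```python
import asyncio
from typing import AsyncIterator, Awaitable, Callable


async def speak_with_filler(
    needs_tool: bool,
    run_tool: Callable[[], Awaitable[str]],
    answer_from: Callable[[str], str],
    filler: str = "One sec, let me check.",
) -> AsyncIterator[str]:
    """Yield text chunks in the order they should be handed to TTS."""
    if not needs_tool:
        yield answer_from("")
        return
    # Kick off retrieval first so it can run while the filler is spoken.
    tool_task = asyncio.create_task(run_tool())
    yield filler
    result = await tool_task
    yield answer_from(result)


async def fake_retrieval() -> str:
    await asyncio.sleep(0.05)  # stands in for a RAG lookup (assumption)
    return "the invoice was sent on Monday"


async def demo() -> list:
    spoken = []
    async for chunk in speak_with_filler(
        True, fake_retrieval, lambda r: f"Found it: {r}."
    ):
        spoken.append(chunk)  # a real loop would pass each chunk to TTS here
    return spoken


spoken_chunks = asyncio.run(demo())
print(spoken_chunks)
```

The retrieval only makes progress while the consumer is awaiting something else, so in a real loop the TTS playback of the filler would need to be async for the overlap to actually happen.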
