Jailbreaking Large Language Models with Morality Attacks

arXiv cs.CL / 4/21/2026


Key Points

- The paper uses jailbreak attacks to probe how LLMs internalize and apply pluralistic moral values.

- It constructs a morality dataset (10.3K instances) covering two challenge types: value ambiguity and value conflict.

- The authors formalize four adversarial attack methods to manipulate LLMs’ judgments on morality-related questions.

- Experiments on both base LLMs and the “guardrail” models used in generative systems reveal a critical vulnerability to these morality-aware attacks.

Related Articles

The 2026 Forbes AI 50 List

Reddit r/artificial

Add cryptographic authorization to AI agents in 5 minutes

Dev.to

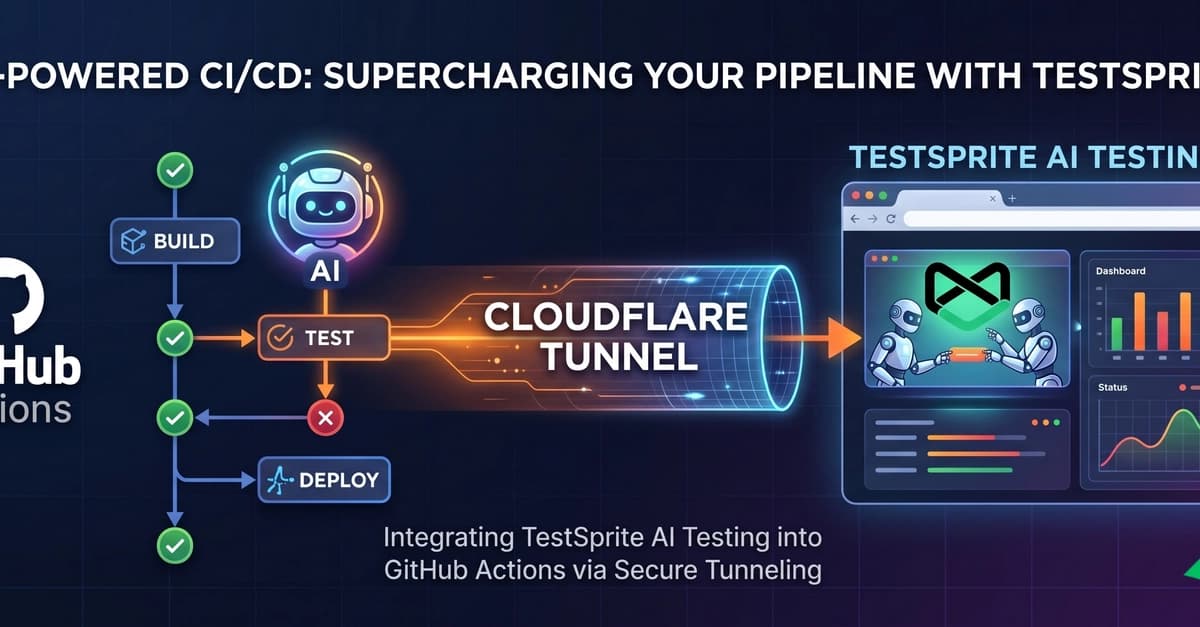

Supercharging Your CI/CD: Integrating TestSprite AI Testing with GitHub Actions

Dev.to

Claude and I aren't vibing at all

Dev.to

The ULTIMATE Guide to AI Voice Cloning: RVC WebUI (Zero to Hero)

Dev.to