EmbodiedLGR: Integrating Lightweight Graph Representation and Retrieval for Semantic-Spatial Memory in Robotic Agents

arXiv cs.RO / 4/21/2026


Key Points

- The paper proposes EmbodiedLGR-Agent, a vision-language model (VLM)-driven robotic agent that builds and retrieves semantic-spatial memory more efficiently than prior approaches.

- It uses a hybrid memory strategy: parameter-efficient VLMs store low-level object and position information in a semantic graph, while traditional retrieval-augmented components preserve higher-level scene descriptions.

- Experiments on the NaVQA dataset show state-of-the-art inference and querying times for embodied agents, while overall task accuracy remains competitive with current approaches.

- The system was also deployed on a physical robot, with the VLM and retrieval pipeline running locally, demonstrating practical value for human-robot interaction.
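
The hybrid memory idea in the key points can be illustrated with a minimal sketch. This is not the paper's implementation: the class names, the `link_radius` threshold, and the bag-of-words cosine scoring are all assumptions made for illustration, and the entries are written by hand where the paper uses VLMs to populate memory. It only shows the split between a graph of objects with positions and a separate retrievable store of scene descriptions.

```python
import math
from collections import Counter
from dataclasses import dataclass, field


@dataclass
class ObjectNode:
    label: str
    position: tuple  # (x, y, z) in a hypothetical map frame


@dataclass
class HybridMemory:
    """Toy semantic-spatial memory: object graph plus scene-text store."""
    objects: dict = field(default_factory=dict)  # node id -> ObjectNode
    near: dict = field(default_factory=dict)     # node id -> ids of nearby nodes
    scenes: list = field(default_factory=list)   # free-text scene descriptions

    def add_object(self, node_id, label, position, link_radius=1.5):
        # Assumption: link objects whose Euclidean distance is within link_radius.
        self.near[node_id] = set()
        for other_id, other in self.objects.items():
            if math.dist(position, other.position) <= link_radius:
                self.near[node_id].add(other_id)
                self.near[other_id].add(node_id)
        self.objects[node_id] = ObjectNode(label, position)

    def add_scene(self, description):
        self.scenes.append(description)

    def locate(self, label):
        # Low-level query: positions of every stored object with this label.
        return [n.position for n in self.objects.values() if n.label == label]

    def neighbours(self, node_id):
        # Labels of objects linked to node_id in the graph.
        return sorted(self.objects[i].label for i in self.near[node_id])

    def retrieve_scene(self, query):
        # High-level query: bag-of-words cosine similarity, a simple
        # stand-in for a learned text retriever.
        q = Counter(query.lower().split())

        def score(text):
            d = Counter(text.lower().split())
            dot = sum(q[w] * d[w] for w in q)
            norm = math.sqrt(sum(v * v for v in q.values())) * math.sqrt(
                sum(v * v for v in d.values())
            )
            return dot / norm if norm else 0.0

        return max(self.scenes, key=score, default=None)


memory = HybridMemory()
memory.add_object("mug_1", "mug", (1.0, 2.0, 0.8))
memory.add_object("table_1", "table", (1.2, 2.1, 0.4))
memory.add_object("sofa_1", "sofa", (6.0, 0.5, 0.4))
memory.add_scene("a kitchen with a table and a mug on it")
memory.add_scene("a living room with a sofa facing the window")
```

In this sketch a question such as "where is the mug" would be answered from the graph via `memory.locate("mug")`, while a broader question about a room would go through `memory.retrieve_scene(...)`. How the actual agent routes queries between the two stores is not described in this summary.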

Related Articles

Add cryptographic authorization to AI agents in 5 minutes

Dev.to

Building a website with Replit and Vercel

Dev.to

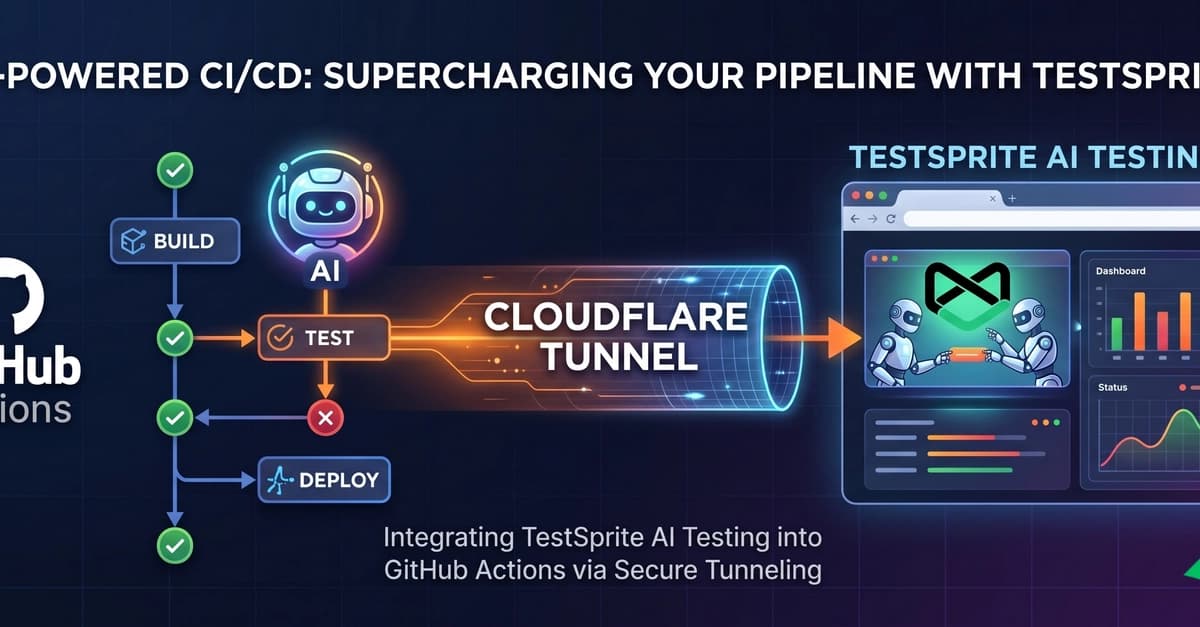

Supercharging Your CI/CD: Integrating TestSprite AI Testing with GitHub Actions

Dev.to

The ULTIMATE Guide to AI Voice Cloning: RVC WebUI (Zero to Hero)

Dev.to

Kiwi-chan Devlog #007: The Audit Never Sleeps (and Neither Does My GPU)

Dev.to