![Zero-shot World Models Are Developmentally Efficient Learners [R]](https://preview.redd.it/px240r8jkuvg1.png?width=640&crop=smart&auto=webp&s=170ec87b0674ce319a19041e12e9b8cd8ff31953)

Today's best AI needs orders of magnitude more data than a human child to achieve visual competence. The paper introduces the Zero-shot World Model (ZWM), an approach that substantially narrows this gap. Even when trained on a single child's visual experience, BabyZWM matches state-of-the-art models on diverse visual-cognitive tasks, with no task-specific training, i.e., zero-shot. The work presents a blueprint for efficient and flexible learning from human-scale data, advancing a path toward data-efficient AI systems.

- Full Twitter post: https://x.com/khai_loong_aw/status/2044051456672838122?s=20
- HuggingFace: https://huggingface.co/papers/2604.10333
- GitHub: https://github.com/awwkl/ZWM

Zero-shot World Models Are Developmentally Efficient Learners [R]

Reddit r/MachineLearning / 4/18/2026

💬 Opinion, Ideas & Deep Analysis, Models & Research

Key Points

- The paper proposes a Zero-shot World Model (ZWM) aimed at reducing the massive data gap between current AI systems and human visual learning.

- BabyZWM is trained on the visual experience from a single child and is evaluated across multiple visual-cognitive tasks without any task-specific training (zero-shot).

- The authors report that BabyZWM can match state-of-the-art models on diverse tasks despite using human-scale, limited training data.

- The work outlines a blueprint for building data-efficient and flexible AI systems, supporting a path toward learning from fewer examples.

- The post links to the full Twitter thread, the Hugging Face paper page, and the accompanying GitHub repository for further exploration and implementation details.

Related Articles

The myth of Claude Mythos crumbles as small open models hunt the same cybersecurity bugs Anthropic showcased

THE DECODER

Claude Opus 4.7 vs 4.6: What Actually Changed and What Breaks on Migration

Dev.to

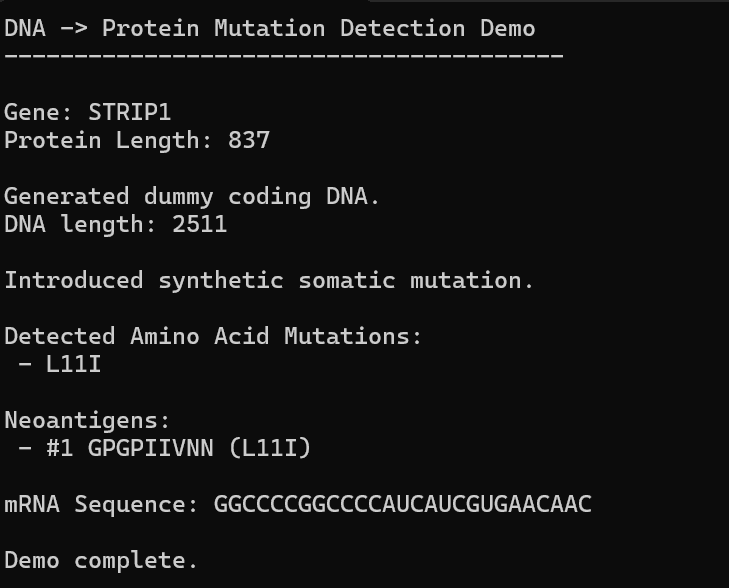

AI, Hope, and Healing: Can We Build Our Own Personalized mRNA Cancer Vaccine Pipeline?

Dev.to

The Hotel AI Visibility Crisis: Why AI Cites Review Sites More Than Your Own Website

Dev.to

Automate Your Literature Review: Build a Custom AI Pipeline

Dev.to