From Exposure to Internalization: Dual-Stream Calibration for In-context Clinical Reasoning

arXiv cs.AI / 4/10/2026


Key Points

- The paper argues that existing in-context learning and RAG approaches often expose models to clinical knowledge without achieving true “contextual internalization”, that is, adjusting the model's internal representations to each case at inference time.

- It introduces Dual-Stream Calibration (DSC), a test-time training framework with two coordinated calibration streams: a semantic stream that stabilizes generation by minimizing entropy over key evidence and a structural stream that learns latent inferential dependencies via iterative meta-learning.

- DSC trains on specialized support sets during inference to better align external clinical evidence with the model’s internal logic, moving beyond passive attention-based matching toward active refinement of the latent reasoning space.

- Experiments on thirteen clinical datasets spanning three task paradigms show DSC outperforming multiple baselines, which include both training-dependent models and other test-time learning methods.

- Overall, the work presents a reasoning-focused calibration method aimed at improving robustness and coherence of LLM-based clinical reasoning under heterogeneous real-world records.
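
The entropy-minimization idea behind the semantic stream can be illustrated with a toy sketch. This is not the paper's implementation: it assumes the per-token logits can be treated as free parameters (a real test-time training setup would update model weights instead), and the names `minimize_entropy` and `evidence_mask` are hypothetical. The sketch takes gradient steps that lower the predictive entropy only at positions flagged as key evidence and leaves the other positions untouched.

```python
import math

def softmax(logits):
    # Numerically stable softmax over one token position.
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def entropy(logits):
    # Shannon entropy (in nats) of the softmax distribution.
    return -sum(p * math.log(p) for p in softmax(logits) if p > 0.0)

def entropy_grad(logits):
    # Analytic gradient of the entropy H with respect to the logits:
    # dH/dz_j = -p_j * (log p_j + H)
    probs = softmax(logits)
    h = -sum(p * math.log(p) for p in probs if p > 0.0)
    return [-p * (math.log(p) + h) if p > 0.0 else 0.0 for p in probs]

def minimize_entropy(positions, evidence_mask, lr=0.1, steps=100):
    # Hypothetical helper: gradient descent on entropy, applied only to
    # positions marked as key evidence. Assumption: logits stand in for
    # the parameters a real method would adapt.
    calibrated = []
    for logits, is_evidence in zip(positions, evidence_mask):
        z = list(logits)
        if is_evidence:
            for _ in range(steps):
                grad = entropy_grad(z)
                z = [zi - lr * gi for zi, gi in zip(z, grad)]
        calibrated.append(z)
    return calibrated

# Two token positions over a three-word vocabulary; only the first is
# treated as key evidence.
positions = [[2.0, 1.0, 0.5], [0.3, 0.2, 0.1]]
evidence_mask = [True, False]
calibrated = minimize_entropy(positions, evidence_mask)
```

After the call, the distribution at the evidence position is sharper (lower entropy) while its most likely token is unchanged, and the non-evidence position keeps its original logits. Restricting the update to a mask is a design choice made here for illustration; how the paper selects and weights key evidence is not specified in this summary.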

Related Articles

Black Hat Asia

AI Business

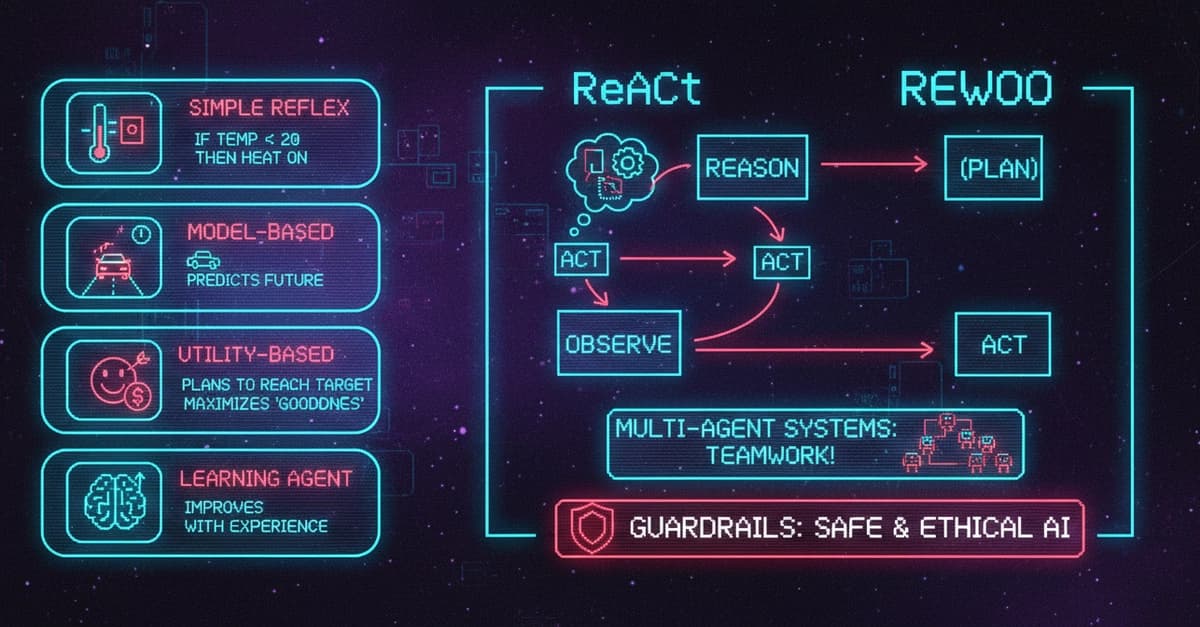

AI Agents Explained: 5 Types, Components, Frameworks, and Real-World Use Cases

Dev.to

Edge-to-Cloud Swarm Coordination for circular manufacturing supply chains with embodied agent feedback loops

Dev.to

Why QIS Is Not a Sync Problem: The Mailbox Model for Distributed Intelligence

Dev.to

The Ethics of AI: A Developer's Responsibility

Dev.to