Do LLMs Know What They Know? Measuring Metacognitive Efficiency with Signal Detection Theory

arXiv cs.CL / 3/27/2026

💬 OpinionSignals & Early TrendsIdeas & Deep AnalysisModels & Research

Key Points

- The paper argues that common LLM confidence calibration metrics (e.g., ECE, Brier score) mix two abilities—Type-1 sensitivity (how much the model knows) and Type-2 metacognitive sensitivity (how well it knows what it knows).

- It proposes an evaluation framework using Type-2 Signal Detection Theory, introducing meta-d' and an M-ratio to separately measure metacognitive capacity and metacognitive efficiency.

- Experiments on four LLMs across 224,000 factual QA trials show large differences in metacognitive efficiency even when Type-1 sensitivity is similar, including cases where a model ranks highest by d' but lowest by M-ratio.

- The study finds metacognitive efficiency is domain-specific and can be shifted by temperature changes, indicating that confidence policy (Type-2 criterion) can move independently of underlying metacognitive capacity for some models.

- It reports that AUROC_2 and M-ratio can produce fully inverted model rankings, suggesting these metrics answer fundamentally different evaluation questions, with implications for model selection and deployment.

Continue reading this article on the original site.

Read original →💡 Insights using this article

This article is featured in our daily AI news digest — key takeaways and action items at a glance.

Related Articles

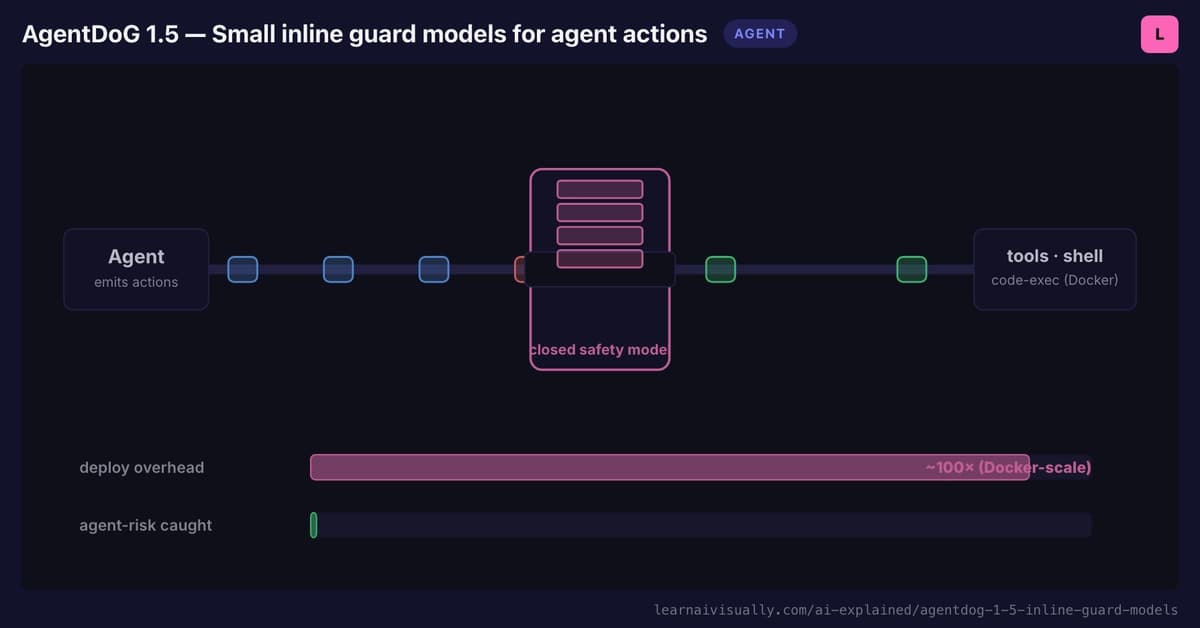

AgentDoG 1.5: Small Inline Guard Models for Agent Actions

Dev.to

Every handle invocation on BizNode gets a WFID — a universal transaction reference for accountability. Full audit trail,...

Dev.to

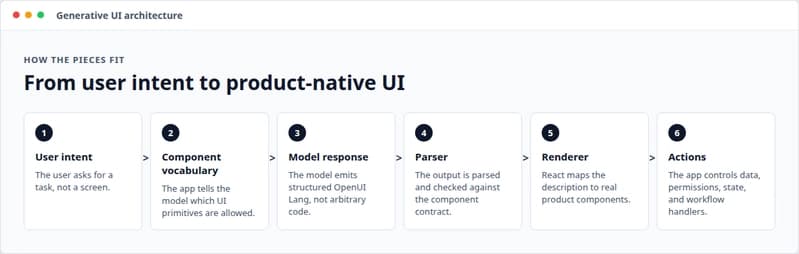

What Is Generative UI? (And Why Text Output Is No Longer Enough)

Dev.to

tried to write a journal entry without AI for the first time in like a year and kinda panicked

Reddit r/artificial

GitLab Just Reorganised Its Entire R&D Into 60 Autonomous AI Teams. Here Is What That Signals.

Dev.to