Affordance-R1: Reinforcement Learning for Generalizable Affordance Reasoning in Multimodal Large Language Model

arXiv cs.RO / 4/28/2026


Key Points

- The paper introduces Affordance-R1, a multimodal reinforcement-learning framework for affordance grounding that predicts action-relevant object regions for robots.

- It argues that prior affordance models struggle with out-of-domain generalization because they lack Chain-of-Thought (CoT)-style reasoning, and it addresses this with a CoT-guided GRPO (Group Relative Policy Optimization) approach.

- The method steers RL optimization with a structured affordance function that combines separate format, perception, and cognition rewards, and the model is trained end-to-end without relying on explicit reasoning data.

- The authors build an affordance-focused training dataset (ReasonAff) and report strong zero-shot generalization, open-world generalization, and emergent test-time reasoning behavior.

- Code and the dataset are released on GitHub, enabling others to reproduce and extend the approach.
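
To make the reward design and the GRPO step in the points above more concrete, here is a minimal sketch of how three reward terms could be summed per response and then normalized within a sampled group. Everything specific in it is an assumption for illustration, not the paper's implementation: the `<think>`/`<answer>` output template, box IoU as the perception score, a precomputed `cognition_score`, equal weighting of the three terms, and the use of the population standard deviation. Only the group-relative normalization (reward minus group mean, divided by group standard deviation) is the standard GRPO advantage.

```python
import re
from statistics import mean, pstdev

# Hypothetical output template; the summary does not specify the real one.
_FORMAT = re.compile(r"^<think>.+?</think>\s*<answer>.+?</answer>$", re.DOTALL)


def format_reward(text: str) -> float:
    """Return 1.0 if the response follows the assumed think/answer template."""
    return 1.0 if _FORMAT.match(text.strip()) else 0.0


def box_iou(a, b) -> float:
    """IoU of two (x1, y1, x2, y2) boxes, a stand-in for a perception reward."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def total_reward(text, pred_box, gt_box, cognition_score: float) -> float:
    """Equal-weight sum of the three terms (the weighting is an assumption).

    cognition_score is a hypothetical precomputed value in [0, 1].
    """
    return format_reward(text) + box_iou(pred_box, gt_box) + cognition_score


def group_relative_advantages(rewards, eps: float = 1e-6):
    """GRPO-style advantages: normalize each reward within its sampled group."""
    mu = mean(rewards)
    sigma = pstdev(rewards)  # population std; some implementations use sample std
    return [(r - mu) / (sigma + eps) for r in rewards]
```

In this sketch, a group of responses sampled for the same image and instruction would each be scored with `total_reward`, and the resulting list passed to `group_relative_advantages`. Responses that score above the group mean get a positive advantage and the rest a negative one, which is what lets GRPO avoid a separate learned value model.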

Related Articles

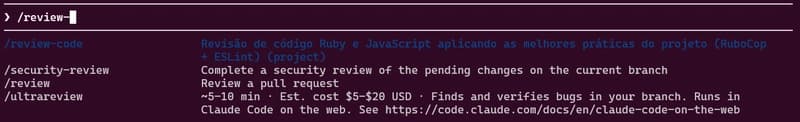

how to use skills from Claude Code A.K.A Claudinho.

Dev.to

Behind the Scenes of a Self-Evolving AI: The Architecture of Tian AI

Dev.to

Meet Tian AI: Your Completely Offline AI Assistant for Android

Dev.to

UK to develop AI hardware plan

Tech.eu

Copilot Cowork | The Control Plane for Long-Running AI Work | A Rahsi Framework™

Dev.to