There have been quite a few case studies recently on agents building whole programs from scratch, but most of them test a single project or just a few, with hand-tuned setups. We've spent the last couple of months formalizing this setting and building a benchmark of 200 tasks while doubling down on testing, cheat prevention, and task diversity.

Our agent ONLY gets a target executable and some readme/usage files. The agent must choose a language, design abstraction layers, and architect the entire program. No internet access or any other way of cheating. No decompilation.

We've also spent some 50k to generate 6M lines of behavioral tests and then filtered them down to keep the best ones. Because the tests treat executables purely as a black box, we make no assumptions even about the language the LM uses to implement the program.

All of the results are at programbench.com. There's also a big FAQ at the bottom.

We've just open-sourced our GitHub repo, Hugging Face assets, and Docker images. Essentially you can just start evaluating with a pip install and a single command. The GitHub repo is at https://github.com/facebookresearch/programbench

Sorry that it's just closed-source models right now. We have a few open-source models in the pipeline, but so far we've had an even harder time getting them to behave well on these tasks (open-source models tend to be somewhat more overfitted to things like SWE-bench, so they often have a harder time with new benchmarks).

We're also planning to open the benchmark for submissions quite soon, similar to what we did on SWE-bench and its variants.

ProgramBench: Can we really rebuild huge binaries from scratch? (doesn't look like it)

Reddit r/LocalLLaMA / 5/6/2026

💬 Opinion · Developer Stack & Infrastructure · Tools & Practical Usage · Models & Research

Key Points

- ProgramBench introduces a benchmark that evaluates agents’ ability to rebuild large programs from scratch using only a target executable and related README/usage files, with strict anti-cheating constraints (no internet, no decompilation).

- The benchmark contains 200 tasks and emphasizes testing rigor, cheat prevention, and task diversity to ensure fair and varied evaluation.

- To support broad assessment without assumptions about implementation details, the project generates about 6 million lines of behavioral tests and reduces them to the strongest ones, treating the executable as a black box.

- The team has open-sourced the benchmark code, Hugging Face assets, and Docker images, and provides an evaluation workflow via a simple pip-based install and command.

- Published results currently cover only closed-source models. The team reports that open-source models have had a harder time behaving well on these tasks, says a few are in the pipeline, and plans to open the benchmark for submissions soon.
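To make the black-box idea concrete, here is a minimal Python sketch of a behavioral comparison between a reference executable and a rebuilt one. It is not ProgramBench's actual harness: the names `Observation`, `observe`, and `failing_cases` are hypothetical, and comparing only stdout and exit code is an assumption made for illustration. The two "executables" are stand-in Python one-liners so the example runs anywhere.

```python
import subprocess
import sys
from dataclasses import dataclass


@dataclass(frozen=True)
class Observation:
    """What a black-box test can see (assumed here): stdout and exit code."""
    stdout: bytes
    exit_code: int


def observe(cmd, args, stdin=b"", timeout=10.0):
    """Run cmd + args once and record its externally visible behavior."""
    proc = subprocess.run(
        [*cmd, *args], input=stdin, capture_output=True, timeout=timeout
    )
    return Observation(proc.stdout, proc.returncode)


def failing_cases(reference_cmd, candidate_cmd, cases):
    """Return the (args, stdin) cases where the two programs disagree."""
    return [
        (args, stdin)
        for args, stdin in cases
        if observe(reference_cmd, args, stdin) != observe(candidate_cmd, args, stdin)
    ]


# Hypothetical stand-ins for real binaries: each uppercases its first argument,
# but with a different implementation. BROKEN forgets to uppercase.
REFERENCE = [sys.executable, "-c", "import sys; print(sys.argv[1].upper())"]
CANDIDATE = [sys.executable, "-c",
             "import sys; print(''.join(map(str.upper, sys.argv[1])))"]
BROKEN = [sys.executable, "-c", "import sys; print(sys.argv[1])"]

CASES = [(["hello"], b""), (["MiXed 123"], b"")]

if __name__ == "__main__":
    print("candidate failures:", failing_cases(REFERENCE, CANDIDATE, CASES))
    print("broken failures:", failing_cases(REFERENCE, BROKEN, CASES))
```

Because the check only looks at observable behavior, nothing in it depends on the language or internal structure of the candidate, which is the property the post describes.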

Related Articles

Black Hat USA

AI Business

Transform Your Blurry Photos into HD Masterpieces, Instantly!

Dev.to

6 New Moats for AI Agent Infrastructure — Trust Score, Deployment, SLA, Identity, Compliance-as-Code

Dev.to

Google Home’s Gemini AI can handle more complicated requests

The Verge

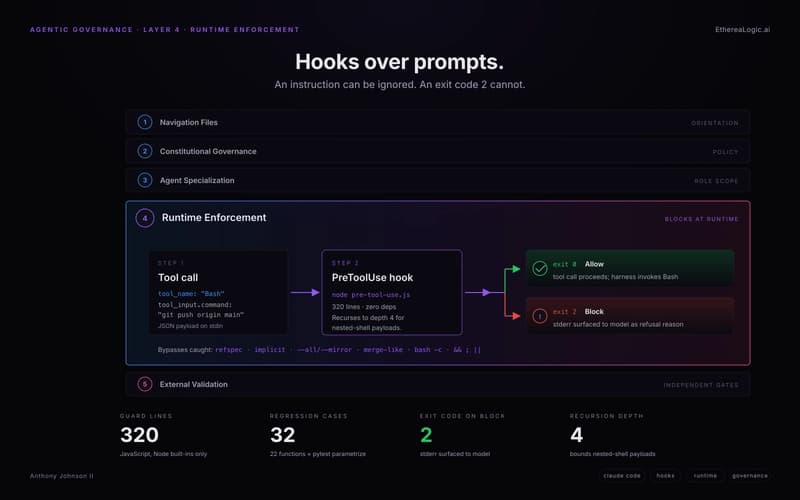

Exit Code 2: How Claude Hooks Turn Agentic Rules Into Runtime Barriers

Dev.to