Technical Analysis: Enhancing Context Recognition in Sensitive Conversations for ChatGPT

Introduction

ChatGPT's ability to understand context in sensitive conversations is crucial for providing accurate and empathetic responses. The model currently relies on patterns learned from its training data to recognize context, which may not be sufficient in sensitive or nuanced conversations. This analysis reviews the technical limitations involved and explores potential solutions.

Current Limitations

- Lack of domain-specific knowledge: ChatGPT's training data may not adequately cover sensitive topics, such as mental health, relationships, or trauma. This lack of domain-specific knowledge can lead to inadequate context recognition.

- Insufficient contextual understanding: The model may struggle to understand the nuances of human conversation, including subtle cues, idioms, and figurative language, which can hinder its ability to recognize context.

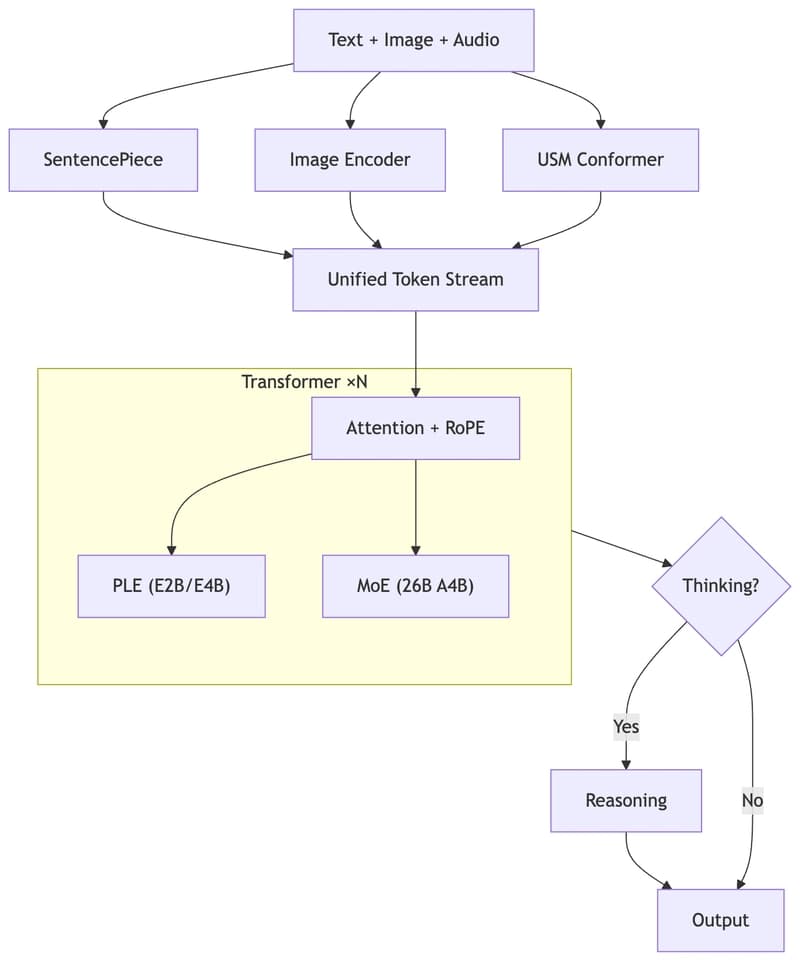

- Overreliance on pattern matching: ChatGPT generates responses from statistical patterns learned during training, which can lead it to oversimplify complex conversations and miss context that the surface wording does not make explicit.

Technical Solutions

- Domain adaptation: Integrate domain-specific knowledge into the model through targeted training datasets, expert feedback, and knowledge graph integration. This may help the model better understand the nuances of sensitive topics.

- Contextualized embedding: As a transformer-based model, ChatGPT already computes contextualized token representations. Encoder models such as BERT or RoBERTa could complement this, for example as auxiliary classifiers fine-tuned to flag subtle cues and nuances in human conversation.

- Graph-based contextual understanding: Implement a graph-based approach to represent conversation context, allowing the model to capture relationships between entities, concepts, and emotions. This can be achieved through graph neural networks (GNNs) or knowledge graph embeddings.

- Multi-task learning: Train the model on multiple tasks simultaneously, such as sentiment analysis, emotion recognition, and intent detection, to improve its ability to recognize context in sensitive conversations.

- Human evaluation and feedback: Integrate human evaluation and feedback mechanisms to identify areas where the model struggles to recognize context and provide targeted improvements.
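As an illustration of the multi-task learning idea above, the following is a minimal sketch of a combined training objective, assuming one classification head per task and a weighted sum of per-task cross-entropy losses. The task names, logits, and weights are hypothetical values chosen for the example; nothing here reflects how ChatGPT is actually trained.

```python
import math


def softmax(logits):
    """Convert raw scores to probabilities (shifted by the max for stability)."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def cross_entropy(logits, target_index):
    """Negative log-probability assigned to the correct class."""
    return -math.log(softmax(logits)[target_index])


def multi_task_loss(task_logits, task_targets, task_weights):
    """Weighted sum of the per-task cross-entropy losses."""
    total = 0.0
    for task, logits in task_logits.items():
        total += task_weights[task] * cross_entropy(logits, task_targets[task])
    return total


# Hypothetical head outputs for a single utterance (assumed, not real model output).
task_logits = {
    "sentiment": [2.0, 0.5, -1.0],
    "emotion": [0.1, 1.2, 0.3, -0.4],
    "intent": [0.0, 0.0],
}
task_targets = {"sentiment": 0, "emotion": 1, "intent": 1}
task_weights = {"sentiment": 1.0, "emotion": 1.0, "intent": 0.5}

loss = multi_task_loss(task_logits, task_targets, task_weights)
print(f"combined loss: {loss:.4f}")
```

In a real system the heads would share an encoder, so gradients from all three tasks would shape the same underlying representation; the weights control how much each task influences it.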

Architecture Modifications

- Hierarchical attention mechanism: Implement a hierarchical attention mechanism to focus on specific aspects of the conversation, such as entities, concepts, or emotions, to improve context recognition.

- Graph-based attention: Utilize graph-based attention mechanisms to capture relationships between entities and concepts in the conversation, enhancing context recognition.

- Memory-augmented architecture: Design a memory-augmented architecture to store and retrieve contextual information, enabling the model to recall relevant information and improve context recognition.
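The memory-augmented idea above can be sketched as a store of earlier conversation turns that is queried by similarity. This is a toy illustration under stated assumptions: the `ConversationMemory` class is hypothetical, and the bag-of-words `embed` function stands in for a learned encoder that a production system would use instead.

```python
import math
from collections import Counter


def embed(text):
    """Toy stand-in for a learned encoder: lowercase bag-of-words counts."""
    return Counter(text.lower().split())


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


class ConversationMemory:
    """Hypothetical external memory: stores turns, retrieves the most similar."""

    def __init__(self):
        self.entries = []  # list of (text, vector) pairs

    def store(self, text):
        self.entries.append((text, embed(text)))

    def retrieve(self, query, k=1):
        q = embed(query)
        ranked = sorted(self.entries, key=lambda e: cosine(q, e[1]), reverse=True)
        return [text for text, _ in ranked[:k]]


memory = ConversationMemory()
memory.store("my father passed away last month")
memory.store("i started a new job in march")
memory.store("the weather has been rainy")

recalled = memory.retrieve("how is the new job going", k=1)
print(recalled)
```

Retrieved turns would then be supplied to the model alongside the current message, so that earlier disclosures stay available even when they have scrolled out of the immediate context.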

Evaluation Metrics

- Context recognition accuracy: Evaluate the model's ability to recognize context in sensitive conversations using metrics such as accuracy, precision, and recall.

- Human evaluation: Conduct human evaluations to assess the model's performance in sensitive conversations, focusing on empathy, understanding, and overall user experience.

- Conversational flow: Evaluate the model's ability to engage in natural-sounding conversations, using human or automated ratings of coherence and fluency across turns.
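To make the context recognition metrics above concrete, here is a minimal sketch that computes accuracy, precision, recall, and F1 for a binary "sensitive context" label. The gold and predicted labels are made-up illustrative data, and the function names are assumptions for this example rather than part of any existing evaluation suite.

```python
def accuracy(gold, predicted):
    """Fraction of examples where the prediction matches the gold label."""
    correct = sum(1 for g, p in zip(gold, predicted) if g == p)
    return correct / len(gold)


def precision_recall_f1(gold, predicted, positive=True):
    """Precision, recall, and F1 for the class treated as positive."""
    pairs = list(zip(gold, predicted))
    tp = sum(1 for g, p in pairs if g == positive and p == positive)
    fp = sum(1 for g, p in pairs if g != positive and p == positive)
    fn = sum(1 for g, p in pairs if g == positive and p != positive)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    if precision + recall == 0:
        return precision, recall, 0.0
    f1 = 2 * precision * recall / (precision + recall)
    return precision, recall, f1


# Illustrative labels: True means the turn was annotated as sensitive context.
gold = [True, True, False, True, False, False]
predicted = [True, False, False, True, True, False]

acc = accuracy(gold, predicted)
precision, recall, f1 = precision_recall_f1(gold, predicted)
print(f"accuracy={acc:.3f} precision={precision:.3f} recall={recall:.3f} f1={f1:.3f}")
```

For sensitive conversations, recall on the positive class is often the number to watch, since a missed sensitive turn is usually costlier than a false alarm.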

Future Directions

- Emotional intelligence: Develop the model's emotional intelligence by incorporating affective computing and social learning techniques to improve its ability to recognize and respond to emotional cues.

- Explainability and transparency: Integrate explainability and transparency techniques to provide insights into the model's decision-making process, enabling users to understand the context recognition process.

- Continuous learning: Design a continuous learning framework that lets the model adapt to evolving language use, cultural norms, and sensitive topics, so that its context recognition capabilities remain up to date and effective.

Omega Hydra Intelligence
