PEANUT: Perturbations by Eigenvalue Alignment for Attacking GNNs Under Topology-Driven Message Passing

arXiv cs.LG / 3/30/2026

Key Points

- The paper argues that GNNs that rely on explicit topology representations (adjacency matrix or Laplacian) are vulnerable to small graph-structure perturbations that can substantially change model outputs in deployment settings.

- It proposes PEANUT, a simple, gradient-free, restricted black-box evasion attack that injects virtual nodes to exploit eigenvalue alignment with the GNN’s topology-driven message-passing mechanism.

- PEANUT is designed to be practical for real attackers because it operates at inference time, avoids lengthy iterative optimization or parameter learning, and does not require training surrogate models.

- The attack does not need node features on the injected nodes (zero features still work), underscoring that connectivity/structure alone can be sufficient to degrade GNN performance.

- Experiments on multiple real-world datasets across three graph tasks reportedly show PEANUT’s effectiveness despite its simplicity.
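
To make the structure-only point concrete, here is a minimal NumPy sketch of how one injected node with all-zero features can still shift the output of a GCN-style propagation step. It is not PEANUT itself: the summary does not describe how the paper selects connections, so the target nodes here are chosen by hand, and the function names (`normalized_adjacency`, `inject_zero_feature_node`), the toy path graph, and the self-loop normalization are all assumptions made for illustration.

```python
import numpy as np

def normalized_adjacency(adj):
    """GCN-style symmetric normalization: D^{-1/2} (A + I) D^{-1/2}."""
    a_hat = adj + np.eye(adj.shape[0])      # add self-loops
    d_inv_sqrt = 1.0 / np.sqrt(a_hat.sum(axis=1))
    return a_hat * d_inv_sqrt[:, None] * d_inv_sqrt[None, :]

def inject_zero_feature_node(adj, feats, targets):
    """Append one virtual node with zero features, linked to `targets`.

    Hypothetical helper: the hand-picked `targets` stand in for whatever
    selection rule the paper uses, which the summary does not specify.
    """
    n = adj.shape[0]
    new_adj = np.zeros((n + 1, n + 1))
    new_adj[:n, :n] = adj
    new_adj[n, targets] = 1.0               # undirected edges to targets
    new_adj[targets, n] = 1.0
    new_feats = np.vstack([feats, np.zeros((1, feats.shape[1]))])
    return new_adj, new_feats

# Toy graph (assumption): a 4-node path 0-1-2-3 with constant features.
adj = np.array([[0, 1, 0, 0],
                [1, 0, 1, 0],
                [0, 1, 0, 1],
                [0, 0, 1, 0]], dtype=float)
feats = np.ones((4, 2))

# One parameter-free propagation step before and after the injection.
clean_out = normalized_adjacency(adj) @ feats
new_adj, new_feats = inject_zero_feature_node(adj, feats, targets=[0, 3])
attacked_out = (normalized_adjacency(new_adj) @ new_feats)[:4]

# Largest change in any original node's propagated representation.
shift = np.abs(attacked_out - clean_out).max()

# Spectra of the propagation operator before and after the injection.
clean_eigs = np.linalg.eigvalsh(normalized_adjacency(adj))
attacked_eigs = np.linalg.eigvalsh(normalized_adjacency(new_adj))

print("max output shift on original nodes:", shift)
print("clean spectrum:   ", np.round(clean_eigs, 3))
print("attacked spectrum:", np.round(attacked_eigs, 3))
```

The injected node contributes nothing through its features, yet the original nodes' outputs move because the new edges change their degrees and therefore the normalization applied to every neighbor's message. The spectrum of the propagation operator changes as well, which is the kind of quantity an eigenvalue-based attack would reason about.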

Related Articles

Black Hat Asia

AI Business

Freedom and Constraints of Autonomous Agents — Self-Modification, Trust Boundaries, and Emergent Gameplay

Dev.to

The Prompt Tax: Why Every AI Feature Costs More Than You Think

Dev.to

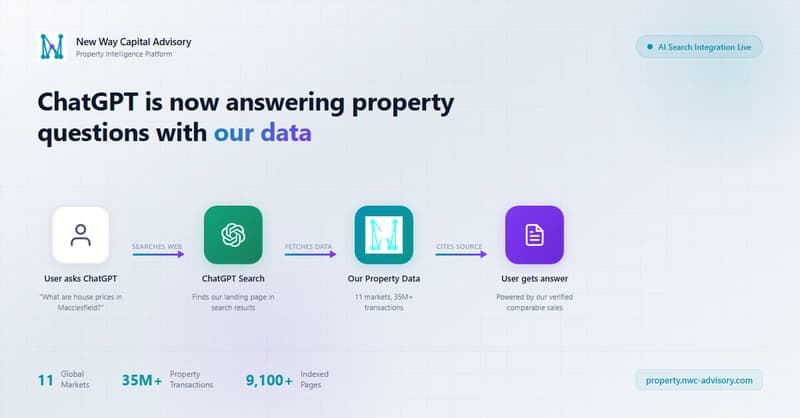

We caught ChatGPT answering property questions with our data -- here's the nginx log proof

Dev.to

[D] Joined UdeM MSCS without MILA affiliation - anyone successfully found a core MILA supervisor in their first semester?

Reddit r/MachineLearning