OmniVoice: Towards Omnilingual Zero-Shot Text-to-Speech with Diffusion Language Models

arXiv cs.CL / 4/3/2026


Key Points

- OmniVoice is a large multilingual, zero-shot text-to-speech (TTS) model designed to cover 600+ languages using a diffusion language model-style, discrete non-autoregressive architecture.

- Instead of a two-stage text-to-semantic-to-acoustic pipeline, it maps input text directly to multi-codebook acoustic tokens, avoiding the performance bottlenecks of the more complex two-stage setup.

- Two design choices improve the model: a full-codebook random masking strategy for training, and initialization from a pre-trained LLM to boost intelligibility.

- Trained on a 581k-hour multilingual dataset curated entirely from open-source data, OmniVoice reports state-of-the-art results across Chinese, English, and multilingual benchmarks.

- The authors provide the code and pre-trained models publicly on GitHub, enabling researchers and developers to evaluate and build upon the approach.
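
To make the masking idea in the key points concrete, here is a minimal sketch of one plausible reading of "full-codebook random masking": every cell of the frame-by-codebook token grid is equally eligible to be masked, rather than masking one codebook level at a time. The summary does not spell out the exact procedure, so the function name `random_mask_all_codebooks`, the `MASK_ID` value, and the fixed `mask_ratio` are all assumptions made for illustration, not the authors' implementation.

```python
import random

# Hypothetical id for the [MASK] token; the real vocabulary layout is not given in the summary.
MASK_ID = -1


def random_mask_all_codebooks(tokens, mask_ratio, rng):
    """Mask a random subset of cells drawn from ALL codebooks at once.

    tokens: list of frames, each frame a list of K codebook token ids.
    mask_ratio: fraction of (frame, codebook) cells to replace with MASK_ID.
    rng: a random.Random instance, passed in for reproducibility.
    Returns (masked_tokens, masked_cells) and leaves `tokens` unmodified.
    """
    num_frames = len(tokens)
    num_codebooks = len(tokens[0]) if tokens else 0

    # Enumerate every cell of the grid so that no codebook level is privileged.
    cells = [(t, k) for t in range(num_frames) for k in range(num_codebooks)]
    num_masked = round(mask_ratio * len(cells))
    masked_cells = set(rng.sample(cells, num_masked))

    masked_tokens = [
        [MASK_ID if (t, k) in masked_cells else tokens[t][k]
         for k in range(num_codebooks)]
        for t in range(num_frames)
    ]
    return masked_tokens, masked_cells


if __name__ == "__main__":
    rng = random.Random(0)
    # Toy grid: 4 frames x 3 codebooks of made-up acoustic token ids.
    grid = [[t * 10 + k for k in range(3)] for t in range(4)]
    masked, cells = random_mask_all_codebooks(grid, mask_ratio=0.5, rng=rng)
    for row in masked:
        print(row)
```

In a training loop of this kind, the model would be asked to predict the original ids at the masked cells given the text and the unmasked cells; the mask ratio would typically be sampled per example rather than fixed, which is again an assumption here.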

Related Articles

Black Hat Asia

AI Business

跳出幸存者偏差,从结构性资源分配解析财富真相

Dev.to

Gemma 4 is seriously broken when using Unsloth and llama.cpp

Reddit r/LocalLLaMA

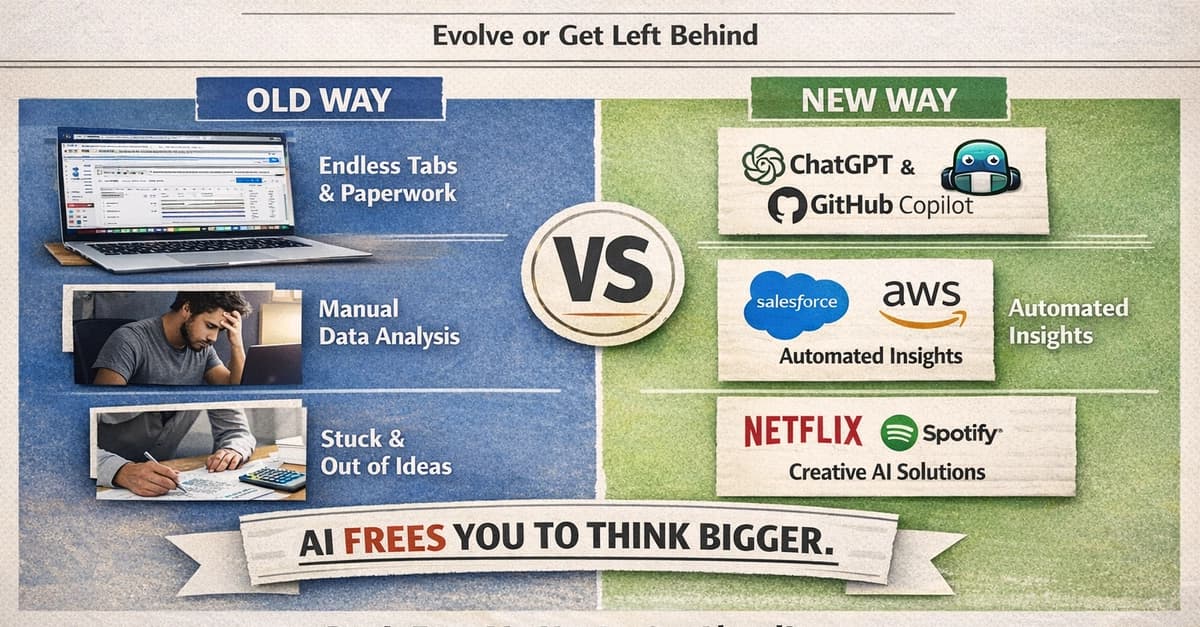

AI vs Old-School Work: My Realization That the Future Won’t Wait

Dev.to

Built an AI “project brain” to run and manage engineering projects solo, how can I make this more efficient?

Reddit r/artificial