LayerCache: Exploiting Layer-wise Velocity Heterogeneity for Efficient Flow Matching Inference

arXiv cs.CV / 4/21/2026


Key Points

- The paper argues that Flow Matching image generation models suffer from high inference cost because every denoising step runs a large Transformer network.

- It finds that Transformer layer groups have highly heterogeneous velocity dynamics, with shallow layers being stable enough for caching while deeper layers require full computation.

- It introduces LayerCache, a layer-aware caching framework that partitions the Transformer into layer groups and makes independent caching decisions per group at each denoising step.

- LayerCache includes an adaptive mechanism for selecting the JVP (Jacobian-vector product) span K, and formulates scheduling over timesteps, layer groups, and K, which it solves with a greedy budget allocation algorithm.

- Experiments on Qwen-Image (1024×1024, 50 steps) show strong quality-speed gains versus MeanCache and prior caching methods, including +5.38 dB PSNR and a 70% LPIPS reduction with 1.37× speedup.
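
The per-group caching decisions and greedy budget allocation described above can be illustrated with a minimal sketch. This is not the paper's algorithm, whose exact formulation is not given in this summary. It assumes a hypothetical table `err[t][g]` estimating the error of reusing the cached output of layer group `g` at step `t`, a hypothetical per-group compute saving `saving[g]`, and a single scalar `error_budget`. The JVP span K is left out for brevity, and all numbers are made up.

```python
from typing import List


def greedy_cache_schedule(
    err: List[List[float]],   # err[t][g]: assumed error of reusing group g's cache at step t
    saving: List[float],      # saving[g]: assumed compute saved by skipping group g once (> 0)
    error_budget: float,      # assumed total error the schedule may spend
) -> List[List[bool]]:
    """Return schedule[t][g] == True where group g reuses its cache at step t."""
    num_steps = len(err)
    num_groups = len(saving)
    schedule = [[False] * num_groups for _ in range(num_steps)]

    # Step 0 is always computed in full, since there is nothing to reuse yet.
    candidates = [
        (err[t][g] / saving[g], t, g)
        for t in range(1, num_steps)
        for g in range(num_groups)
    ]
    # Greedy rule: take the cheapest error per unit of compute saved first.
    candidates.sort()

    spent = 0.0
    for _, t, g in candidates:
        if spent + err[t][g] <= error_budget:
            schedule[t][g] = True
            spent += err[t][g]
    return schedule


# Toy example: group 0 is "shallow" (low reuse error), group 1 is "deep" (high reuse error).
err = [
    [0.0, 0.0],
    [0.1, 0.9],
    [0.2, 1.0],
]
saving = [1.0, 1.0]
schedule = greedy_cache_schedule(err, saving, error_budget=0.35)
for t, row in enumerate(schedule):
    print(t, row)
```

In this toy setup the shallow group is cached at steps 1 and 2 while the deep group is always recomputed, which mirrors the qualitative finding in the key points. A real scheduler would also need measured error estimates and a way to account for error accumulating across consecutive cached steps.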

Related Articles

No Free Lunch Theorem — Deep Dive + Problem: Reverse Bits

Dev.to

Salesforce Headless 360: Run Your CRM Without a Browser

Dev.to

RAG Systems in Production: Building Enterprise Knowledge Search

Dev.to

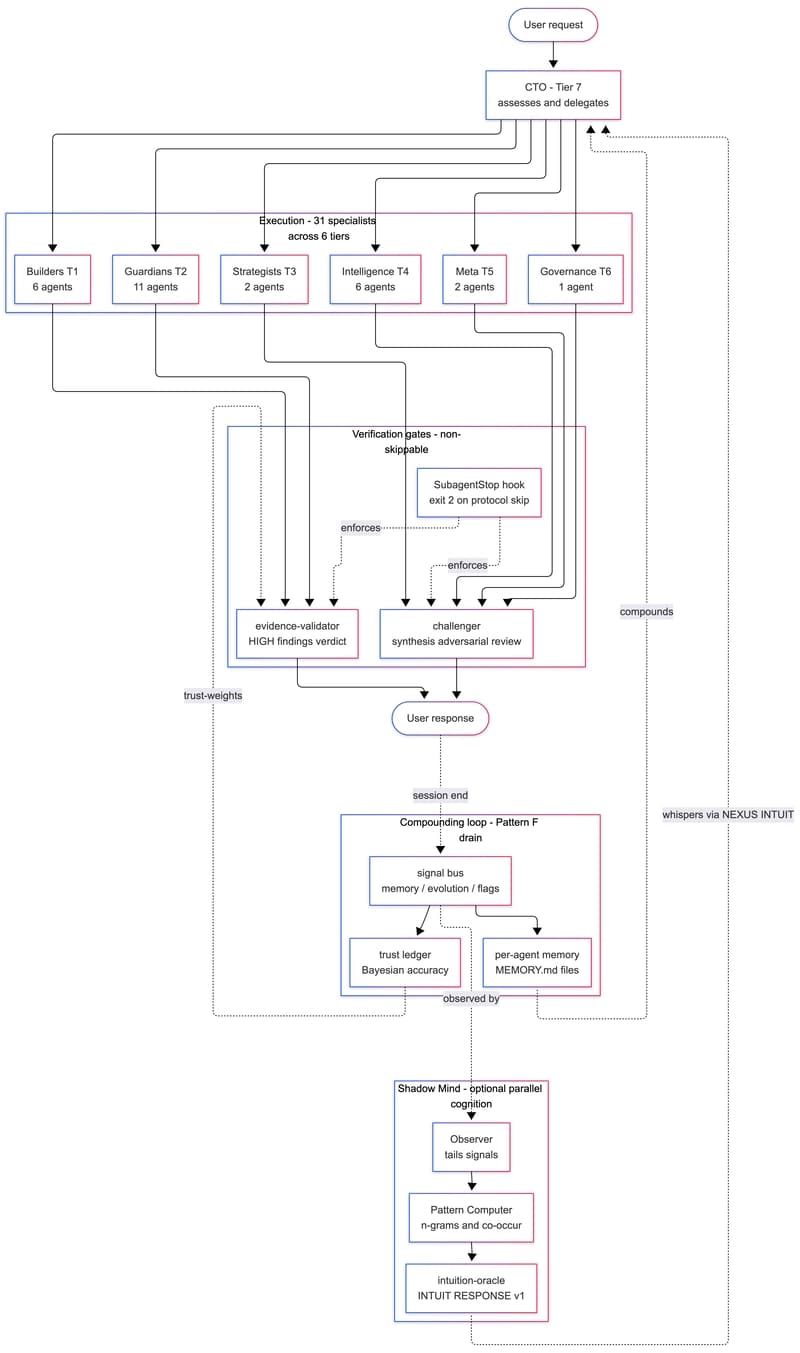

We Built a 31-Agent AI Team That Hires Itself, Critiques Itself, and Dreams

Dev.to

gpt-image-2 API: ship 2K AI images in Next.js for $0.21 (2026)

Dev.to